A voice-controlled robot does not fail only when speech recognition is wrong.

It fails when the whole command loop takes longer than the physical situation can tolerate.

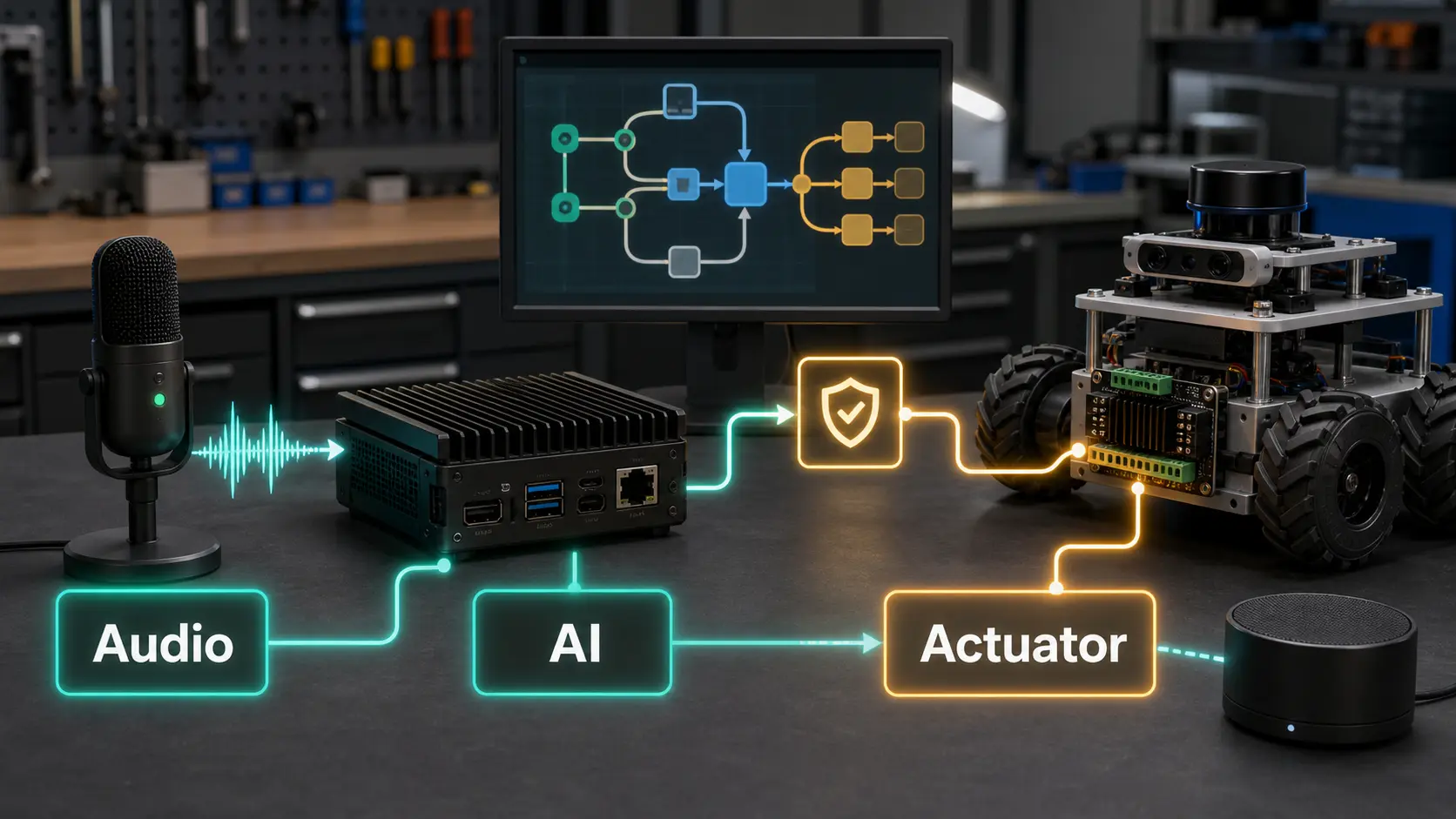

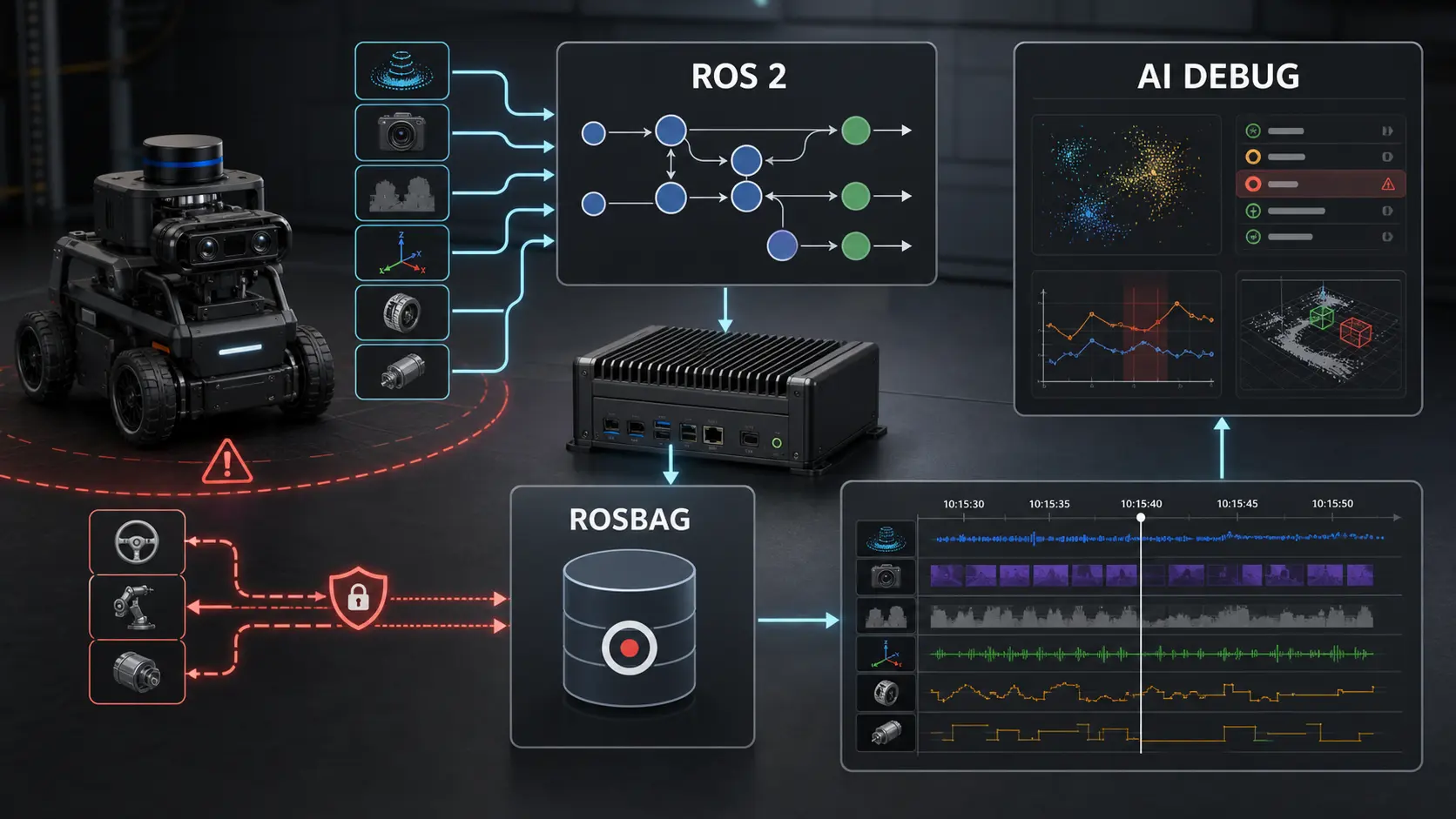

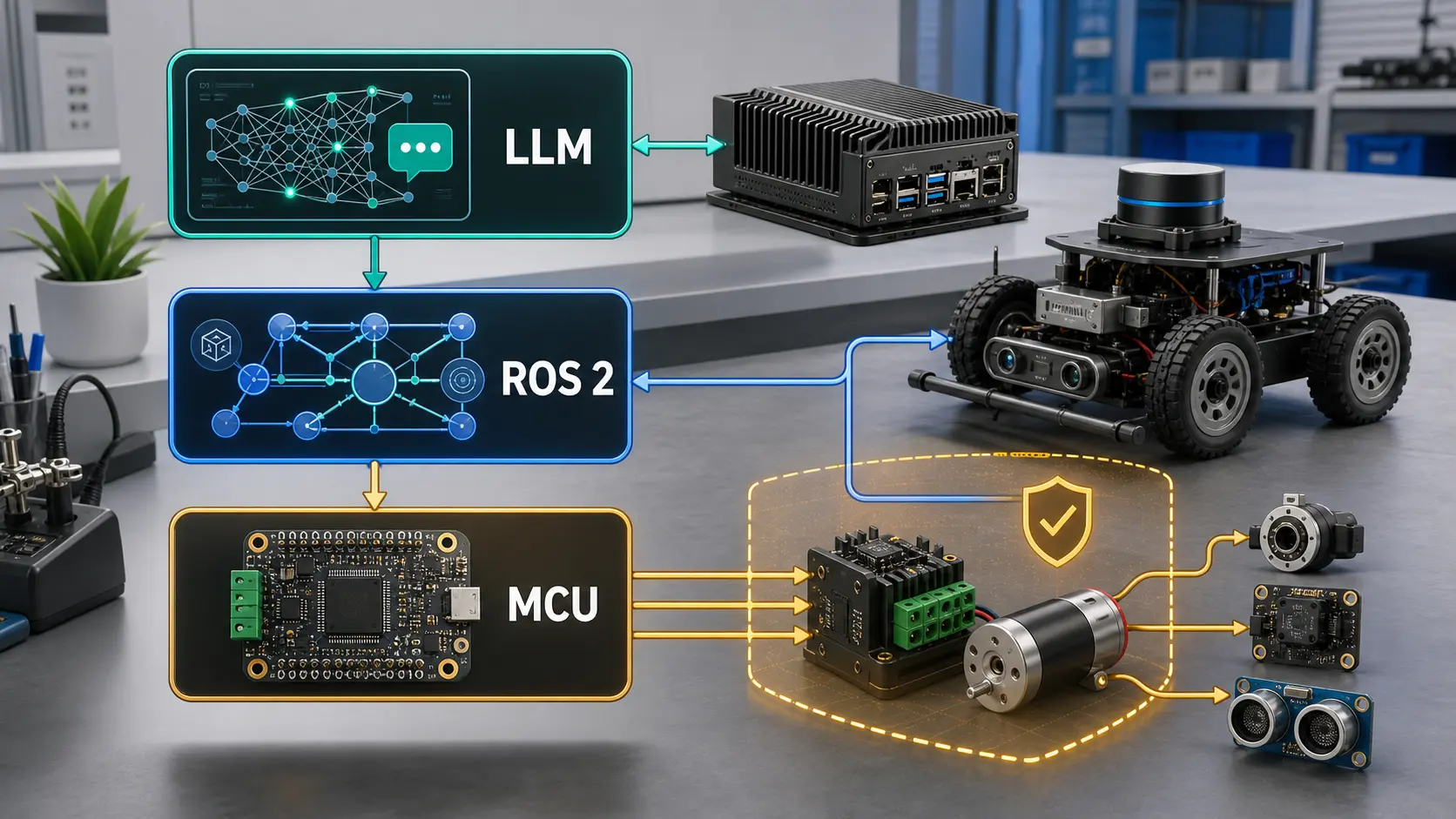

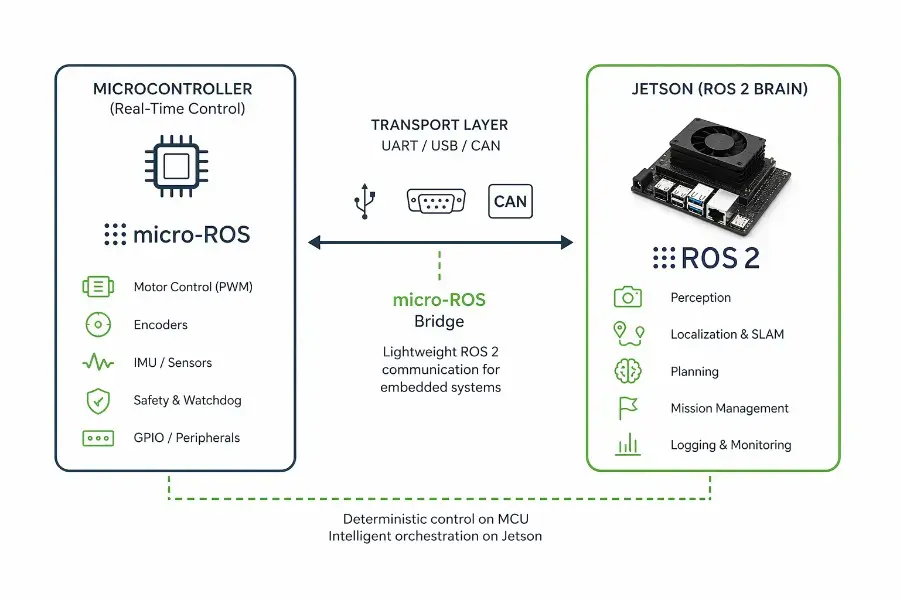

That loop includes wake word detection, voice activity detection, audio buffering, speech-to-text, intent parsing, safety validation, ROS 2 goal dispatch, actuator admission, and user feedback. Each stage can be individually “fast enough” while the combined system still feels sluggish, unsafe, or impossible to debug.
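A minimal sketch of that last point, assuming a simple additive latency model in which the stages run strictly one after another. The stage names come from the list above; every number, the `Stage` type, the `check_loop` helper, and the 600 ms end-to-end budget are hypothetical values chosen for illustration, not measurements from a real robot.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    latency_ms: int  # hypothetical observed latency for this stage
    budget_ms: int   # hypothetical budget this stage was given on its own


def check_loop(stages, loop_budget_ms):
    """Return (total latency, stages over their own budget, loop within budget?)."""
    total = sum(s.latency_ms for s in stages)
    over_stage_budget = [s.name for s in stages if s.latency_ms > s.budget_ms]
    return total, over_stage_budget, total <= loop_budget_ms


# Illustrative numbers only: each stage stays under its individual budget.
STAGES = [
    Stage("wake word detection", 80, 100),
    Stage("voice activity detection", 120, 150),
    Stage("audio buffering", 90, 100),
    Stage("speech-to-text", 280, 300),
    Stage("intent parsing", 40, 50),
    Stage("safety validation", 25, 30),
    Stage("ROS 2 goal dispatch", 45, 50),
    Stage("actuator admission", 35, 50),
    Stage("user feedback", 90, 100),
]

# Assumed end-to-end tolerance for the physical situation.
LOOP_BUDGET_MS = 600

if __name__ == "__main__":
    total, over, ok = check_loop(STAGES, LOOP_BUDGET_MS)
    print(f"total={total} ms, stages over budget={over}, loop ok={ok}")
```

With these made-up numbers no single stage exceeds its own budget, yet the summed loop exceeds the assumed end-to-end tolerance. A real pipeline may overlap stages (for example, streaming speech-to-text while audio is still being buffered), so a plain sum is an upper-bound approximation rather than an exact model.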