If you are building a real robot, you eventually hit the same architectural wall.

On one side, you have a powerful Linux computer such as an NVIDIA Jetson. It is perfect for perception, planning, orchestration, logging, UI, and all the high-level software that makes a robot feel intelligent.

On the other side, you have the physical world.

And the physical world does not care that your Jetson has GPU acceleration.

Motors still need deterministic update loops. Encoders still need precise timestamping. Safety interlocks still need to fire even when Linux is busy doing something “smart.” GPIOs, UART links, CAN frames, PWM generation, watchdogs, and sensor acquisition all live much closer to the metal.

That is exactly where micro-ROS becomes interesting.

If you already have the low-level building blocks in place, such as UART, GPIO, or CAN bus, but you do not yet have the architectural bridge between a real-time microcontroller and a ROS 2 system on Jetson, micro-ROS is one of the cleanest answers. It is, in practice, ROS 2 for microcontrollers.

And for a cyber-physical system, that makes it a very serious topic.

Why micro-ROS matters in a CPS architecture

I think a lot of robotics projects become messy because teams mix two very different responsibilities:

- high-level reasoning

- low-level deterministic control

That split is not philosophical. It is electrical, temporal, and architectural.

A Jetson should usually own:

- perception

- SLAM or localization

- planning

- mission logic

- speech / VLM / LLM layers

- operator interfaces

- ROS 2 orchestration

A microcontroller should usually own:

- hard or firm real-time loops

- PWM generation

- encoder capture

- local sensor polling

- watchdog behavior

- fault shutdown paths

- actuator state machines

- bus-level device handling

If you try to force all of that into Linux, you eventually rediscover latency, jitter, scheduling delays, driver behavior, and the unpleasant reality that “fast” is not the same thing as “deterministic.”

I already wrote about that distinction in Real-Time Linux for Robotics - Latency, Jitter, Scheduling, and What Actually Breaks. Linux on Jetson can absolutely be real-time enough for many robotics workloads. But it is not magic. For the most timing-sensitive loops, a microcontroller still wins by design.

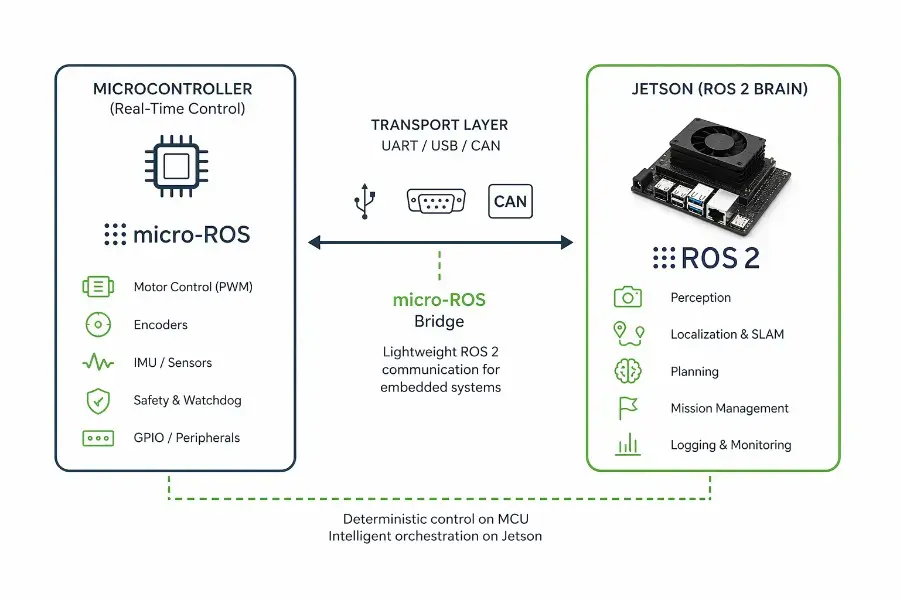

That is why the architecture I trust most in practice looks like this:

- Jetson runs the ROS 2 brain

- MCU runs the real-time body

- micro-ROS makes the MCU a first-class ROS 2 participant

That third point is the real unlock.

What micro-ROS actually is

At a practical level, micro-ROS lets a microcontroller participate in a ROS 2 graph with familiar concepts:

- publishers

- subscribers

- services

- timers

- parameters in some setups

- standard message types

- custom interfaces when needed

It is not “full desktop ROS 2 squeezed into an MCU.” That would be the wrong mental model.

The right mental model is this:

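micro-ROS gives you a constrained ROS 2 client stack adapted for deeply embedded systems, so your MCU can speak ROS-native concepts instead of forcing you to invent a custom bridge protocol for every robot. In the standard setup, the MCU runs a lightweight client, and a micro-ROS Agent on the Linux side relays its traffic into the ROS 2 graph.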

That matters a lot.

Without micro-ROS, many teams build a homemade protocol between Jetson and MCU over UART, CAN, or USB serial. That can work. I have done it. Sometimes it is even the correct choice.

But those custom bridges age badly.

They start simple:

- one command message

- one telemetry packet

- one checksum

- one parser

Then the robot grows.

Now you need:

- versioning

- new message types

- state transitions

- debug visibility

- retry logic

- command semantics

- introspection

- better tooling

- integration with the rest of your ROS 2 graph

And suddenly your “lightweight serial bridge” is an undocumented middleware stack that only one person still understands.

micro-ROS is attractive because it moves that bridge back into the ROS 2 ecosystem.

The core idea: the MCU becomes a ROS 2 edge node

This is the shift that makes the architecture cleaner.

Instead of treating the MCU as a dumb peripheral, I prefer to treat it as a deterministic edge compute node.

That MCU can now expose meaningful ROS-side behavior such as:

- publishing wheel encoder ticks

- subscribing to motor setpoints

- publishing IMU data

- exposing a service for actuator homing

- reporting heartbeat and fault status

- driving local safety state machines

- timestamping sensor events close to hardware

The Jetson remains the system brain, but the MCU is no longer an opaque black box behind an ad hoc parser. It becomes a structured subsystem inside the overall ROS 2 architecture.

If you have read my article on ROS 2 Architecture Patterns That Scale, this should feel familiar. Good robotics systems scale when boundaries are explicit. micro-ROS is valuable because it makes the boundary between embedded control and ROS orchestration much more explicit.

Where micro-ROS fits in a Jetson-based robot

Let me make this concrete.

Imagine a robot built around an NVIDIA Jetson Orin Nano.

The Jetson runs:

- ROS 2

- camera drivers

- perception

- navigation

- voice or AI modules

- mission logic

- logging

- dashboards

A microcontroller board runs:

- motor PWM

- quadrature encoder capture

- e-stop monitoring

- current sensing

- local LED / relay control

- time-critical polling

- actuator watchdog logic

The Jetson and MCU communicate through one of several transports:

- UART

- USB CDC serial

- CAN

- Ethernet in some advanced cases

At that point, micro-ROS lets you keep the physical separation without losing ROS-native integration.

This is especially compelling on Jetson because Jetson is excellent at high-level compute, but it is not the platform I want to trust on its own with the most timing-sensitive loops.

Before micro-ROS: you still need the physical transport right

micro-ROS is not a substitute for electrical engineering.

You still need a real transport underneath it.

That is why the low-level work matters first.

If you are coming from a Jetson-first robotics stack, these earlier foundations are not optional:

- UART protocol - Theory + Hands-On on Nvidia Jetson Orin Nano

- Enabling GPIO Output Pins on Nvidia Jetson Orin Nano Super

- CAN Bus vs UART vs I2C vs SPI in Robotics - Which One Should You Use?

Those articles cover the physical layer that this bridge is built on.

Because micro-ROS does not eliminate questions like:

- Is my UART voltage level correct?

- Is this link robust enough for field wiring?

- Should this actuator network really be on CAN instead of serial?

- Where does timestamping happen?

- What fails safely if the Jetson reboots?

- What happens when the transport stalls?

If those questions are unresolved, adding ROS abstractions on top does not save you.

It just hides the instability under better vocabulary.

micro-ROS vs a custom UART bridge

This is the question I would ask first in any real project:

Should I use micro-ROS, or should I just build a custom serial protocol?

My answer is: it depends on how “ROS-native” the subsystem needs to become.

A custom bridge is often enough when

- the MCU only exposes a very small command set

- message semantics are simple and stable

- you want total control over framing and bandwidth

- you are optimizing for minimal footprint

- the subsystem is intentionally isolated

Example: a tiny board that only accepts set_motor_pwm(left, right) and returns a compact telemetry packet.

That can be perfectly fine.
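To show how small such a bridge can start, here is a minimal sketch of one framed command. It is written in Python for readability; the MCU side would mirror it in C. The frame layout, the sync byte, the command id, and the XOR checksum are all assumptions I made up for illustration, not an established protocol.

```python
import struct
from typing import Tuple

SYNC = 0xA5                # hypothetical start-of-frame marker
CMD_SET_MOTOR_PWM = 0x01   # hypothetical command id
FRAME_LEN = 7              # sync + command + 2 x int16 + checksum


def checksum(data: bytes) -> int:
    """XOR of all bytes: cheap to compute, but much weaker than a CRC."""
    result = 0
    for byte in data:
        result ^= byte
    return result


def encode_set_motor_pwm(left: int, right: int) -> bytes:
    """Build one frame with two signed 16-bit PWM values, little-endian."""
    body = struct.pack("<BBhh", SYNC, CMD_SET_MOTOR_PWM, left, right)
    return body + bytes([checksum(body)])


def decode_set_motor_pwm(frame: bytes) -> Tuple[int, int]:
    """Validate and unpack one frame, raising ValueError on any mismatch."""
    if len(frame) != FRAME_LEN:
        raise ValueError("unexpected frame length")
    body, received = frame[:-1], frame[-1]
    if checksum(body) != received:
        raise ValueError("checksum mismatch")
    sync, command, left, right = struct.unpack("<BBhh", body)
    if sync != SYNC or command != CMD_SET_MOTOR_PWM:
        raise ValueError("unexpected header")
    return left, right
```

Seven bytes per command is hard to beat on footprint. Notice what is already missing, though: there is no version field, no sequence number, and no way to discover what the board supports. Those gaps are where a bridge like this tends to start growing.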

micro-ROS is usually better when

- the MCU should behave like a ROS-aware subsystem

- you want structured publishers / subscribers / services

- you expect the robot to grow in complexity

- you want cleaner integration with the ROS 2 graph

- observability and maintainability matter more than absolute minimalism

- multiple engineers will touch the system over time

In other words:

- a custom bridge is often optimal for a narrow device

- micro-ROS is often optimal for a growing robotic subsystem

I would not frame this as ideology. It is a lifecycle decision.

Why micro-ROS is a strong fit for cyber-physical systems

CPS engineering is all about where software meets timing, sensing, actuation, energy, and failure modes.

micro-ROS fits that world well because it respects a reality that pure software people often underestimate:

not every node should run on Linux, but the whole system still needs one coherent language.

ROS 2 is that language for many robot stacks.

micro-ROS extends that language into embedded hardware.

That gives you a much cleaner distributed system model:

- Linux nodes for rich compute

- MCU nodes for deterministic interaction with hardware

- one architectural vocabulary across both worlds

This is exactly why I consider micro-ROS a pure CPS subject rather than “just another embedded framework.”

It is not only about code reuse.

It is about aligning control boundaries, timing boundaries, and software boundaries.

A practical architecture pattern that I like

Here is a pattern that tends to age well.

On the Jetson side

Run high-level ROS 2 nodes such as:

- perception

- localization

- planning

- mission supervisor

- UI / telemetry bridge

- logging / rosbag

- diagnostics aggregator

On the MCU side with micro-ROS

Run narrowly scoped embedded nodes such as:

- motor controller interface

- encoder publisher

- IMU publisher

- bumper / limit switch monitor

- power and fault monitor

- actuator homing service

Between them

Use a transport chosen for the actual robot constraints:

- UART / USB serial for simpler point-to-point systems

- CAN when robustness, distributed nodes, and noise tolerance matter more

- Ethernet only when the embedded target and system design justify it

Most important design rule

Keep the MCU responsible for local deterministic behavior, not just I/O forwarding.

That means the MCU should not merely relay bytes.

It should own real embedded logic such as:

- enforcing actuator limits

- timing control loops

- filtering local faults

- applying watchdog timeouts

- refusing unsafe commands

- keeping the plant stable during transient Jetson-side issues

That is the difference between “an MCU connected to ROS” and “a properly engineered embedded control tier.”

What should stay on the microcontroller

This is where many architectures fail.

Once people get ROS messages flowing, they are tempted to push too much logic upward to the Jetson.

I think that is usually a mistake.

The following should generally stay on the MCU:

1. Fast control loops

If a loop has strict timing requirements, keep it local.

Examples:

- motor current loop

- wheel velocity loop

- servo update loop

- edge-triggered safety stop response
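To see why timing matters here, consider a minimal sketch of one step of a PI velocity loop. It is Python for readability; real firmware would be C running from a timer interrupt. The gains, the period, and the conditional-integration anti-windup are illustrative choices of mine, not a recommended tuning.

```python
from dataclasses import dataclass


@dataclass
class PIController:
    kp: float
    ki: float
    dt: float            # fixed loop period in seconds
    out_limit: float     # symmetric actuator limit, e.g. normalized PWM duty
    integral: float = 0.0

    def update(self, setpoint: float, measured: float) -> float:
        """Run one control step and return the clamped actuator command."""
        error = setpoint - measured
        candidate = self.integral + error * self.dt
        output = self.kp * error + self.ki * candidate
        if abs(output) <= self.out_limit:
            # Not saturated: commit the new integral term.
            self.integral = candidate
            return output
        # Saturated: clamp and keep the previous integral (simple anti-windup).
        return max(-self.out_limit, min(self.out_limit, output))
```

The integral term multiplies the error by dt, so the controller assumes every step arrives exactly dt apart. If the loop runs on a scheduler that delivers steps late or in bursts, the effective gains drift with the jitter. That is the practical reason to keep this loop on the MCU.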

2. Hardware-near acquisition

Anything that benefits from precise hardware timing should stay close to the pins:

- encoder counting

- pulse width measurement

- timestamped interrupts

- deterministic ADC sampling sequences
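Encoder counting is a good illustration. The sketch below decodes a quadrature signal with a state-transition table, written in Python so the logic is easy to follow. Many MCUs do this in a hardware timer peripheral instead, and which direction counts as positive is an arbitrary convention I picked here.

```python
# Indexed by (previous_state << 2) | new_state, where state = (A << 1) | B.
# +1 / -1 are valid single steps; 0 means no change or an invalid jump.
_TRANSITION = (0, +1, -1, 0,
               -1, 0, 0, +1,
               +1, 0, 0, -1,
               0, -1, +1, 0)


class QuadratureDecoder:
    def __init__(self, a: int = 0, b: int = 0):
        self.state = (a << 1) | b
        self.count = 0
        self.errors = 0

    def sample(self, a: int, b: int) -> int:
        """Feed one (A, B) pin sample and return the running tick count."""
        new_state = (a << 1) | b
        delta = _TRANSITION[(self.state << 2) | new_state]
        if delta == 0 and new_state != self.state:
            # Both channels changed at once: at least one edge was missed.
            self.errors += 1
        self.count += delta
        self.state = new_state
        return self.count
```

The errors counter is the interesting part. It only stays at zero if every edge is sampled in time, which is easy with pin interrupts or a timer peripheral on an MCU and much harder to promise from a Linux userspace process.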

3. Safety fallbacks

If the Jetson crashes, reboots, hangs, or restarts containers, the robot should degrade safely.

That means local logic should be able to do things like:

- cut actuation after heartbeat loss

- hold brakes

- stop motion

- enforce rate limits

- reject invalid command ranges
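The last two items can be sketched in a few lines. This is a minimal Python illustration of range rejection plus rate limiting; the class name, the limits, and the policy of holding the last applied value when a command is rejected are assumptions of mine. A real system might ramp to zero instead.

```python
from dataclasses import dataclass


@dataclass
class SetpointGuard:
    max_abs: float        # largest setpoint magnitude accepted
    max_step: float       # largest change applied per control tick
    applied: float = 0.0  # setpoint currently sent to the actuator

    def accept(self, requested: float) -> bool:
        """True if the request is in range. NaN and infinity fail this test."""
        return abs(requested) <= self.max_abs

    def step(self, requested: float) -> float:
        """Move the applied setpoint toward the request by at most max_step."""
        target = requested if self.accept(requested) else self.applied
        delta = max(-self.max_step, min(self.max_step, target - self.applied))
        self.applied += delta
        return self.applied
```

With max_abs=1.0 and max_step=0.25, a sudden request for full speed is applied as 0.25, then 0.5, and so on, while a request for 5.0 is ignored entirely. The point is that this check runs on the MCU, so it still holds when the node that sent the command is the thing that is broken.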

4. Bus management

If the MCU is directly interfacing with CAN nodes, motor drivers, or field devices, it often makes sense for it to manage those transactions locally and publish higher-level state upward.

What should stay on the Jetson

The Jetson should own what it is uniquely good at.

Examples:

- multi-sensor fusion

- computer vision

- semantic reasoning

- route planning

- behavior trees

- voice interfaces

- global mission state

- logging and replay

- operator tooling

- fleet or cloud connectivity

This division keeps the system honest.

The MCU keeps the robot physically stable.

The Jetson keeps the robot behaviorally intelligent.

That is a healthy architecture.

Transport choice: UART or CAN for micro-ROS?

There is no universal answer, but there is a useful rule of thumb.

UART is attractive when

- your topology is simple

- one Jetson talks to one MCU

- cable distances are short

- cost and simplicity matter

- you want the fastest path to a working prototype

For many early robot builds, UART is the obvious first step. It is cheap, common, and easy to debug with logic analyzers and serial tools.
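Before committing to UART, it is worth doing the bandwidth arithmetic. The sketch below estimates link utilization, assuming standard 8N1 framing (one start bit, eight data bits, one stop bit, so ten bits on the wire per byte). The message sizes and rates in the usage note are made-up examples, and they need to include whatever serialization and framing overhead your stack adds, which this sketch does not model.

```python
from typing import Iterable, Tuple


def uart_payload_rate(baud: int, bits_per_byte: int = 10) -> float:
    """Bytes per second the link can carry; 10 bits per byte assumes 8N1."""
    return baud / bits_per_byte


def link_utilization(baud: int, streams: Iterable[Tuple[int, float]]) -> float:
    """Fraction of the link used by streams of (bytes_per_message, messages_per_second)."""
    demand = sum(size * rate for size, rate in streams)
    return demand / uart_payload_rate(baud)
```

As an example, three hypothetical streams of (32 bytes, 100 Hz), (48 bytes, 100 Hz), and (16 bytes, 50 Hz) add up to 8800 bytes per second. At 115200 baud the link carries 11520 bytes per second, so that traffic already uses about 76% of it, which leaves little headroom for retries or bursts.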

CAN is attractive when

- the robot is electrically noisy

- wiring is longer or more distributed

- multiple embedded nodes may share the bus

- fault tolerance matters more

- you want a fieldbus mindset rather than a direct point-to-point link

For mobile robots, industrial environments, and distributed actuator setups, CAN often feels more “native” to the physical system.

If you are still deciding between these buses, my full trade-off breakdown is here: CAN Bus vs UART vs I2C vs SPI in Robotics - Which One Should You Use?

micro-ROS does not remove real-time thinking

This part is important enough to state plainly:

micro-ROS is not a shortcut around real-time systems engineering.

It does not automatically guarantee:

- bounded latency

- deterministic scheduling

- safe control partitioning

- fault containment

- deadline satisfaction

You still need to define those explicitly.

For example:

- what heartbeat timeout stops the motors?

- what happens if the Jetson misses 300 ms of commands?

- what happens if the MCU reboots mid-motion?

- what data is best-effort telemetry versus control-critical?

- what command path is authoritative?

- where is the safety state machine implemented?

- where are timestamps generated?

These are CPS questions before they are ROS questions.

micro-ROS helps the software architecture. It does not erase the physics.
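One way to force answers to the first few questions is to write the heartbeat behavior down as code. Here is a minimal Python sketch of a watchdog that latches a stop on heartbeat loss and requires an explicit re-arm. The class, the state names, and the latching policy are my own illustration; time is passed in as an argument so the logic is deterministic, where firmware would read a monotonic tick counter.

```python
from enum import Enum
from typing import Optional


class DriveState(Enum):
    STOPPED = "stopped"
    RUNNING = "running"


class HeartbeatWatchdog:
    def __init__(self, timeout_s: float):
        self.timeout_s = timeout_s
        self.state = DriveState.STOPPED   # start in the safe state
        self.last_beat: Optional[float] = None

    def _fresh(self, now: float) -> bool:
        return self.last_beat is not None and (now - self.last_beat) <= self.timeout_s

    def heartbeat(self, now: float) -> None:
        """Record a heartbeat from the Jetson. This alone never starts motion."""
        self.last_beat = now

    def arm(self, now: float) -> bool:
        """Explicit request to run; refused unless a heartbeat is fresh."""
        if not self._fresh(now):
            return False
        self.state = DriveState.RUNNING
        return True

    def tick(self, now: float) -> DriveState:
        """Call from the control loop; latches STOPPED on heartbeat loss."""
        if self.state is DriveState.RUNNING and not self._fresh(now):
            self.state = DriveState.STOPPED
        return self.state
```

With timeout_s=0.3, a Jetson that goes silent for 300 ms stops the drive, and a heartbeat that comes back afterwards does not restart it until something calls arm() again. Whether a latch is right for your robot is exactly the kind of decision that belongs in the design, not in an accident of the transport.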

A good first micro-ROS use case on Jetson

If I had to choose a first serious but manageable use case, I would pick this:

Differential drive base controller

The MCU handles:

- left/right motor control loops

- encoder reading

- heartbeat watchdog

- current or fault monitoring

- low-level odometry inputs such as per-wheel tick counts

The Jetson handles:

- velocity command generation

- navigation

- mapping

- obstacle avoidance

- higher-level state machines

- logging and visualization

micro-ROS becomes the structured interface between the two.

This is a very natural architecture because the separation is clean:

- Jetson decides where to go

- MCU decides how to drive the actuators safely right now

That is exactly the kind of split that tends to survive contact with reality.
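The math at this boundary is small. As a minimal sketch, here is the standard differential-drive conversion from a body velocity command to wheel speeds, plus the tick-to-distance conversion that feeds odometry. The function names and parameters are hypothetical. Positive angular velocity is taken as counter-clockwise, following the usual ROS convention, and I use Python here for readability even though this would run as C on the MCU.

```python
import math
from typing import Tuple


def twist_to_wheel_speeds(linear: float, angular: float,
                          track_width: float) -> Tuple[float, float]:
    """Body twist (m/s, rad/s) to (left, right) wheel ground speeds in m/s."""
    half = angular * track_width / 2.0
    return linear - half, linear + half


def ticks_to_distance(ticks: int, ticks_per_rev: int,
                      wheel_radius: float) -> float:
    """Distance in metres rolled by a wheel for a given encoder tick count."""
    return 2.0 * math.pi * wheel_radius * ticks / ticks_per_rev
```

The Jetson only needs to send the two numbers in the twist. Everything after that, including the per-wheel control loops and limits, can stay local to the MCU.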

Where this fits with Isaac ROS and Jetson AI stacks

If your Jetson is already running a modern ROS 2 stack, especially on NVIDIA hardware, micro-ROS fits naturally underneath it.

That includes systems where Jetson is already used for:

- camera pipelines

- accelerated inference

- depth processing

- visual SLAM

- AI interaction layers

If that is your world, these articles are directly adjacent:

- ROS 2.0 and Isaac ROS on NVIDIA Jetson Orin Nano Super

- Containerizing Robotic Systems Without Losing Your Mind

- Running Piper TTS in ROS 2 on NVIDIA Jetson Orin Nano with Low Latency

The important point is this:

As the Jetson side becomes richer and more complex, the value of having a clean embedded ROS edge usually goes up, not down.

Because complexity at the top makes clean boundaries at the bottom even more important.

Common mistake: treating the MCU like a transparent wire adapter

I see this a lot.

A team adds a microcontroller, but architecturally it is still treated as nothing more than:

- a UART parser

- a pin expander

- a “thing that forwards sensor bytes”

That leaves a lot of value on the table.

A better mindset is to treat the MCU as a real subsystem with local authority.

That means:

- it owns timing where timing matters

- it owns fault behavior where fault behavior must be immediate

- it exports structured state upward

- it refuses unsafe commands locally

- it remains useful even if the Jetson is degraded

Once you think that way, micro-ROS starts making much more sense.

Because now you are not just exposing registers.

You are exposing a meaningful embedded subsystem into the ROS graph.

When should I use micro-ROS?

Here is my honest answer.

Use micro-ROS when all of these are becoming true:

- your robot already speaks ROS 2 on the high-level side

- your low-level hardware needs an MCU anyway

- you are tired of ad hoc serial protocols aging badly

- you want the MCU to expose structured ROS concepts

- your system is growing beyond a one-off prototype

- maintainability matters as much as getting bytes across

Do not assume you need micro-ROS if your robot is tiny and static.

A minimalist serial bridge can still be the right engineering choice.

But once the embedded layer becomes a durable subsystem rather than a throwaway helper board, micro-ROS becomes much more compelling.

The bridge, in one sentence

If UART, GPIO, and CAN are the physical bricks, then micro-ROS is the architectural grammar that lets those bricks join a ROS 2 robot cleanly.

That is why I think it deserves its own place in a Jetson-centric robotics blog.

It is the missing bridge between:

- bare-metal hardware control

- Linux-based robotic intelligence

And for cyber-physical systems, that bridge is exactly where the interesting engineering lives.

Final thoughts

micro-ROS is not exciting because it is fashionable.

It is exciting because it solves a very concrete systems problem:

how do I connect deterministic embedded control to a ROS 2 brain without turning the boundary into protocol spaghetti?

For me, that is the real value proposition.

On Jetson, I want Linux and ROS 2 for everything they are good at:

perception, orchestration, AI, planning, observability, and system-level behavior.

But I still want microcontrollers for everything they are better at:

tight loops, hardware-near timing, watchdogs, local safety, and electrical reality.

micro-ROS is powerful because it allows both worlds to stay good at what they do best, while still participating in one coherent robotics architecture.

That is not just convenient.

That is good CPS design.

Related articles

- UART protocol - Theory + Hands-On on Nvidia Jetson Orin Nano

- Enabling GPIO Output Pins on Nvidia Jetson Orin Nano Super

- CAN Bus vs UART vs I2C vs SPI in Robotics - Which One Should You Use?

- ROS 2 Architecture Patterns That Scale

- Real-Time Linux for Robotics - Latency, Jitter, Scheduling, and What Actually Breaks

- ROS 2.0 and Isaac ROS on NVIDIA Jetson Orin Nano Super