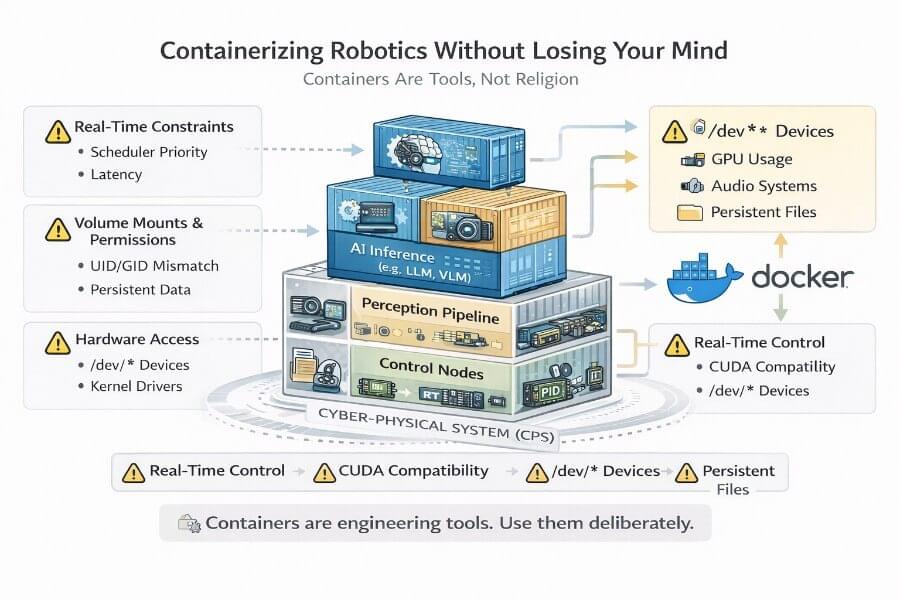

Containers Are Tools, Not Religion in Cyber-Physical Systems

Containerization has become almost ideological in modern software engineering. In web infrastructure, “just Dockerize it” is often the correct answer. In robotics, that mindset can either save you months of pain — or create subtle, catastrophic problems that only appear under load, in the field, or during a live demo.

Robotics is not backend development. A robot is not a stateless service. A robot is a Cyber-Physical System (CPS).

A CPS integrates:

Software

Operating system

Kernel drivers

Real-time scheduling

Physical actuators

Sensors

Power systems

Thermal constraints

Timing guarantees

In a CPS, computational and physical elements are deeply intertwined, and that integration is what makes advanced functionality possible.

When you containerize robotics, you are not just isolating Python dependencies. You are inserting an abstraction layer between computation and physics, in a system whose physical and software components operate on different spatial and temporal scales.

This article goes deep into:

What to containerize (and what absolutely not to)

GPU access and CUDA alignment on Jetson

Real-time constraints and scheduler implications

Volume mounts, UID/GID pitfalls, and persistence strategy

Hardware access from inside containers

Debugging methodology when everything breaks

Designing a sane, production-grade container architecture

The thesis is simple:

Containers are tools. They are not a religion. Use them deliberately, not dogmatically.

1. Robotics Is Not Cloud Infrastructure

To understand containerization in robotics, you must first understand why robotics behaves differently from typical distributed systems.

In cloud systems:

Hardware is abstracted

Latency variations are tolerable

Workloads are mostly stateless

Network jitter is manageable

Failures can often be retried

Cloud environments also benefit from flexible network connectivity, which enables elastic scaling and resource management. That flexibility matters far less in robotics, where real-time constraints dominate.

In robotics:

Hardware is the system

Latency destabilizes controllers

State is continuous and physical

Deadlines are real

Failures can break hardware or injure people

A robotic stack touches:

/dev/video* (cameras)

/dev/tty* (microcontrollers, motor boards)

/dev/i2c-* (sensors)

/dev/gpiochip*

/dev/snd (audio)

/dev/nvhost* (Jetson GPU)

Shared memory segments

Kernel scheduling policies

You are not orchestrating microservices.

You are orchestrating electrons.

Containers add isolation layers:

Namespaces

Cgroups

Virtual networking

Filesystem overlays

These abstractions are powerful — but they are not free.

2. What to Containerize — and Why

The question is not “Should I use Docker?”

The question is:

Which layers of the CPS benefit from containerization, and which layers should remain close to the metal? The layers in question range from application software, through hardware/software integration, down to control systems.

2.1 AI Inference Services

LLM servers, VLM inference, speech recognition, and TTS systems are excellent candidates for containerization. These services run large AI models for complex decision-making tasks, which makes them well suited to containerized deployment.

Why?

Because they:

Have heavy dependency trees

Require specific CUDA/TensorRT builds

Change frequently

Are logically separable from control loops

For example:

Whisper with CUDA

A TensorRT-optimized YOLO detector

A local LLM server

Containers allow:

Version pinning

Easy rollback

Isolation of Python/conda chaos

Deployment reproducibility

These systems typically operate at:

0.1–10 Hz

Soft real-time constraints

This makes them ideal container candidates.

2.2 Perception Pipelines

Computer vision pipelines often depend on:

OpenCV builds

CUDA versions

TensorRT engines

Custom compiled ROS packages

These pipelines process image data and multimodal inputs to recognize objects in real time, which is critical for robotic perception.

Without containers, dependency drift becomes inevitable.

However:

Perception can be moderately latency sensitive. You must monitor:

Frame drops

DDS overhead

Shared memory transport

Containerizing perception is usually beneficial — but you must benchmark it.
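One way to put a number on frame drops is to compare consecutive frame timestamps against the nominal period. The sketch below assumes you already have a list of receive timestamps in seconds (for example, taken from message headers). The function name and the 1.5x tolerance are illustrative choices, not part of any ROS API.

```python
def count_dropped_frames(timestamps, expected_hz, tolerance=1.5):
    """Estimate how many frames were lost between consecutive timestamps.

    A gap longer than `tolerance` periods is treated as a drop, and the
    number of missing frames is estimated from the gap length.
    """
    period = 1.0 / expected_hz
    dropped = 0
    for prev, cur in zip(timestamps, timestamps[1:]):
        gap = cur - prev
        if gap > tolerance * period:
            # round() absorbs small timestamp jitter around multiples of the period
            dropped += round(gap / period) - 1
    return dropped


# 10 Hz stream with two frames missing between 0.2 s and 0.5 s
stamps = [0.0, 0.1, 0.2, 0.5, 0.6]
print(count_dropped_frames(stamps, expected_hz=10))  # prints 2
```

Running the same measurement on the host and inside the container, with the same camera and load, gives a like-for-like comparison.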

2.3 Simulation and Reinforcement Learning

Simulation stacks (Gazebo, Isaac Sim) are dependency-heavy. These platforms provide a simulated environment in which physical AI and machine learning models are trained on synthetic data.

RL training environments depend on:

Physics engines

CUDA

Python ML frameworks

Training physical AI in these simulated environments is essential for developing robust autonomous systems.

Containers shine here.

They allow:

Experiment isolation

Deterministic training environments

CI reproducibility

Simulation is often compute-bound, not hardware-interfacing.

This makes it container-friendly.

3. What You Should Be Extremely Careful About

Some components do not belong casually inside containers.

3.1 Hard Real-Time Control Loops

Motor control loops running at:

500 Hz

1 kHz

10 kHz

must be deterministic.

Containers introduce:

Scheduler indirection

Cgroup constraints

Potential CPU sharing

Added jitter

For stable motor control:

Firmware-level control is ideal

RT-preempt kernels are preferable

Deterministic scheduling is mandatory

Reliable control algorithms are essential for maintaining system stability

Running a torque loop inside a generic Docker container is architectural negligence.

3.2 Low-Level Hardware Drivers

When developing:

Custom I2C timing routines

SPI communication

GPIO bit-level control

Containers complicate:

Device permissions

udev interactions

Debugging at kernel level

If you are debugging drivers, stay on the host.

4. GPU Access on Jetson: Where Theory Meets Reality

Jetson devices (Orin Nano, Xavier, etc.) differ from desktop GPUs.

They have:

Integrated GPUs, which provide the computational power necessary for advanced AI workloads in robotics

Memory shared between CPU and GPU

JetPack-tied CUDA stacks

Strict driver alignment

Unlike x86 + NVIDIA desktop setups, Jetson requires:

Exact CUDA compatibility

L4T-aligned container bases

Correct runtime configuration

4.1 NVIDIA Container Runtime

You must run:

--runtime nvidia

or configure Docker’s default runtime accordingly.

If you forget:

CUDA won’t be visible

TensorRT won’t initialize

Inference may silently fall back to CPU

On Jetson, debugging the GPU inside containers can be confusing because nvidia-smi is not always available.

Use:

tegrastats

to verify GPU usage.
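If you want to check GPU load from a script rather than by eye, the GR3D_FREQ field in tegrastats output can be parsed. The sketch below is a minimal example. The sample line is illustrative only, since the exact layout of tegrastats output varies by board and JetPack release, and the function name is hypothetical.

```python
import re

# Illustrative tegrastats line; real output varies by board and JetPack release.
SAMPLE = (
    "RAM 2448/7620MB (lfb 4x4MB) SWAP 0/3810MB (cached 0MB) "
    "CPU [12%@1190,8%@1190,off,off] EMC_FREQ 0% GR3D_FREQ 45% "
)


def gpu_load_percent(tegrastats_line):
    """Return the GR3D_FREQ utilization as an int, or None if the field is absent."""
    match = re.search(r"GR3D_FREQ (\d+)%", tegrastats_line)
    return int(match.group(1)) if match else None


print(gpu_load_percent(SAMPLE))  # prints 45
```

A load that stays at 0% while inference is running is a strong hint that the workload has fallen back to CPU.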

4.2 CUDA and JetPack Alignment

JetPack versions tightly couple:

Kernel

CUDA

TensorRT

Drivers

If your container ships CUDA 12.3 but the host runs CUDA 12.2, things break subtly.

Best practice:

Use L4T-based images aligned to your JetPack

Avoid generic nvidia/cuda tags unless verified

Keep a version compatibility table

In robotics, GPU mismatches don’t just crash — they degrade performance unpredictably.
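A coarse alignment check can be automated by reading the host's L4T release and comparing it with the container tag. The sketch below assumes the usual first-line format of /etc/nv_tegra_release and an rX.Y.Z style image tag. Both helper names are hypothetical, and matching on major.minor is a simplification, so NVIDIA's compatibility notes remain the authority.

```python
import re


def parse_l4t_release(text):
    """Extract 'major.minor.patch' from the first line of /etc/nv_tegra_release."""
    match = re.search(r"R(\d+) \(release\), REVISION: (\d+)\.(\d+)", text)
    if not match:
        return None
    return ".".join(match.groups())


def tag_matches_host(image_tag, host_l4t):
    """True if an 'rX.Y.Z'-style tag shares the host's L4T major.minor."""
    match = re.search(r"r(\d+)\.(\d+)", image_tag)
    if not match or host_l4t is None:
        return False
    return list(match.groups()) == host_l4t.split(".")[:2]


# Sample content; on a real Jetson, read the file from the host instead.
sample = "# R35 (release), REVISION: 4.1, GCID: 33958178, BOARD: t186ref, EABI: aarch64"
host = parse_l4t_release(sample)
print(host, tag_matches_host("l4t-base:r35.4.1", host))  # prints: 35.4.1 True
```

A check like this fits naturally in a deployment script, so that a mismatched image is rejected before it ever starts.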

5. Real-Time Constraints and Containers

Containers introduce CPU isolation via cgroups.

This affects:

Scheduling priority

CPU pinning

Latency jitter

In robotics applications, real-time feedback is essential for keeping the system responsive, so latency and jitter must be minimized when using containers.

5.1 Real-Time Scheduling

Robotics often uses:

SCHED_FIFO

SCHED_RR

Inside Docker, you must grant:

--cap-add=SYS_NICE

Otherwise:

Requests for real-time priority are rejected with a permission error, which goes unnoticed if the code ignores the return value

Your control loop becomes best-effort

This may work in the lab — and fail under load.
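A quick way to find out whether the container is actually allowed to use a real-time policy is to request one at startup and look at the result. The sketch below is Linux-only and uses Python's standard os module. The function name and the priority value of 10 are illustrative assumptions.

```python
import os


def can_use_sched_fifo(priority=10):
    """Try to switch this process to SCHED_FIFO, then restore the old policy."""
    pid = 0  # 0 means "the calling process"
    old_policy = os.sched_getscheduler(pid)
    old_param = os.sched_getparam(pid)
    try:
        os.sched_setscheduler(pid, os.SCHED_FIFO, os.sched_param(priority))
    except OSError:
        # Typically EPERM: no CAP_SYS_NICE and no suitable rtprio limit
        return False
    os.sched_setscheduler(pid, old_policy, old_param)
    return True


print("SCHED_FIFO available:", can_use_sched_fifo())
```

Failing loudly at startup when this returns False is usually preferable to discovering a best-effort control loop in the field.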

5.2 Shared Memory and ROS 2 DDS

ROS 2 DDS can use shared memory transport.

Without:

--ipc=host

you may see:

Increased latency

Missed messages

Slow discovery

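Docker's default bridge networking also places each container in its own network namespace, which typically blocks the multicast traffic that DDS auto-discovery relies on. Running with --network host is the usual workaround.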

This is one of the most common ROS 2 container pitfalls.

5.3 CPU Isolation and Determinism

For performance-critical workloads:

Pin CPUs using --cpuset-cpus

Avoid overcommitting cores

Monitor latency with tools like cyclictest

Containers do not magically optimize scheduling.

They can degrade it.
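For a rough first impression of scheduling jitter, a sleep-based periodic loop can record how late each wake-up is. This is only a user-space sketch in Python with hypothetical names and default values. It measures interpreter overhead along with the scheduler, so cyclictest remains the right tool for real numbers.

```python
import time


def measure_jitter_us(period_s=0.001, iterations=1000):
    """Run a periodic loop and return (mean, max) wake-up lateness in microseconds."""
    lateness = []
    next_wake = time.monotonic() + period_s
    for _ in range(iterations):
        delay = next_wake - time.monotonic()
        if delay > 0:
            time.sleep(delay)
        # How far past the intended wake-up time we actually resumed
        lateness.append((time.monotonic() - next_wake) * 1e6)
        next_wake += period_s
    return sum(lateness) / len(lateness), max(lateness)


mean_us, max_us = measure_jitter_us()
print(f"mean lateness {mean_us:.0f} us, worst case {max_us:.0f} us")
```

Comparing the worst case on the host, in a default container, and in a container pinned with --cpuset-cpus shows whether the container configuration is costing you determinism.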

6. Volume Mounts and Permission Architecture

Most robotics Docker pain comes from filesystems.

Robots generate:

Logs

rosbag files

Calibration data

Model weights

Configuration files

Much of this data originates from sensors, cameras, and other networked devices, and it integrates diverse inputs into a picture of the robot's environment. The data collected during operation is what you later rely on for analysis, system improvement, and continuous learning.

If you store these inside the container filesystem:

They disappear when the container is removed.

Use bind mounts (or named volumes) for anything that must outlive the container.

6.1 UID/GID Mismatch

If the host user is:

uid=1000

and the container user is:

uid=1001

then files on mounted volumes end up owned by the wrong user, and reads or writes fail with permission errors.

Solutions:

Match UID/GID at build time

Use --user $(id -u):$(id -g)

Create a container user with the same IDs

Ignoring this results in hours of chmod chaos.
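A mismatch is easy to detect before it causes trouble. The sketch below, with a hypothetical helper name, compares the owner of a mounted path with the IDs of the current process and reports whether the path is writable. It uses a temporary directory as a stand-in for a real mount point.

```python
import os
import tempfile


def describe_ownership(path):
    """Report who owns `path`, who we are, and whether we can write to it."""
    st = os.stat(path)
    return {
        "path_owner": (st.st_uid, st.st_gid),
        "process_ids": (os.geteuid(), os.getegid()),
        "writable": os.access(path, os.W_OK),
    }


# Stand-in for a bind-mounted directory such as a log or rosbag folder.
with tempfile.TemporaryDirectory() as workdir:
    info = describe_ownership(workdir)
    print(info)
```

Running this inside the container against each mounted path at startup turns a confusing write failure into an explicit ownership report.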

7. Hardware Access Inside Containers

Robots depend on /dev.

Docker exposes only a minimal /dev to the container unless configured otherwise.

7.1 USB Devices

Expose explicitly:

--device /dev/ttyUSB0

Avoid --privileged unless necessary.

--privileged disables most isolation (it grants all capabilities and access to every host device) and should not be the default.

7.2 GPIO on Jetson

Expose:

--device /dev/gpiochip0

If using libgpiod, verify that the device is present inside the container.

GPIO debugging inside Docker is subtle because permission errors look like runtime errors.
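Separating "the device is not there" from "the device is there but not accessible" removes most of that ambiguity. The sketch below uses a hypothetical helper and the device paths from the examples above. Note that os.access only checks file permissions, so a device blocked by other container restrictions may still fail on open.

```python
import os


def device_status(path):
    """Classify a device node as 'missing', 'no-permission', or 'ok'."""
    if not os.path.exists(path):
        return "missing"  # not passed through with --device, or absent on the host
    if not os.access(path, os.R_OK | os.W_OK):
        return "no-permission"  # node is visible but this user cannot read and write it
    return "ok"


for node in ("/dev/ttyUSB0", "/dev/gpiochip0"):
    print(node, device_status(node))
```

A "missing" result points at the docker run flags, while "no-permission" points at users, groups, or udev rules.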

7.3 Audio Systems

For speech systems:

Mount the PulseAudio socket:

-v /run/user/1000/pulse:/run/user/1000/pulse

Export PULSE_SERVER so that it points at the mounted socket, for example:

PULSE_SERVER=unix:/run/user/1000/pulse/native

Audio inside containers is notoriously brittle.

Test incrementally.

8. Debugging Strategy When Everything Breaks

When a robotic container fails, do not panic.

Follow a layered approach.

Verify hardware visibility (ls /dev)

Verify GPU availability (tegrastats)

Verify permissions

Verify network namespace

Verify ROS_DOMAIN_ID

Verify shared memory

Benchmark latency

Never debug all layers simultaneously.

Peel back abstraction layers.
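The layered approach can be encoded as an ordered list of checks that stops at the first failure. The sketch below shows the pattern with two example checks. The function names and the 32 MiB threshold are illustrative assumptions, and a real checklist would add device, GPU, and latency checks in the order given above.

```python
import os
import shutil


def check_ros_domain_id():
    """ROS_DOMAIN_ID, if set, must be an integer; unset means the default domain 0."""
    value = os.environ.get("ROS_DOMAIN_ID", "0")
    return value.isdigit(), f"ROS_DOMAIN_ID={value}"


def check_shared_memory(min_free_mib=32):
    """Confirm /dev/shm exists and has some free space (threshold is arbitrary)."""
    if not os.path.isdir("/dev/shm"):
        return False, "/dev/shm missing"
    free_mib = shutil.disk_usage("/dev/shm").free // (1024 * 1024)
    return free_mib >= min_free_mib, f"/dev/shm free: {free_mib} MiB"


def run_layered(checks):
    """Run checks in order and stop at the first failure; return its name or None."""
    for name, check in checks:
        ok, detail = check()
        print(f"[{'ok' if ok else 'FAIL'}] {name}: {detail}")
        if not ok:
            return name
    return None


first_failure = run_layered([
    ("ROS domain", check_ros_domain_id),
    ("shared memory", check_shared_memory),
])
```

Stopping at the first failing layer keeps attention on one abstraction at a time instead of on a wall of unrelated errors.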

Note: Human oversight is essential during troubleshooting. Someone should supervise and validate the robot's behavior at every step so that debugging never compromises safety or reliability.

9. A Production-Grade Architecture Strategy

Reference architectures for physical AI and autonomous systems offer useful guidance here. They describe standardized models and best practices for integrating hardware and software so that a system can operate, learn, and adapt in real-world environments. A physical AI system combines sensors, actuators, spatial data, and real-time input to understand, reason about, and interact with the physical world, which demands close coordination between physical components and computational elements.

A sane robotics container architecture might look like:

Host OS

Kernel drivers

Firmware flashing tools

Hardware-level services

Container A

- AI inference: runs inference tasks, which may include AI agents and physical AI models used for real-world decision-making and autonomous interaction.

Container B

- Perception

Perception containers let robots interpret and interact with their environment. They matter especially for industrial robots, which must process sensor data and make real-time decisions in manufacturing and automation settings. This is where integrating AI models with mechanical systems and control mechanisms lets robots move beyond rule-based behavior toward richer understanding and adaptability.

Host or RT container

Control loops

Motor interfaces

These control loops are what let robots manipulate objects and operate autonomously in real-world environments, which is why they stay closest to the hardware.

Separation by responsibility, not ideology.
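To make the split concrete, the sketch below assembles docker run argument lists for the inference and perception containers from the flags discussed in this article. The helper, image names, and paths are hypothetical and only illustrate how each container gets the access it needs and nothing more.

```python
def docker_run_args(image, *, gpu=False, realtime=False, cpus=None,
                    devices=(), mounts=(), user=None, host_ipc=False):
    """Assemble a `docker run` argument list from explicit, per-container needs."""
    args = ["docker", "run", "--rm"]
    if gpu:
        args += ["--runtime", "nvidia"]
    if realtime:
        args += ["--cap-add", "SYS_NICE"]
    if cpus:
        args += ["--cpuset-cpus", cpus]
    if host_ipc:
        args += ["--ipc", "host"]
    if user:
        args += ["--user", user]
    for dev in devices:
        args += ["--device", dev]
    for host_path, container_path in mounts:
        args += ["-v", f"{host_path}:{container_path}"]
    return args + [image]


# Hypothetical image names and paths, for illustration only.
inference = docker_run_args("robot/inference:1.4.0", gpu=True,
                            mounts=[("/data/models", "/models")])
perception = docker_run_args("robot/perception:2.1.0", gpu=True, host_ipc=True,
                             cpus="2-3", devices=["/dev/video0"])
print(" ".join(inference))
print(" ".join(perception))
```

Because every capability is an explicit argument, --privileged never appears, and a review of the call sites shows exactly which container touches which hardware.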

10. Anti-Patterns to Avoid

❌ One giant monolithic container

❌ Ignoring real-time constraints

❌ Random CUDA versions

❌ --privileged everywhere

❌ Storing persistent state inside the container

❌ Not benchmarking latency

Containers simplify deployment.

They do not eliminate systems engineering. Best practices for robotics containerization continue to evolve, and with them come more advanced and adaptable robotic systems.

Conclusion

Containerization in robotics is powerful — but robotics is not SaaS.

Robots are cyber-physical systems governed by:

Physics

Timing

Determinism

Safety

Physical AI is transforming robotics, enabling autonomous machines such as mobile robots and self-driving cars to work with digital twins and real-world sensor data.

Containers help with:

Reproducibility

Dependency isolation

Deployment consistency

They require discipline for:

Real-time systems

Hardware access

GPU integration

Permissions

Understanding spatial relationships, navigating uneven terrain, and adapting to complex environments and weather conditions are critical challenges addressed by integrating the digital and physical worlds in modern robotics.

The engineers who succeed will not be those who containerize everything blindly.

They will be those who understand:

Where isolation helps

Where determinism matters

Where physics overrides abstraction

Containers are tools.

Use them with architectural intent.

Not faith.