Google publicly announced Gemma 4 on April 2, 2026, while Google’s own Gemma release log lists March 31, 2026 as the checkpoint release date. The date discrepancy is minor. The strategic point is not.

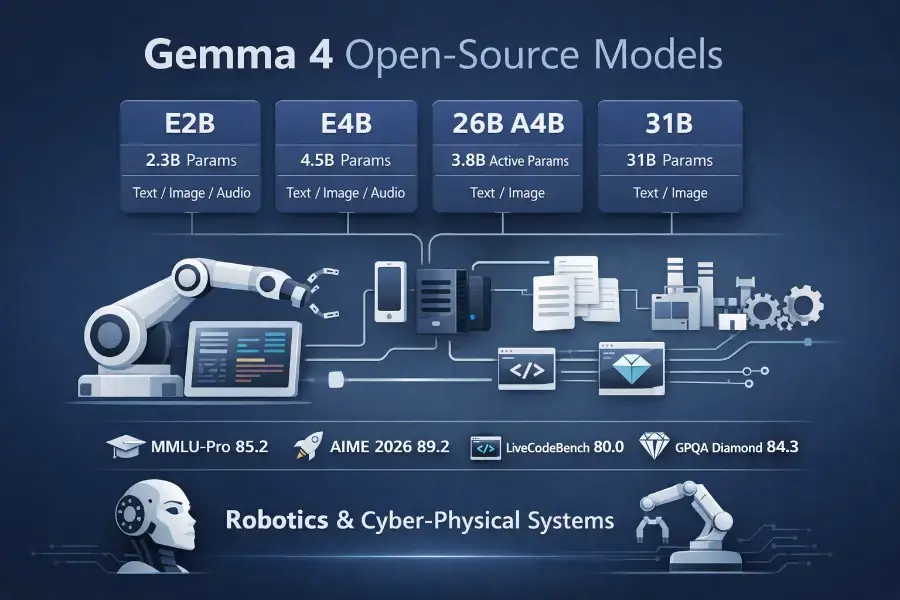

Gemma 4 is a new family of Apache 2.0-licensed open-weight multimodal models spanning E2B, E4B, 26B A4B, and 31B variants, with context windows up to 256K tokens and a clear emphasis on local deployment, agentic workflows, and edge execution. This is not just another model drop. It is one of the clearest signals yet that high-end open AI is moving toward systems that run on your hardware, inside your products, and eventually inside real machines.

What makes this release important is not only the benchmark story. It is the coherence of the whole package: permissive licensing, real multimodality, long context, native tool use, deployment paths from phones to workstations, and a model family that actually looks designed around inference constraints instead of benchmark theater. If you care about local coding agents, private enterprise copilots, multimodal assistants, or AI embedded into cyber-physical systems, Gemma 4 is worth paying attention to.

TL;DR

- Gemma 4 is Google DeepMind’s newest family of open-weight multimodal models, released under Apache 2.0, with four sizes: E2B, E4B, 26B A4B, and 31B.

- The most technically interesting checkpoint may be the 26B A4B MoE model, because it gets close to the 31B flagship while activating only 3.8B parameters at inference.

- The small models are built for on-device deployment, with audio support, variable-resolution vision, and 128K context; the larger models target local-first workstations and advanced reasoning workloads.

- Gemma 4 is especially compelling for local coding, document intelligence, private enterprise AI, and edge assistants.

- For robotics and cyber-physical systems, Gemma 4 is best understood as a semantic planning and tool-use layer, not as a low-level control model.

Why this release matters

I have been writing for months that the interesting future of AI is not just “better chat,” but AI embedded into real systems with timing constraints, hardware limits, privacy boundaries, and operational consequences. That is the direction I described in Physical AI Explained and in my piece on the real role of LLMs in cyber-physical systems. Gemma 4 fits that trajectory unusually well.

Google is explicitly positioning the E2B and E4B models for phones, Raspberry Pi, and edge hardware, while the 26B and 31B variants are pitched for advanced reasoning, IDEs, coding assistants, and agentic workflows on consumer GPUs. In other words, the product thesis is no longer “here is a huge open model, now figure out how to shrink it.” The thesis is “here is a family designed from the start to live across devices, workstations, and embedded systems.” That is a much more important shift than it may look at first glance.

What Gemma 4 actually is

At the family level, Gemma 4 is more interesting than a single flagship. According to Google’s official Gemma 4 model card, the lineup looks like this:

| Model | Architecture | Effective / active params | Context | Modalities | Best fit |

|---|---|---|---|---|---|

| Gemma 4 E2B | Dense + PLE | 2.3B effective | 128K | Text, image, audio | Mobile, edge, offline assistants |

| Gemma 4 E4B | Dense + PLE | 4.5B effective | 128K | Text, image, audio | Stronger on-device apps, multimodal edge workflows |

| Gemma 4 26B A4B | MoE | 25.2B total / 3.8B active | 256K | Text, image | Efficient workstation/server inference |

| Gemma 4 31B | Dense | 30.7B | 256K | Text, image | Highest-quality local reasoning and coding |

That design split matters. The 31B model is the dense flagship. The 26B A4B model is the efficiency play. The E2B and E4B models are not “small versions” in the lazy sense. They are on-device models built with architectural decisions that specifically optimize local deployment.

The family is multimodal, but not symmetrically so. All variants take text and image input and generate text output. The small E2B and E4B models also support audio. All models can process video as sequences of frames. Google states that audio input is capped at 30 seconds and video at 60 seconds, assuming one frame per second. So yes, Gemma 4 is multimodal, but in a bounded, engineering-oriented way rather than in a maximalist “universal omni model” way.

The licensing shift is also strategic. Google’s Open Source blog announcement describes Gemma 4 as the first Gemmaverse release under the OSI-approved Apache 2.0 license. That removes a lot of downstream hesitation for teams that care about licensing clarity, governance, customization, and local operation.

The real story is architecture, not just size

Technically, Gemma 4 is interesting because it looks like a model family designed by people who care about inference physics.

1) Hybrid attention for long context without full quadratic pain

Gemma 4 alternates local sliding-window attention and full global attention. In the official model card, Google says the small dense models use 512-token sliding windows, while the 31B dense model and the 26B A4B MoE model use 1024-token sliding windows. The family still reaches 128K context on E2B and E4B and 256K context on 26B A4B and 31B.

That is the right kind of compromise for deployable long-context models. Full global attention everywhere is expensive. Purely local attention can break long-range reasoning. Alternating local and global layers is the kind of practical systems choice that often matters more than headline parameter count.

2) Per-Layer Embeddings make the small models more interesting than they look

The “E” in E2B and E4B stands for effective parameters. Google explains that the smaller models use Per-Layer Embeddings (PLE), where each decoder layer gets its own small embedding for every token. That helps them pack more useful representation capacity into an on-device footprint. It is also why Google distinguishes between the effective parameter count and the larger total including embeddings.

This matters because small local models usually fail for one of two reasons: not enough capacity, or not enough efficiency. PLE is Google’s answer to that trade-off. It is one of the key reasons E2B and E4B should be taken more seriously than a generic “2B model” or “4B model” label would suggest.

3) The 26B A4B model is probably the sweet spot

The 26B A4B model uses a Mixture-of-Experts design with 25.2B total parameters but only 3.8B active at inference, with 8 active experts, 128 total experts, and one shared expert. This is the economically interesting checkpoint in the family.

In practical terms, it means Gemma 4 has a model that behaves much closer to a frontier-adjacent workstation model than its runtime cost would suggest. If the 31B model is the quality ceiling, the 26B A4B model is the one many builders will actually deploy.

4) Variable-resolution vision is a real product feature, not a checkbox

Gemma 4 supports variable aspect ratios and configurable visual token budgets: 70, 140, 280, 560, and 1120 tokens per image. Google explicitly recommends lower budgets for classification, captioning, and video understanding, and higher budgets for OCR, small text, and document parsing.

That kind of knob matters in production. You do not always want the best possible visual fidelity. Sometimes you want faster inference, lower memory pressure, or the ability to process many frames. Gemma 4 treats multimodality as a tunable systems parameter, which is exactly the right design.

5) Native tool use and thinking mode make it easier to build agents

Gemma 4 includes native function calling and structured tool use. Google also exposes a configurable reasoning mode through its prompt format, with standard system, assistant, and user roles and an enable_thinking option in the Hugging Face flow. The result is a model family that fits modern agent stacks much more naturally than older prompt-only open models.

That matters if you are building not just chat interfaces, but real orchestration systems. It is the same design direction I described in my post on building a practical multi-agent LLM architecture: once you move from one-shot prompts to tool-mediated workflows, clean interfaces matter as much as raw model quality.

Benchmarks: strong, but read them correctly

According to Google’s official benchmark table, Gemma 4 31B scores:

- 85.2 on MMLU-Pro

- 89.2 on AIME 2026

- 80.0 on LiveCodeBench v6

- 84.3 on GPQA Diamond

- 76.9 on MMMU Pro

The 26B A4B model is close enough to be strategically significant:

- 82.6 on MMLU-Pro

- 88.3 on AIME 2026

- 77.1 on LiveCodeBench v6

- 82.3 on GPQA Diamond

- 73.8 on MMMU Pro

The smaller models are obviously not in the same tier, but they are better than most people intuitively expect from models designed for edge deployment.

Google also states that, as of April 1–2, 2026, Gemma 4’s larger checkpoints were highly placed on Arena AI’s text leaderboard, and that the Gemma ecosystem has passed 400 million downloads and 100,000 variants. Those numbers matter because they show Gemma is no longer a side project. It is a real open ecosystem with deployment momentum.

Still, benchmark charts should be read with discipline. The right conclusion is not “Gemma 4 dominates every open model.” It does not. The right conclusion is that Gemma 4 sits on a very strong Pareto frontier for quality, local deployability, multimodal utility, and licensing clarity.

Where Gemma 4 sits in the current state of the art

To understand Gemma 4, you have to compare it to the current open-model frontier rather than to generic 2024-era open models.

DeepSeek-R1: still a major reference point for open reasoning

DeepSeek-R1 remains one of the most important open reasoning releases in the market. Its published specs list 671B total parameters, 37B activated parameters, and 128K context. More importantly, DeepSeek’s public materials frame R1 around a training recipe that combines reinforcement learning and supervised fine-tuning stages for reasoning-heavy performance.

That still makes DeepSeek-R1 a defining reference point on reasoning. Gemma 4 is not trying to beat DeepSeek-R1 by sheer scale. It is trying to win on a different axis: intelligence per parameter and realistic deployment.

Qwen3.5: a stronger push toward agent-native multimodality

Alibaba’s official Qwen3.5 announcement and the Qwen3.5 GitHub repository position the flagship Qwen3.5-397B-A17B as a native multimodal model for real-world agents. Public model materials also emphasize 201 languages and dialects and a strong agentic/coding profile.

I recently wrote about that release in my breakdown of Qwen 3.5 VLM. The comparison is useful: Qwen3.5 feels like a larger, more explicitly “agent-native” frontier play; Gemma 4 feels like a more operationally deployable family across edge and local hardware.

Llama 4: context-length ambition and ecosystem gravity

Meta’s official Llama 4 materials position Scout around multimodality, strong efficiency, and a 10M-token context window. Whether or not context length is the decisive feature for most teams, it shows where Meta is pushing its open ecosystem: large multimodal models with huge context ambitions.

Against that backdrop, Gemma 4 looks deliberately more balanced. It is not trying to be the biggest context monster. It is trying to be the model family you can actually run across devices and local workflows.

The best Gemma 4 use cases

1) Local coding and software agents

This is the most obvious one. Google explicitly positions Gemma 4 for IDEs, coding assistants, and agentic workflows. The ecosystem support is also unusually strong on day one: Google highlights compatibility across Hugging Face, Transformers, TRL, Transformers.js, vLLM, llama.cpp, MLX, Ollama, NVIDIA NIM, SGLang, Keras, and more.

That makes Gemma 4 especially interesting for developers who want private code generation, local bug triage, repo search, and structured tool-calling loops without routing everything through a hosted API.

2) Document intelligence and multimodal knowledge work

Gemma 4 is also a strong fit for contracts, PDFs, dashboards, internal wikis, scanned documents, screenshots, charts, and operational documentation. Variable image resolution, OCR-friendly token budgets, long context, and multimodal prompting make it a practical document model rather than just a chat model with image input.

For enterprise teams, this is a bigger deal than it sounds. Private document intelligence is one of the clearest reasons to prefer open local models over API-only systems.

3) On-device assistants and edge AI

This is where Gemma 4 may end up being most differentiated. Google’s edge announcement explicitly frames Gemma 4 as a family for on-device AI, with multi-step planning, autonomous action, offline code generation, and audio-visual processing on local hardware.

That opens obvious product categories:

- offline mobile assistants

- privacy-preserving voice interfaces

- camera-grounded field tools

- industrial handheld copilots

- smart-home assistants

- accessibility tools

- embedded operator support systems

It is also why Gemma 4 belongs in the same conversation as my guide to turning a Jetson Orin Nano into a physical AI agent with OpenClaw. The point is not that Gemma 4 is the only model for edge AI. The point is that it meaningfully lowers the gap between “interesting release” and “deployable local component.”

4) Sovereign and local-first AI

Apache 2.0, local execution, broad tooling, and multilingual support make Gemma 4 naturally attractive for organizations that care about sovereignty, compliance, and operational control. Government systems, regulated industries, defense-adjacent workflows, and privacy-sensitive internal tools are all obvious candidates.

In that sense, Gemma 4 is not only a model release. It is also a licensing and governance release.

Gemma 4 for robotics and cyber-physical systems

This is where hype needs to become systems thinking.

Gemma 4 is not a vision-language-action model. Its interface is multimodal input to text output. That makes it fundamentally different from systems like RT-2, which map vision and language into robot actions, or OpenVLA, which is a 7B open-source VLA pretrained on 970k robot episodes for generalist manipulation.

So, if we are being precise, Gemma 4 is not a robot control model in the same category as RT-2 or OpenVLA.

But that does not make it irrelevant to robotics. Quite the opposite.

If you have read my posts on world models in robotics and on vision-language-action models, you already know that the most credible architecture for embodied AI is becoming increasingly layered:

- perception and state estimation

- semantic reasoning and task interpretation

- planning and tool selection

- action generation and control

- safety, verification, and recovery

Gemma 4 fits naturally into layers 2 and 3.

It can read manuals, inspect operator interfaces, interpret natural-language commands, summarize camera-grounded context, extract structured steps from maintenance procedures, classify anomalies from text-plus-image evidence, and output tool calls or machine-readable plans. That is a very valuable role in robotics.

In practice, the strongest robotics use cases are likely to be:

- operator copilots for robot setup, debugging, and procedure assistance

- maintenance assistants that can read labels, panels, manuals, and screenshots

- GUI and HMI understanding for industrial tools and field workflows

- mission planning from natural language into structured intermediate plans

- ROS / ROS 2 scaffolding for code generation, launch-file assistance, and interface glue

- multimodal troubleshooting using logs, images, documentation, and operator notes together

That is also why I would place Gemma 4 above a stack that looks more like ROS 2 architecture patterns that scale, combined with strong sensor fusion and disciplined real-time Linux. The semantic layer can become much smarter. The rest of the system still has to remain physically credible.

The same is true for cyber-physical systems more broadly. Gemma 4 is promising for:

- documentation-grounded reasoning

- procedure checking

- anomaly triage

- log and screenshot interpretation

- operator support

- maintenance planning

- multimodal supervisory tooling

What it is not is a substitute for control engineering. Once you drop from semantic planning into actuators, timing, control loops, and real-world uncertainty, you are back in the territory of things like PID vs MPC, not just bigger models.

The limits are real

1) Knowledge freshness is still a limitation

Google states that Gemma 4’s pretraining data cutoff is January 2025. So despite the release being new, the model’s built-in world knowledge is not current by default. That means retrieval, tool use, browsing, or external memory still matter for dynamic domains.

2) Multimodality is useful, but still bounded

Audio is only supported on E2B and E4B, not on 26B A4B or 31B. Audio is capped at 30 seconds. Video is capped at 60 seconds. That is enough for many real workflows, but not enough to market Gemma 4 as a universal real-time omni system.

3) Multimodal reasoning is not physical grounding

A model that can read a control panel or summarize a camera scene is not the same thing as a model that understands torque limits, actuator saturation, contact dynamics, calibration drift, or hard timing guarantees.

This is why the future of real robotic systems is hybrid, not monolithic. And it is why the strongest architectures will combine LLMs, world models, VLA components, classical planning, and control-theoretic layers rather than pretending one foundation model replaces all of them.

4) Safety is improved, not solved

Google says Gemma 4 underwent the same security and safety evaluation process as Gemini-class infrastructure. That is good news. But it does not eliminate the need for application-level safeguards, restricted tool access, observability, evaluation, and domain-specific testing. The more physical or high-stakes the system, the less acceptable “the model usually gets it right” becomes.

My take

Gemma 4 does not matter because it is the biggest open-model release on the market. It matters because it is coherent.

The architecture is built around deployability. The model family spans edge devices, workstations, and local servers. The multimodal stack is practical. The licensing finally makes broad downstream use much cleaner. The ecosystem support is already strong. And the 26B A4B checkpoint, in particular, looks like exactly the kind of model builders will actually run rather than just admire.

In the current open-model landscape, DeepSeek-R1 is still a major reasoning reference point. Qwen3.5 is pushing hard toward native multimodal agents. Llama 4 is pushing context length and ecosystem scale. Gemma 4 is taking a different route: frontier-adjacent capability with unusually strong deployability.

For teams building local agents, code copilots, multimodal document systems, robotics assistants, and cyber-physical support layers, that may be the trade-off that matters most.

Key takeaways

- Gemma 4 is one of the strongest open-weight releases so far for real local deployment.

- The 26B A4B model may be the most important checkpoint in practice.

- The small models are genuinely interesting because of PLE, long context, and audio support.

- Gemma 4 is a strong fit for coding, document intelligence, and edge assistants.

- In robotics and CPS, Gemma 4 belongs in the semantic planning and tool-use layer, not the low-level control loop.

FAQ

What is Gemma 4 in one sentence?

Gemma 4 is Google DeepMind’s newest family of Apache 2.0-licensed open-weight multimodal models, designed for local deployment, long context, and agentic workflows.

Is Gemma 4 actually open source?

Google released Gemma 4 under Apache 2.0 and explicitly describes it as the first Gemmaverse release under an OSI-approved Apache license. In practice, the most precise description is “Apache-licensed open-weight models.”

Which Gemma 4 model is the most interesting?

For many real deployments, the 26B A4B model looks like the most interesting checkpoint because it gets close to the 31B flagship on several benchmarks while activating only 3.8B parameters at inference.

Is Gemma 4 good for local coding agents?

Yes. Google is explicitly positioning the larger Gemma 4 models for coding assistants and agentic workflows, with broad day-one support across the main open-source inference and fine-tuning stack.

Is Gemma 4 a robotics model?

Not in the strict sense. Gemma 4 generates text. Models like RT-2 and OpenVLA are built to map multimodal observations into robot actions. Gemma 4 fits better as a planning, interpretation, and tool-use layer inside a robotics stack.

What are Gemma 4’s biggest limitations?

The main limitations are a January 2025 knowledge cutoff, audio support only on the small models, short audio/video input limits, and the fact that multimodal reasoning does not equal physical grounding or safe autonomous control.

Sources and further reading

Official Gemma 4 sources

- Gemma 4 model card — Google AI for Developers

- Gemma releases — Google AI for Developers

- Gemma 4: Byte for byte, the most capable open models — Google Blog

- Gemma 4 — Google DeepMind

- Gemma 4: Expanding the Gemmaverse with Apache 2.0 — Google Open Source Blog

- Gemma 4: Frontier multimodal intelligence on device — Hugging Face

- Bring state-of-the-art agentic skills to the edge with Gemma 4 — Google Developers Blog

Open-model landscape references

- DeepSeek-R1 — official GitHub repository

- Qwen3.5 — official announcement

- Qwen3.5 — official GitHub repository

- Llama 4 — official model page

Robotics references

Related reading on this blog

- Physical AI Explained

- The Real Role of LLMs in a Cyber-Physical System

- Qwen 3.5 VLM just dropped — and it’s a very “agent-native” kind of multimodal

- How I Built an AI Agent Architecture

- How to Install OpenClaw on NVIDIA Jetson Orin Nano

- World Models in Robotics

- How Vision-Language-Action Models Are Revolutionizing Robotics

- ROS 2 Architecture Patterns That Scale

- What Is Sensor Fusion in Robotics?

- Real-Time Linux for Robotics

- PID vs MPC in Robotics