Autonomous navigation is where robotics starts to feel real.

You can have cameras, sensor fusion, TF trees, microcontrollers, and perfectly tuned PID controllers… but until your robot can build a map and navigate autonomously in the real world, you still don’t have a complete robotic system.

That’s exactly where the combination of:

- ROS 2

- Nav2

- SLAM Toolbox

- Jetson Orin Nano

becomes incredibly powerful.

In this article, I’ll explain how these components work together to create a full navigation stack on embedded hardware, while staying practical and realistic for real robots.

TL;DR

- slam_toolbox builds and maintains a 2D map in ROS 2

- Nav2 performs localization, planning, obstacle avoidance, and autonomous navigation

- Jetson Orin Nano is powerful enough for full embedded navigation stacks

- Behavior Trees are the orchestration layer behind Nav2

- TF2 and sensor fusion are critical for stable navigation

What Is Nav2?

Definition

Nav2 (Navigation2) is the official ROS 2 navigation framework used for autonomous robot navigation, path planning, obstacle avoidance, and recovery behaviors.

Nav2 is essentially the successor to the ROS 1 navigation stack, redesigned for:

- ROS 2 architecture

- Lifecycle nodes

- Real-time-friendly execution

- Modular robotics systems

Official documentation:

→ https://docs.nav2.org/

What Is SLAM Toolbox?

Definition

SLAM Toolbox is a ROS 2 package that performs 2D Simultaneous Localization and Mapping (SLAM), allowing a robot to build and update maps while estimating its own position.

It supports:

- Online asynchronous SLAM

- Localization mode

- Lifelong mapping

- Pose graph optimization

Official documentation:

→ https://github.com/SteveMacenski/slam_toolbox

Why Nav2 + SLAM Toolbox Works So Well

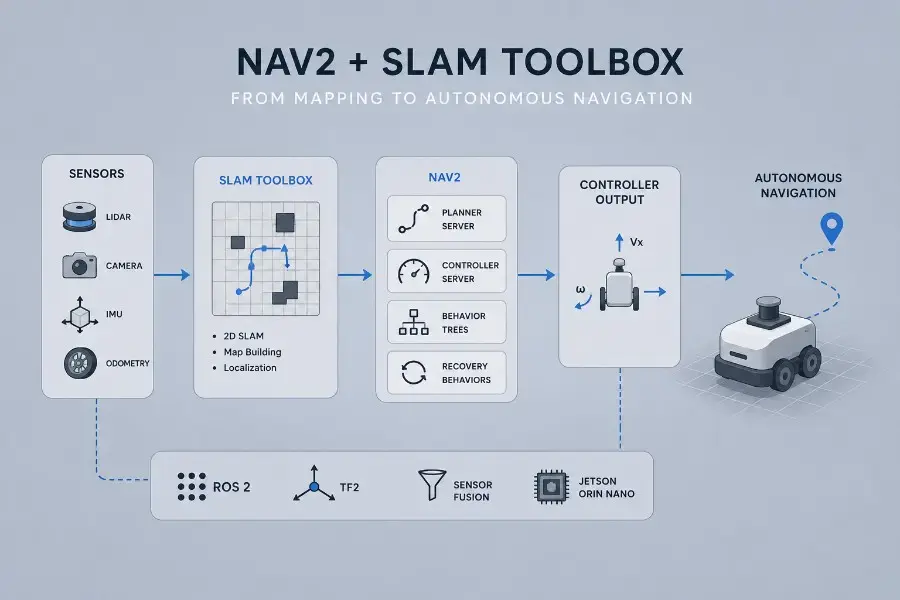

Together, they provide the complete autonomy pipeline:

Sensors → TF2 → SLAM Toolbox → Map → Nav2 → Robot Motion

High-Level Navigation Architecture

A typical embedded robotics architecture on Jetson Orin Nano looks like this:

Lidar / Camera / IMU → Sensor Fusion → TF2 → SLAM Toolbox → Map → Nav2 → Behavior Trees → Controller Server → ros2_control → Motors

This is where all the previous robotics concepts finally connect together.

Why Jetson Orin Nano Is Interesting for Navigation

Edge AI + Robotics in One Device

Jetson Orin Nano provides:

- GPU acceleration

- ARM efficiency

- Enough compute for SLAM + Nav2 + perception

- Low power consumption

This allows you to run:

- Navigation

- AI perception

- Sensor fusion

- Local inference

on a single embedded computer.

SLAM Toolbox Explained Simply

What SLAM Actually Does

SLAM solves two problems simultaneously:

- Build a map

- Estimate robot position inside that map

This is difficult because:

- Sensors are noisy

- Odometry drifts

- The environment changes

Core Concepts in SLAM Toolbox

Occupancy Grid Mapping

The world is represented as a 2D grid:

- Free space

- Obstacles

- Unknown areas
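To make the three cell states concrete, here is a minimal sketch of a 2D occupancy grid in plain Python. The cell values follow the `nav_msgs/OccupancyGrid` convention (-1 unknown, 0 free, 100 occupied); the class name, its methods, and the 5 cm resolution are my own illustrative assumptions, not SLAM Toolbox code.

```python
import math

# Cell values follow the nav_msgs/OccupancyGrid convention.
UNKNOWN, FREE, OCCUPIED = -1, 0, 100


class OccupancyGrid2D:
    """Row-major 2D grid. Class and method names are illustrative only."""

    def __init__(self, width, height, resolution, origin_x=0.0, origin_y=0.0):
        self.width = width            # number of cells along x
        self.height = height          # number of cells along y
        self.resolution = resolution  # metres per cell
        self.origin_x = origin_x      # world position of cell (0, 0)
        self.origin_y = origin_y
        self.data = [UNKNOWN] * (width * height)  # everything starts unknown

    def world_to_cell(self, x, y):
        """Convert world coordinates (metres) to (col, row) cell indices."""
        col = math.floor((x - self.origin_x) / self.resolution)
        row = math.floor((y - self.origin_y) / self.resolution)
        if not (0 <= col < self.width and 0 <= row < self.height):
            raise ValueError("point lies outside the map")
        return col, row

    def set_cell(self, x, y, value):
        col, row = self.world_to_cell(x, y)
        self.data[row * self.width + col] = value

    def get_cell(self, x, y):
        col, row = self.world_to_cell(x, y)
        return self.data[row * self.width + col]


grid = OccupancyGrid2D(width=100, height=100, resolution=0.05)  # 5 m x 5 m
grid.set_cell(1.02, 2.03, OCCUPIED)  # a lidar hit
grid.set_cell(0.52, 0.52, FREE)      # a cell the beam passed through
```

Every cell the robot has not observed yet stays at -1, which is how a planner can tell "free" from "never seen".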

Pose Graph Optimization

SLAM Toolbox continuously optimizes robot trajectories to reduce accumulated drift.

This is one of the reasons it performs very well on real robots.
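The idea is easier to see in one dimension. The sketch below is a toy pose graph, not SLAM Toolbox's solver: poses are plain numbers on a line, the function name and the measurements are made up for illustration, and the optimizer is simple gradient descent with a step size chosen for this small graph.

```python
def optimize_pose_graph(num_poses, edges, iterations=500, step=0.1):
    """Toy 1D pose graph: minimise the sum of squared edge residuals.

    Each edge is (i, j, measured), meaning "pose j minus pose i was
    measured as `measured`". Pose 0 is held fixed at 0.0 as the anchor.
    """
    poses = [0.0] * num_poses
    for _ in range(iterations):
        grad = [0.0] * num_poses
        for i, j, measured in edges:
            residual = (poses[j] - poses[i]) - measured
            grad[j] += 2.0 * residual
            grad[i] -= 2.0 * residual
        for k in range(1, num_poses):  # pose 0 stays fixed
            poses[k] -= step * grad[k]
    return poses


edges = [
    (0, 1, 1.1),  # odometry: each step over-reports the distance
    (1, 2, 1.1),
    (2, 3, 1.1),
    (0, 3, 3.0),  # loop closure: a scan match says pose 3 is 3.0 m from pose 0
]
poses = optimize_pose_graph(4, edges)
```

Odometry alone would put the last pose at 3.3 m. With the loop closure included, the optimizer settles near 3.075 m and spreads the correction evenly across all three steps. A real pose graph does the same thing with 2D poses and scan-matching constraints.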

Online Mapping

The robot:

- Moves

- Observes environment

- Updates map continuously

This is the basis for:

- Autonomous exploration

- Dynamic environments

Navigating While Mapping

One of the most useful Nav2 workflows is:

Simultaneous Mapping + Navigation

The robot can:

- Build the map

- Navigate inside the partially known environment

- Continue updating the map

This is extremely useful for:

- Service robots

- Warehouse robots

- Exploration robots

Nav2 officially documents this workflow.

Nav2 Core Components

Nav2 is modular.

Understanding the architecture is critical.

Planner Server

Definition

The planner computes a path from the current robot pose to the goal position.

Typical planners:

- NavFn

- Smac Planner
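As a rough illustration of what "computing a path" means on a grid map, here is a minimal breadth-first search over a 4-connected grid. It is a teaching sketch under simplified assumptions (uniform cost, 0 = free, 1 = obstacle, hypothetical function name), not the algorithm of any Nav2 planner plugin.

```python
from collections import deque


def plan_path(grid, start, goal):
    """Breadth-first search on a 4-connected grid.

    grid[row][col] is 0 for free and 1 for an obstacle. Returns the list
    of (row, col) cells from start to goal, or None if no path exists.
    """
    rows, cols = len(grid), len(grid[0])
    parents = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk the parent links back to the start, then reverse.
            path = []
            while cell is not None:
                path.append(cell)
                cell = parents[cell]
            return path[::-1]
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if (0 <= nr < rows and 0 <= nc < cols
                    and grid[nr][nc] == 0 and (nr, nc) not in parents):
                parents[(nr, nc)] = cell
                queue.append((nr, nc))
    return None


world = [
    [0, 0, 0, 0],
    [1, 1, 1, 0],  # a wall with a gap on the right
    [0, 0, 0, 0],
]
path = plan_path(world, start=(0, 0), goal=(2, 0))
```

The resulting path goes around the wall through the gap at `(1, 3)`. Real planners work on a costmap with graded costs rather than a binary grid, so they prefer paths that keep some distance from obstacles.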

Controller Server

Definition

The controller computes local velocity commands to follow the path safely.

This is where:

- obstacle avoidance

- trajectory following

- local corrections

happen in real time.
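To show what a "local velocity command" looks like, here is a small sketch of one control step using the classic pure pursuit curvature formula. It covers path following only: there is no obstacle avoidance, and the function name and default speed are assumptions for illustration, not the API of a Nav2 controller plugin.

```python
import math


def follow_path_step(pose, lookahead_point, linear_speed=0.3):
    """Return (v, w) steering a differential-drive robot toward a point.

    pose is (x, y, yaw) in the map frame; lookahead_point is (x, y) on the
    path. Pure pursuit curvature: kappa = 2 * y_r / L^2, where y_r is the
    lateral offset of the point in the robot frame and L its distance.
    """
    x, y, yaw = pose
    dx, dy = lookahead_point[0] - x, lookahead_point[1] - y
    # Express the target in the robot frame (x forward, y left).
    local_x = math.cos(yaw) * dx + math.sin(yaw) * dy
    local_y = -math.sin(yaw) * dx + math.cos(yaw) * dy
    dist_sq = local_x ** 2 + local_y ** 2
    if dist_sq < 1e-9:
        return 0.0, 0.0  # already on the target: stop
    curvature = 2.0 * local_y / dist_sq
    return linear_speed, linear_speed * curvature


v, w = follow_path_step(pose=(0.0, 0.0, 0.0), lookahead_point=(1.0, 1.0))
```

A target to the left of the robot yields a positive angular velocity (counter-clockwise), and a target straight ahead yields zero. The controller server runs a computation of this kind at a fixed rate and publishes the result as a velocity command.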

Recovery Behaviors

If navigation fails, Nav2 can:

- Clear costmaps

- Replan

- Rotate in place

- Retry navigation

This is critical for robustness.

Behavior Trees in Nav2

Definition

Behavior Trees are hierarchical decision structures used by Nav2 to orchestrate navigation behaviors.

Official docs:

→ https://docs.nav2.org/behavior_trees/

Why Behavior Trees Matter

Instead of hardcoded logic:

```python
if obstacle:
    replan()
```

Nav2 uses modular trees:

```text
Navigate
├── Plan Path
├── Follow Path
└── Recover if Failure
```

This makes behavior:

- modular

- extensible

- maintainable
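Here is a toy behavior tree in plain Python that mirrors the structure above. It is loosely inspired by how Nav2 wraps navigation in a recovery node, but it is a simplified sketch: the class names, the `state` dictionary, and the three action functions are all hypothetical, and real trees also have a RUNNING status for long-lived actions.

```python
SUCCESS, FAILURE = "SUCCESS", "FAILURE"


class Action:
    """Leaf node wrapping a callable that returns SUCCESS or FAILURE."""

    def __init__(self, name, fn):
        self.name, self.fn = name, fn

    def tick(self):
        return self.fn()


class Sequence:
    """Ticks children in order; fails as soon as one child fails."""

    def __init__(self, *children):
        self.children = children

    def tick(self):
        for child in self.children:
            if child.tick() == FAILURE:
                return FAILURE
        return SUCCESS


class Recovery:
    """Ticks `main`; on failure runs `recovery`, then retries `main`."""

    def __init__(self, main, recovery, retries=2):
        self.main, self.recovery, self.retries = main, recovery, retries

    def tick(self):
        for attempt in range(self.retries + 1):
            if self.main.tick() == SUCCESS:
                return SUCCESS
            if attempt < self.retries and self.recovery.tick() == FAILURE:
                return FAILURE
        return FAILURE


# Hypothetical robot state, used only to drive the example.
state = {"costmap_blocked": True, "log": []}


def plan_path():
    state["log"].append("plan")
    return FAILURE if state["costmap_blocked"] else SUCCESS


def follow_path():
    state["log"].append("follow")
    return SUCCESS


def clear_costmap():
    state["log"].append("clear_costmap")
    state["costmap_blocked"] = False
    return SUCCESS


navigate = Recovery(
    main=Sequence(Action("PlanPath", plan_path), Action("FollowPath", follow_path)),
    recovery=Action("ClearCostmap", clear_costmap),
)
result = navigate.tick()
```

In this run the first planning attempt fails, the recovery clears the costmap, and the second attempt plans and follows the path. Changing the recovery strategy means swapping a node, not rewriting nested `if` statements.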

TF2 Is Critical

If TF2 is wrong:

- localization breaks

- planning fails

- navigation becomes unstable

This is one of the biggest sources of robotics debugging.

See also:

→ ROS 2 Camera Calibration, TF2, and Optical Frames
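To see why a single wrong transform breaks everything downstream, it helps to compose a transform chain by hand. The sketch below works in 2D with `(x, y, yaw)` tuples and uses the usual `map → odom → base_link → laser` frame chain. The helper functions and all numeric values are illustrative assumptions; on a robot these transforms come from SLAM, odometry, and the robot description, and TF2 does the composition for you.

```python
import math


def compose(a, b):
    """Compose two 2D transforms given as (x, y, yaw).

    If `a` is parent->child and `b` is child->grandchild, the result is
    parent->grandchild.
    """
    ax, ay, ayaw = a
    bx, by, byaw = b
    return (
        ax + math.cos(ayaw) * bx - math.sin(ayaw) * by,
        ay + math.sin(ayaw) * bx + math.cos(ayaw) * by,
        ayaw + byaw,
    )


def transform_point(t, point):
    """Express a point given in the child frame in the parent frame."""
    x, y, yaw = t
    px, py = point
    return (
        x + math.cos(yaw) * px - math.sin(yaw) * py,
        y + math.sin(yaw) * px + math.cos(yaw) * py,
    )


# Illustrative values only.
map_to_odom = (0.2, -0.1, 0.0)          # drift correction from SLAM
odom_to_base = (1.0, 2.0, math.pi / 2)  # pose from wheel odometry
base_to_laser = (0.1, 0.0, 0.0)         # lidar mounted 10 cm forward

map_to_laser = compose(compose(map_to_odom, odom_to_base), base_to_laser)
hit_in_map = transform_point(map_to_laser, (2.0, 0.0))  # 2 m in front of the lidar
```

With these numbers the lidar hit lands at roughly `(1.2, 4.0)` in the map frame. If `base_to_laser` were off by a few centimetres or rotated, every scan point would be written into the wrong map cells, which is why TF errors show up as smeared maps and unstable localization.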

Recommended Sensors

Lidar

Still the most reliable option for 2D SLAM.

Examples:

- RPLidar

- Hokuyo

- YDLidar

IMU

Improves localization stability.

Wheel Odometry

Essential for short-term motion estimation.
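For a differential-drive robot, wheel odometry boils down to integrating the distance each wheel has travelled. The following is a minimal sketch under that assumption; the function name, wheel separation, and step sizes are made up for the example.

```python
import math


def integrate_odometry(pose, d_left, d_right, wheel_separation):
    """Update (x, y, yaw) from the distance each wheel travelled (metres)."""
    x, y, yaw = pose
    d_center = (d_left + d_right) / 2.0
    d_yaw = (d_right - d_left) / wheel_separation
    # Midpoint approximation: use the average heading during the step.
    x += d_center * math.cos(yaw + d_yaw / 2.0)
    y += d_center * math.sin(yaw + d_yaw / 2.0)
    return x, y, yaw + d_yaw


pose = (0.0, 0.0, 0.0)
for _ in range(100):
    # The left encoder over-reports by 1 percent, so the estimate curves right
    # even though the real robot drives straight.
    pose = integrate_odometry(pose, d_left=0.0101, d_right=0.0100,
                              wheel_separation=0.3)
```

After only one metre of travel, the 1 percent encoder error has already produced a heading error of about 0.033 rad (roughly 2 degrees), and it keeps growing. This is the drift that SLAM and sensor fusion have to correct.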

Sensor Fusion Improves Navigation

Combining:

- IMU

- wheel odometry

- visual odometry

with EKF fusion dramatically improves map quality and localization stability.

This becomes especially important on uneven floors or slippery surfaces.
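The core of this kind of fusion can be shown with a scalar Kalman filter on heading alone. This is a deliberately reduced sketch: a real EKF tracks a full state vector with nonlinear motion models, and this version ignores angle wrap-around. The function names and the variances are illustrative assumptions.

```python
def kalman_predict(estimate, variance, delta, process_variance):
    """Propagate the state with a motion increment; uncertainty grows."""
    return estimate + delta, variance + process_variance


def kalman_update(estimate, variance, measurement, measurement_variance):
    """Blend in a measurement, weighted by relative confidence."""
    gain = variance / (variance + measurement_variance)
    new_estimate = estimate + gain * (measurement - estimate)
    new_variance = (1.0 - gain) * variance
    return new_estimate, new_variance


yaw, var = 0.0, 0.01

# Wheel odometry reports a 0.10 rad turn, but the floor is slippery,
# so the process variance is set high.
yaw, var = kalman_predict(yaw, var, delta=0.10, process_variance=0.04)

# The IMU reports a heading of 0.06 rad with a lower variance.
yaw, var = kalman_update(yaw, var, measurement=0.06, measurement_variance=0.0125)
```

The fused heading ends up near 0.068 rad, closer to the IMU than to the wheel odometry, because the filter trusts the less noisy source more. The variance after the update (about 0.01) is lower than that of either input on its own.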

Real-Time Considerations

Navigation is not purely “AI”.

It is deeply connected to:

- deterministic control

- timing constraints

- hardware latency

See:

→ /blog/ros2-control-explained-controller-manager-hardware-interfaces-real-time

and:

→ /blog/linux-real-time-robotics

Typical Navigation Pipeline

Step 1 — Sensor Drivers

Publish:

- lidar scans

- IMU data

- odometry

Step 2 — TF2

Build the transform tree.

Step 3 — SLAM Toolbox

Generate the map and localization estimate.

Step 4 — Nav2

Compute the global path and the local velocity commands that follow it.

Step 5 — ros2_control

Convert commands into motor actions.
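For a differential-drive base, this last step is a small piece of kinematics: a body velocity command (linear and angular) becomes one angular velocity per wheel. The sketch below shows the math only. The function name and the robot dimensions are assumptions for illustration; in a ros2_control setup this conversion is normally done by a controller such as `diff_drive_controller` rather than by hand.

```python
def twist_to_wheel_speeds(linear, angular, wheel_separation, wheel_radius):
    """Convert a body velocity command into wheel angular velocities (rad/s).

    linear is in m/s, angular in rad/s (positive = counter-clockwise).
    Returns (left, right).
    """
    v_left = linear - angular * wheel_separation / 2.0
    v_right = linear + angular * wheel_separation / 2.0
    return v_left / wheel_radius, v_right / wheel_radius


# Drive forward at 0.5 m/s while turning left at 1.0 rad/s.
left, right = twist_to_wheel_speeds(
    linear=0.5, angular=1.0, wheel_separation=0.4, wheel_radius=0.1
)
```

The right wheel spins faster than the left, which turns the robot counter-clockwise. The hardware interface then sends these wheel speeds to the motor drivers.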

Common Problems

Bad TF Tree

In practice, many navigation failures trace back to the TF tree: a missing transform, a wrong parent frame, or stale timestamps.

Poor Sensor Calibration

Even small calibration errors can destabilize SLAM.

Weak Odometry

Bad wheel odometry causes:

- map drift

- unstable localization

- navigation oscillations

Why This Stack Matters

Nav2 + SLAM Toolbox is the bridge between:

- ROS 2 theory

- embedded systems

- real autonomous robots

This is where robotics stops being “messaging between nodes” and becomes:

- spatial intelligence

- autonomous movement

- real-world interaction

FAQ

Can Jetson Orin Nano run Nav2 and SLAM Toolbox?

Yes. It is powerful enough for full embedded navigation systems.

Is lidar mandatory?

Not strictly, but it is highly recommended for reliable 2D navigation.

Does Nav2 support behavior trees?

Yes. Behavior Trees are a core component of Nav2 orchestration.

Can SLAM Toolbox run online while navigating?

Yes. This is one of its strongest features.

Is Nav2 real-time?

Partially. Some components can run in real-time-friendly configurations, but the full stack is not strictly hard real-time.

What’s Next

Once navigation works reliably, the next frontier is:

- semantic navigation

- AI-assisted planning

- visual SLAM

- multi-robot coordination

- autonomous exploration

This is where embedded AI and robotics truly start converging.