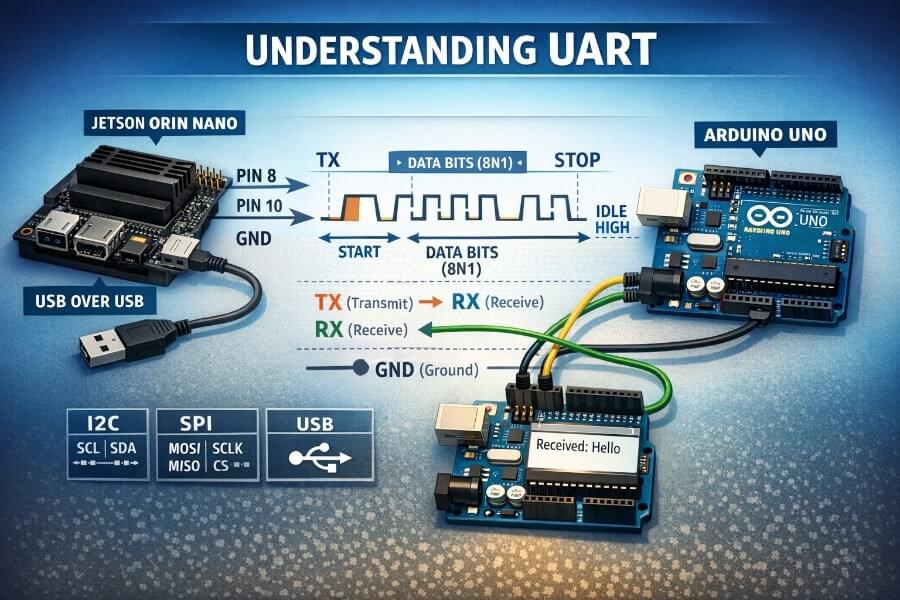

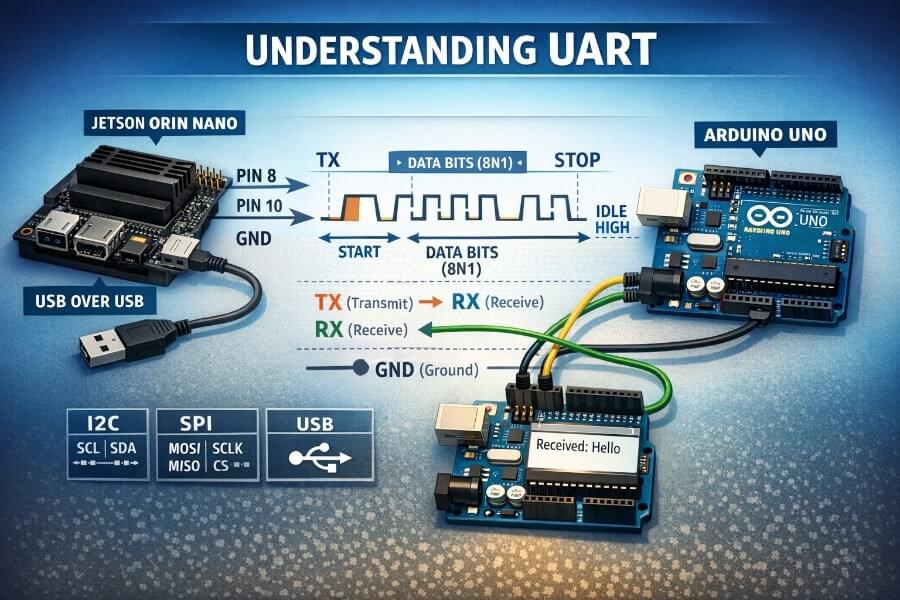

This article is written for anyone who wants a beginner-friendly but technically correct explanation of UART, plus a practical, step-by-step tutorial on Jetson Orin Nano (JetPack 6.x) using:

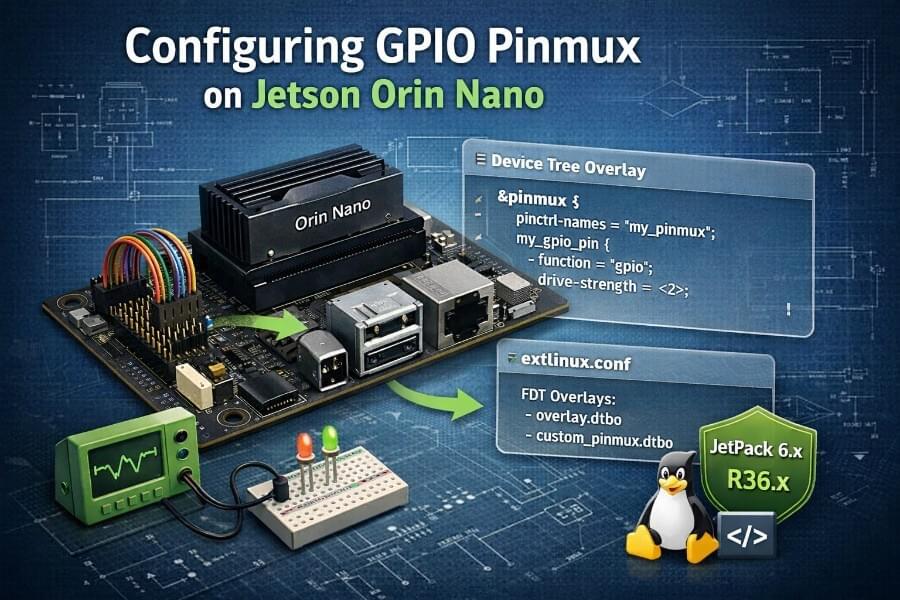

A beginner-friendly “memory dump” with every command explained, end-to-end, reboot-persistent (JetPack 6 / Orin family)

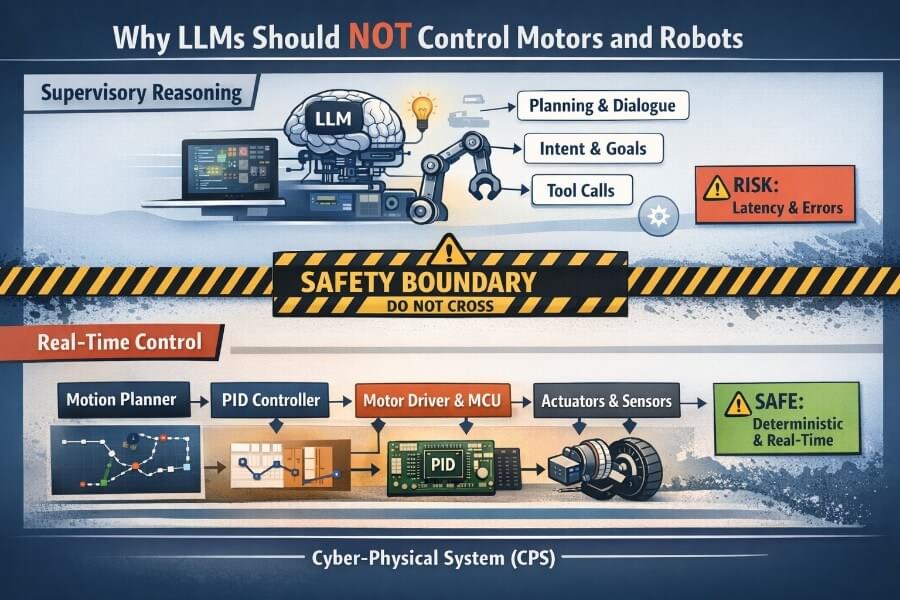

At CES 2026, Jensen Huang spoke extensively about Physical AI: AI systems that interact with the physical world (robots, drones, embodied AI, etc.). Physical AI is only partly related to LLMs.

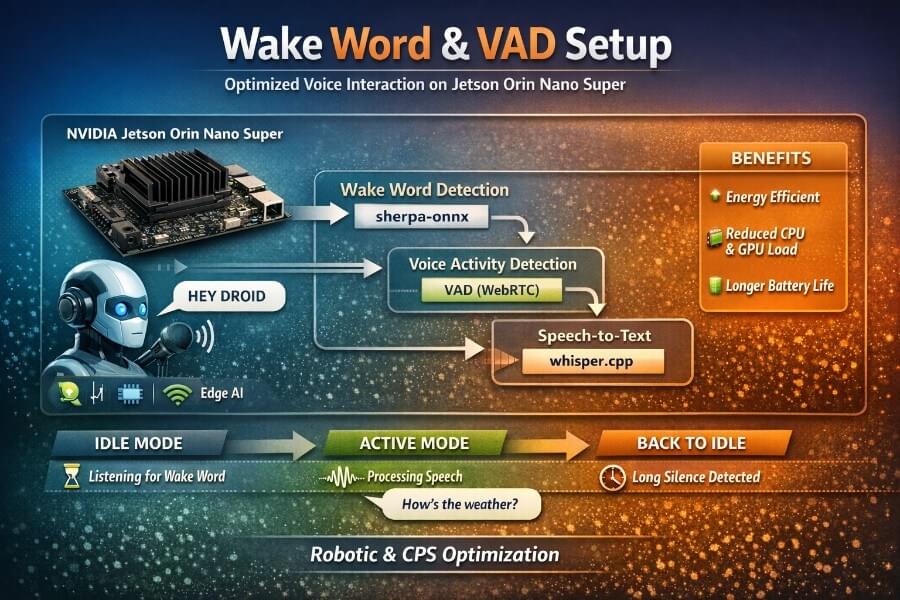

From Trial & Error to a Robust, Energy-Efficient Pipeline on Jetson Orin Nano Super

Building a natural voice interface for a robot or a cyber-physical system (CPS) is deceptively hard. Speech-to-Text (STT) models like Whisper work extremely well, but running them continuously is inefficient, power-hungry, and often unnecessary.

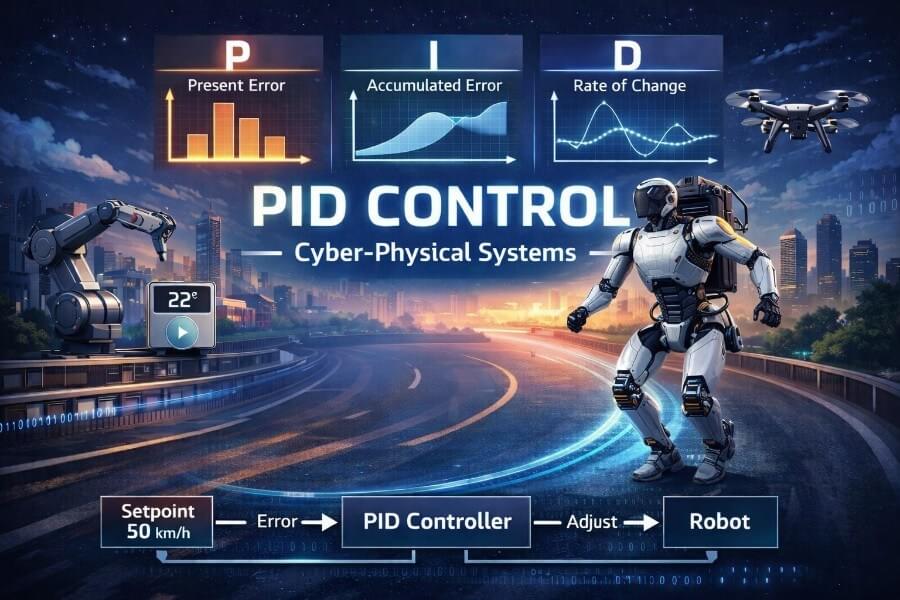

Imagine you are sitting in a car on a long, straight road. You press a button on the steering wheel.

Cruise control activated: 50 km/h

At first, everything feels simple. But then reality kicks in:

A practical journey from high latency to usable real-time speech-to-text

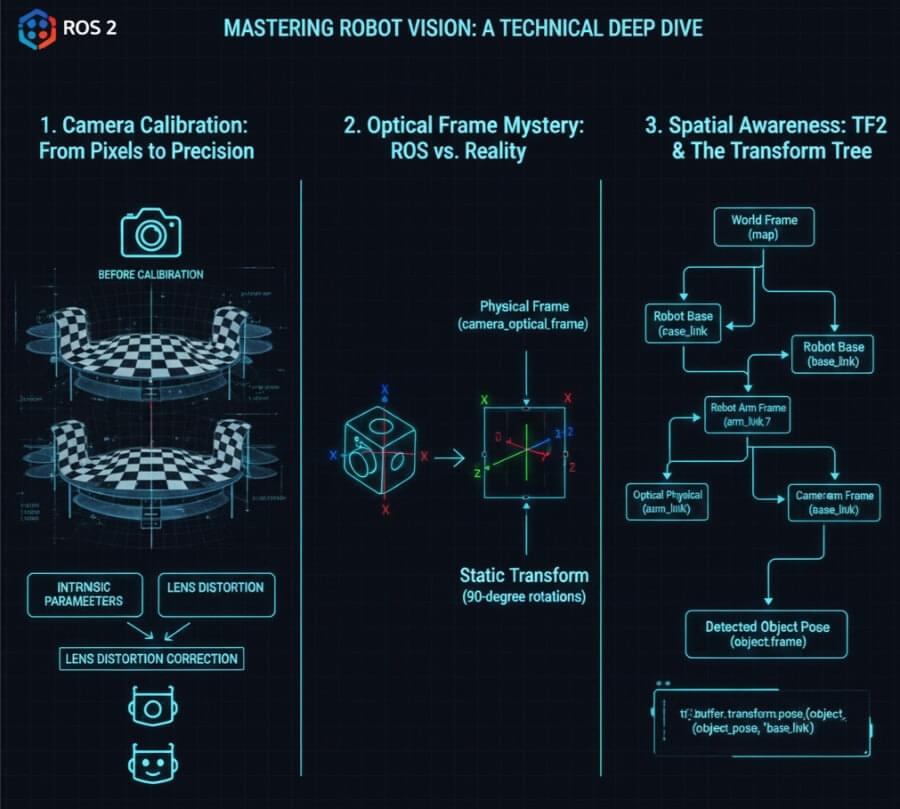

For a robot to intelligently interact with its environment, “seeing” is not enough. It needs to understand where objects are in its physical space. This seemingly simple act of perception is, in fact, one of the most complex challenges in robotics, requiring a precise synergy between camera hardware, software calibration, and a robust spatial representation framework.

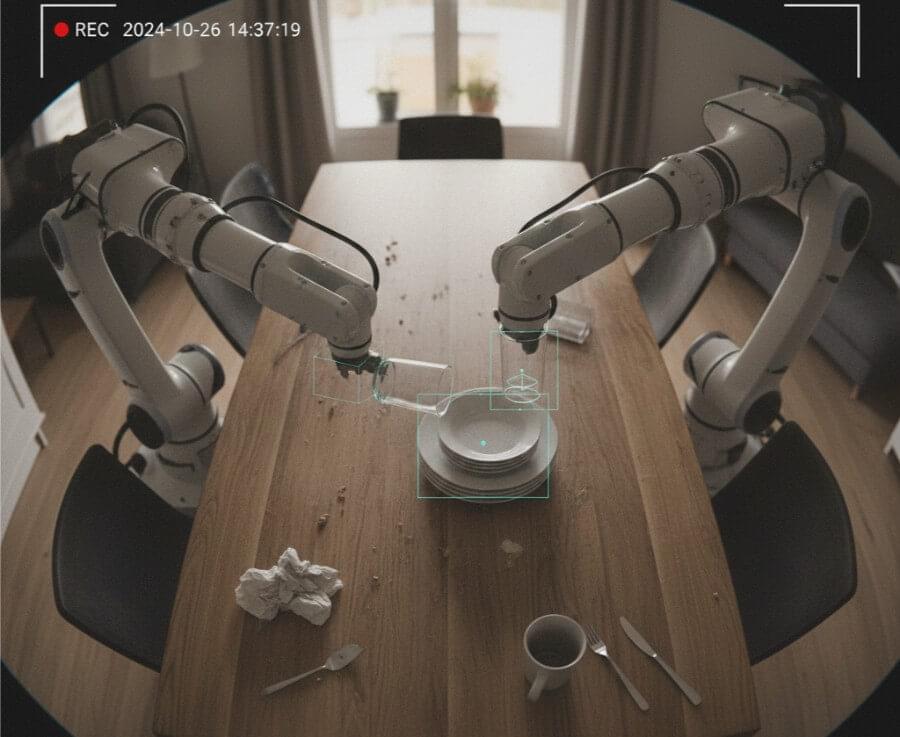

The past few years have witnessed an unprecedented explosion in Artificial Intelligence, driven first by Large Language Models (LLMs) like GPT-3/4, then by Vision-Language Models (VLMs) such as GPT-4V, LLaVA, and Gemini. These breakthroughs have allowed AI to understand and generate human-like text and to interpret visual information with remarkable accuracy.

If you are a web developer or a cloud architect, the world of robotics often feels like a foreign land. We imagine humanoid machines and low-level C code that looks more like math than software. But modern robotics has moved away from monolithic codebases. Instead, it relies on middleware, the “glue” that lets different software components talk to each other.

The idea of self-driving vehicles and smoothly flowing cities is quickly becoming a reality, thanks to Cyber-Physical Systems (CPS), which bring the physical and digital worlds together.