Artificial intelligence (AI) has rapidly evolved from a field focused on abstract problem-solving and digital environments to one that increasingly shapes our interactions with the physical world. At its core, AI refers to the development of intelligent systems capable of analyzing data, learning from experience, and making decisions—often with minimal human intervention. Traditional AI systems excelled in software domains, such as natural language processing, computer vision, and data analytics, operating primarily within virtual environments.

However, the rise of cyber-physical systems and physical AI systems marks a significant shift. These systems tightly integrate AI models with physical processes, sensor data, and real-world feedback. In physical AI, intelligent agents are no longer confined to digital spaces—they are embodied in robots, autonomous vehicles, and engineered systems that must perceive, reason, and act within complex physical environments. This convergence of machine learning, sensor fusion, and control algorithms enables AI-powered robots to perform tasks independently, adapt to dynamic conditions, and interact safely with humans and other physical systems.

As AI continues to bridge the gap between the digital and physical worlds, the focus is shifting from purely computational intelligence to embodied intelligence—where understanding spatial relationships, recognizing objects, and responding to real-time sensor data are just as critical as processing language or images. This transformation is redefining what it means for machines to be “intelligent” and is driving innovation across industries, from manufacturing and healthcare to transportation and civil infrastructure.

The Real Role of LLMs — and Other AI Models — in a Cyber-Physical Robot

The robotics industry is currently saturated with a seductive metaphor:

“AI is the brain of the robot.”

It sounds intuitive. It makes for good marketing. It simplifies investor decks.

It is also architecturally wrong.

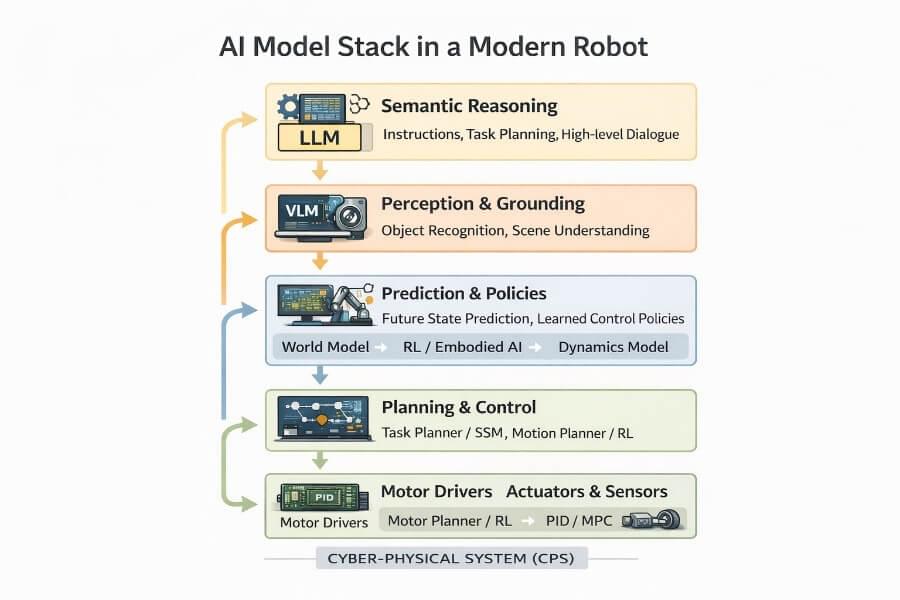

A robot is not a chatbot with wheels. It is a Cyber-Physical System (CPS) — a tightly coupled integration of software, sensors, actuators, control theory, timing constraints, and physical dynamics. At the core of this integration are control systems, which connect AI models with sensors and actuators to enable precise and adaptive operation in the physical world.

Large Language Models (LLMs), Vision-Language Models (VLMs), and Vision-Language-Action (VLA) models have dramatically expanded what robots can understand and reason about. But none of them, individually or collectively, replace the layered intelligence required for stable, safe, embodied systems. Autonomous robots, for example, rely on this integration of models and control systems to perceive, reason, and act in real-world environments.

This article will go deep into:

Why an LLM is not a “brain”

The real role of LLMs in robots

How VLMs and VLAs differ

What additional model classes matter in CPS (world models, RL, dynamics models, state-space models, embodied AI)

How these models fit into a robust architecture

Why layered intelligence is non-negotiable

This is not a hype piece. It is an architectural clarification.

1. A Robot Is a Stack, Not a Brain

Human brains integrate perception, reasoning, memory, and motor control in one biological organ.

Robots do not.

A deployable robot separates concerns across layers operating at radically different time scales.

| Biological Function | Robotics Equivalent |
|---|---|
| Reflexes | Firmware, motor drivers |
| Muscle control | PID / MPC controllers |
| Sensor fusion | State estimation |
| Spatial reasoning | Motion planning |
| Task sequencing | Behavior trees / planners |
| Language reasoning | LLM |

There is no single “brain” module.

There is a hierarchical CPS stack.

2. What an LLM Actually Is (And Isn’t)

A Large Language Model is:

A probabilistic sequence model

Trained on textual data

Optimized for semantic coherence

Operating in symbolic space

Inside a robot, an LLM is best understood as:

A high-level semantic planner and interpreter.

It is excellent at:

Understanding and generating natural language for human-robot interaction

Understanding human instructions

Resolving ambiguity

Generating structured tool calls

Sequencing abstract goals

Explaining system behavior

It is not:

A real-time controller

A state estimator

A dynamics model

A stability regulator

A motor policy

LLMs operate in abstraction.

Robots operate in physics.
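To make the “structured tool calls” role concrete, here is a minimal Python sketch of the boundary between the LLM and the rest of the stack. It assumes the LLM emits JSON of the form `{"tool": ..., "args": {...}}`; the tool names (`navigate_to`, `pick_object`), the argument ranges, and the `validate_tool_call` helper are all hypothetical. The point it illustrates is that the LLM's output is a proposal that gets parsed and checked before any lower layer acts on it.

```python
import json

# Hypothetical skill registry: argument name -> (type, min, max); None means no range.
ALLOWED_TOOLS = {
    "navigate_to": {"x": (float, -10.0, 10.0), "y": (float, -10.0, 10.0)},
    "pick_object": {"object_id": (str, None, None)},
}

def validate_tool_call(raw: str) -> dict:
    """Parse an LLM-emitted tool call; reject anything outside the registry."""
    call = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) if malformed
    if not isinstance(call, dict) or not isinstance(call.get("args"), dict):
        raise ValueError("expected an object with 'tool' and 'args'")
    name, args = call.get("tool"), dict(call["args"])
    if name not in ALLOWED_TOOLS:
        raise ValueError(f"unknown tool: {name!r}")
    schema = ALLOWED_TOOLS[name]
    if set(args) != set(schema):
        raise ValueError(f"arguments for {name} must be exactly {sorted(schema)}")
    for key, (typ, lo, hi) in schema.items():
        value = args[key]
        if typ is float and isinstance(value, int) and not isinstance(value, bool):
            value = float(value)  # a JSON number like 2 parses as int; accept it
        if not isinstance(value, typ):
            raise TypeError(f"{key} must be {typ.__name__}")
        if lo is not None and not lo <= value <= hi:
            raise ValueError(f"{key}={value} is outside [{lo}, {hi}]")
        args[key] = value
    return {"tool": name, "args": args}

print(validate_tool_call('{"tool": "navigate_to", "args": {"x": 2, "y": -1.5}}'))
# {'tool': 'navigate_to', 'args': {'x': 2.0, 'y': -1.5}}
```

A call such as `set_motor_torque`, which is not in the registry, raises an error instead of reaching the actuators: the LLM can only select among skills that the lower layers already know how to execute.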

3. Vision-Language Models (VLMs): Semantic Grounding

VLMs extend LLMs by incorporating visual input.

They convert:

pixels → structured meaning

They are powerful for:

Object identification

Scene description

Contextual understanding

Human-robot interaction

But they still run at low frequencies (typically 1–5 Hz inference in robotics settings). They do not stabilize joints or regulate torque.

VLMs add perception to reasoning — not control to physics.

4. Vision-Language-Action Models (VLAs): Toward Embodied Policies

VLA models attempt to map:

(vision + language) → actions

They are trained on large robotics datasets or simulated trajectories. They can:

Predict next actions

Suggest manipulation sequences

Generate end-to-end policies

This sounds closer to a “motor cortex.”

But VLAs:

Are data-driven approximations

Lack formal stability guarantees

Operate at limited frequencies

Require safety envelopes

Must be wrapped in deterministic execution layers

They can propose.

They should not directly drive actuators.
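One common way to keep a VLA in the “propose” role is to pass its outputs through a deterministic envelope before they reach the controller. The sketch below is a hypothetical example, not any particular framework's API. It assumes the VLA outputs joint-position targets in radians, clamps each target to the joint's range, limits how far it may move per control tick, and holds position if the model emits a non-finite value.

```python
import math
from dataclasses import dataclass

@dataclass(frozen=True)
class JointLimits:
    lower: float     # minimum joint angle, rad
    upper: float     # maximum joint angle, rad
    max_step: float  # largest allowed change per control tick, rad

def enforce_envelope(proposed, current, limits):
    """Turn VLA-proposed joint targets into targets the controller may accept."""
    if not (len(proposed) == len(current) == len(limits)):
        raise ValueError("proposed, current, and limits must have equal length")
    safe = []
    for target, now, lim in zip(proposed, current, limits):
        if not math.isfinite(target):
            safe.append(now)  # reject NaN/inf: hold the current position
            continue
        # Rate limit: move at most max_step toward the proposed target.
        step = max(-lim.max_step, min(lim.max_step, target - now))
        # Position limit: never command outside the joint's mechanical range.
        safe.append(max(lim.lower, min(lim.upper, now + step)))
    return safe

limits = [JointLimits(-1.57, 1.57, 0.05)] * 2
print(enforce_envelope([0.8, 2.0], [0.0, 1.55], limits))  # [0.05, 1.57]
```

In a real robot this check would typically run in the real-time layer or firmware, not in the same process as the model; Python is used here only for readability.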

5. Beyond LLMs, VLMs, and VLAs: Other Critical Model Classes

Modern CPS robotics relies on a broader ecosystem of models.

5.1 World Models — Internal Simulators

World models learn to predict how the environment evolves given actions.

They support:

Counterfactual reasoning

Long-horizon planning

Anticipation of consequences

Instead of reacting blindly, the robot can internally simulate:

“If I push this object, what happens?”

World models are essential for:

Manipulation

Dynamic navigation

Multi-step planning

They add predictive foresight.
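The “if I push this object, what happens?” question can be sketched as planning by imagined rollouts. In the Python below, `world_model` is a hypothetical stand-in for a learned model (here a hand-written 1-D object with damping), and the planner scores a fixed set of candidate push forces by simulating each one forward and keeping the best. This is a simple grid-search form of sampling-based model-predictive control; a real system would learn the model and usually sample or optimize action sequences rather than try a few constants.

```python
def world_model(state, action, dt=0.1):
    """Hypothetical stand-in for a learned model: predicts the next (pos, vel)."""
    pos, vel = state
    vel = vel + (action - 0.5 * vel) * dt  # push force minus a damping term
    pos = pos + vel * dt
    return (pos, vel)

def imagine(state, actions):
    """Roll the model forward without touching the real robot."""
    for a in actions:
        state = world_model(state, a)
    return state

def plan_push(state, goal, candidates, horizon=20):
    """Pick the constant push whose imagined outcome ends nearest the goal, nearly at rest."""
    def cost(force):
        final_pos, final_vel = imagine(state, [force] * horizon)
        return (final_pos - goal) ** 2 + 0.1 * final_vel ** 2
    return min(candidates, key=cost)

print(plan_push((0.0, 0.0), goal=1.0, candidates=[0.0, 1.0, 2.0, 5.0]))  # 1.0
```

Every candidate is evaluated in imagination only; the robot executes just the winner, and in a receding-horizon loop it would re-plan after each real step.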

5.2 Dynamics Models — Learning Physics

Dynamics models approximate physical interactions:

Object motion

Contact forces

Deformation

Slippage

These can be:

Learned from data

Hybrid physics-informed networks

Combined with classical models

They enable better planning under real-world constraints.

Unlike LLMs, they model Newtonian consequences — not linguistic relationships.
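The “hybrid physics-informed” option can be illustrated with a residual model: keep the Newtonian prior and learn only what it misses. In the Python sketch below, the mass, the assumed viscous-friction form of the residual (`-c * v`), and the synthetic measurements are all illustrative. The fit is ordinary least squares through the origin, not a neural network.

```python
import random

MASS_KG = 2.0  # assumed known, e.g. from the hardware spec (illustrative)

def nominal_accel(force):
    """Physics prior: Newton's second law with friction ignored."""
    return force / MASS_KG

def fit_friction(samples):
    """Fit the residual a - F/m = -c * v by least squares; returns c."""
    num = sum((a - nominal_accel(f)) * v for f, v, a in samples)
    den = sum(v * v for _, v, _ in samples)
    return -num / den

def hybrid_accel(force, vel, c_hat):
    """Physics prior plus the learned correction."""
    return nominal_accel(force) - c_hat * vel

# Synthetic "measurements" from a system whose true friction is c = 0.8.
rng = random.Random(0)
TRUE_C = 0.8
samples = []
for _ in range(200):
    f, v = rng.uniform(-5.0, 5.0), rng.uniform(-2.0, 2.0)
    a = f / MASS_KG - TRUE_C * v + rng.gauss(0.0, 0.05)  # simulated sensor noise
    samples.append((f, v, a))

c_hat = fit_friction(samples)
print(f"estimated friction coefficient: {c_hat:.3f}")
```

Because the prior already captures F = ma, the learned part only has to explain a small, structured correction, which typically needs far less data than learning the full dynamics from scratch.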

5.3 Reinforcement Learning (RL) Policies

Reinforcement learning learns control policies through trial-and-error interaction (often in simulation).

RL is used for:

Legged locomotion

Grasping

Balancing

Agile maneuvers

RL policies can operate at higher frequencies than LLMs.

However:

They require safety wrappers

They can be brittle outside training distribution

They still sit above firmware-level safety

RL augments control.

It does not eliminate control theory.
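The trial-and-error loop itself can be shown with tabular Q-learning, one of the simplest RL algorithms, on a toy corridor task. The environment, reward, and hyperparameters below are illustrative assumptions. Locomotion and grasping policies are typically trained with deep RL in physics simulators, but the underlying loop is the same: act, observe the outcome, and update toward what was observed.

```python
import random

N_STATES = 5        # corridor cells 0..4; cell 4 is the goal
ACTIONS = (-1, +1)  # step left or step right

def step(state, action):
    """Toy simulator: reward 1.0 only when the goal cell is reached."""
    nxt = min(max(state + action, 0), N_STATES - 1)
    done = nxt == N_STATES - 1
    return nxt, (1.0 if done else 0.0), done

def greedy(q, state, rng):
    """Best-known action, breaking ties at random."""
    best = max(q[(state, a)] for a in ACTIONS)
    return rng.choice([a for a in ACTIONS if q[(state, a)] == best])

def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Trial and error: usually exploit, sometimes explore at random.
            action = rng.choice(ACTIONS) if rng.random() < epsilon else greedy(q, state, rng)
            nxt, reward, done = step(state, action)
            # Q-learning update toward the one-step bootstrapped target.
            future = 0.0 if done else max(q[(nxt, a)] for a in ACTIONS)
            q[(state, action)] += alpha * (reward + gamma * future - q[(state, action)])
            state = nxt
    return q

q = train()
policy = {s: max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(N_STATES - 1)}
print(policy)
```

The learned policy is only as good as the simulator and reward it was trained on, which is exactly why the text above insists on safety wrappers and on keeping classical control underneath.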

5.4 State-Space Models (SSMs) and Temporal Models

Robots process continuous sensor streams:

IMU

Lidar

Torque sensors

Vision sequences

State-Space Models maintain long-term temporal memory efficiently.

They are suited for:

Continuous state tracking

Long-horizon prediction

Persistent environmental understanding

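Unlike Transformers, whose self-attention cost grows quadratically with sequence length, SSMs scale linearly, which makes them better suited to long, continuous sensor streams.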

They are particularly promising for CPS with long-duration interactions.
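The core of a state-space model is a linear recurrence that folds each new sample into a fixed-size state, so memory stays constant and compute grows linearly with stream length. The Python sketch below shows only that recurrence for a diagonal SSM over a scalar sensor stream. The decay rates are hand-picked for illustration, whereas learned SSM layers such as S4 or Mamba learn these parameters and compute the recurrence with more efficient algorithms.

```python
import math

def ssm_scan(inputs, a, b, c):
    """Diagonal linear SSM over a scalar input stream.

    x_k[i] = a[i] * x_{k-1}[i] + b[i] * u_k   (stable when |a[i]| < 1)
    y_k    = sum_i c[i] * x_k[i]
    """
    state = [0.0] * len(a)  # fixed-size memory, independent of stream length
    outputs = []
    for u in inputs:        # one pass: O(len(inputs) * len(a)) work
        state = [ai * xi + bi * u for ai, xi, bi in zip(a, state, b)]
        outputs.append(sum(ci * xi for ci, xi in zip(c, state)))
    return outputs

# Three channels that forget at different time scales (10 ms, 100 ms, 1 s) at 1 kHz.
dt = 0.001
a = [math.exp(-dt / tau) for tau in (0.01, 0.1, 1.0)]
b = [1.0 - ai for ai in a]  # unit steady-state gain per channel
c = [1 / 3] * 3
step_input = [1.0] * 5000   # a step in a sensor reading, held for 5 s
y = ssm_scan(step_input, a, b, c)
```

The fast channels track the step almost immediately while the slow one is still catching up, which is the sense in which an SSM can hold several time horizons in a small state.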

5.5 Embodied / Physical AI

“Physical AI” emphasizes:

Learning through interaction

Sensorimotor grounding

Adaptive embodiment

Operating in dynamic environments that demand real-time perception and adaptation

These systems learn not just from data, but from:

Acting in the world

Observing consequences

Updating internal representations

Physical AI shifts the focus from static reasoning to dynamic adaptation.

But again: adaptation must respect real-time control boundaries.

Physical AI is reshaping industries by enabling robots to sense, reason, and act in real time, improving safety, precision, and adaptability across a range of applications.

6. Time Scales Define Architecture

Understanding CPS robotics requires understanding time.

| Layer | Typical Frequency |
|---|---|
| Current control loop | 1–10 kHz |
| Position control | 100–1000 Hz |
| Motion planning | 10–100 Hz |
| Behavior logic | 1–10 Hz |
| LLM reasoning | 0.1–2 Hz |

The deeper you go in the stack, the faster and more deterministic it must be.

LLMs operate at the slowest layer.

They cannot compensate for oscillations in a motor.
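The ratios in the table are easier to feel with a quick count. The Python sketch below drives every layer from one shared 10 kHz clock, using one illustrative rate picked from each row's range, and counts how often each layer runs in one second. Real robots typically split these loops across firmware, a real-time OS, and separate processes rather than a single Python loop; this only shows the arithmetic.

```python
# One illustrative rate from each row's range in the table.
LAYER_RATES_HZ = {
    "current_control": 10_000,
    "position_control": 1_000,
    "motion_planning": 100,
    "behavior_logic": 10,
    "llm_reasoning": 1,
}

def count_updates(duration_s=1.0, base_hz=10_000):
    """Count how many times each layer runs when all share one base clock."""
    for name, hz in LAYER_RATES_HZ.items():
        if base_hz % hz:
            raise ValueError(f"{name} rate {hz} Hz does not divide the base clock")
    periods = {name: base_hz // hz for name, hz in LAYER_RATES_HZ.items()}
    counts = dict.fromkeys(LAYER_RATES_HZ, 0)
    for tick in range(round(duration_s * base_hz)):
        for name, period in periods.items():
            if tick % period == 0:  # this layer is due on this tick
                counts[name] += 1
    return counts

counts = count_updates()
print(counts["current_control"] // counts["llm_reasoning"])  # 10000
```

With these rates, the current loop completes about ten thousand corrections between two LLM responses, so a motor oscillation has to be handled long before the LLM could even observe it.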

7. A Unified Cyber-Physical Systems (CPS) Architecture

A robust architecture integrates multiple model types safely:

[Diagram: layered CPS architecture stack, beginning with the Human at the top]

Edge computing plays a crucial role in this architecture by enabling real-time, low-latency processing directly at the device level. This supports immediate decision-making and actuation in autonomous systems, reducing dependence on cloud connectivity.

Physical AI systems require massive amounts of sensor data, 3D environmental models, and real-time information for effective training and deployment.

Each layer:

Operates at different time scales

Has different failure modes

Requires different guarantees

No single model replaces the stack.

8. Why the “AI Brain” Narrative Fails

The brain metaphor implies:

Centralized intelligence

Unified control

Seamless reasoning-action coupling

CPS robotics demands:

Isolation

Validation

Deterministic execution

Formal stability

Safety boundaries

Enforcing safety boundaries requires continuous monitoring, especially as robots operate in complex, real-world environments. Systems deployed in public spaces also need a comprehensive safety strategy that includes regulatory compliance and risk assessment.

Allowing an LLM or VLA to directly emit motor commands bypasses:

Physical limits

Stability analysis

Safety enforcement

Real-time guarantees

That is not innovation.

It is architectural negligence.
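Continuous monitoring and real-time guarantees are often enforced with a watchdog in the low-level layer: if commands from the slow, AI-driven layers stop arriving on time, the fast loop falls back to a known-safe command instead of repeating a stale one. The Python class below is a hypothetical sketch of that pattern (production versions usually live in firmware or a real-time controller). The timeout and safe command are illustrative, and the clock is injectable so the behavior can be tested.

```python
import time

class CommandWatchdog:
    """Low-level guard: if high-level commands go stale, fall back to a safe command."""

    def __init__(self, timeout_s, safe_command, clock=time.monotonic):
        self.timeout_s = timeout_s
        self.safe_command = safe_command
        self.clock = clock
        self._last_cmd = safe_command
        self._last_time = None  # no command received yet

    def update(self, command):
        """Called by the slow planning layer whenever it produces a new command."""
        self._last_cmd = command
        self._last_time = self.clock()

    def current(self):
        """Called by the fast control loop on every tick."""
        if self._last_time is None or self.clock() - self._last_time > self.timeout_s:
            return self.safe_command
        return self._last_cmd

fake_now = [0.0]
wd = CommandWatchdog(timeout_s=0.2, safe_command=0.0, clock=lambda: fake_now[0])
wd.update(0.5)       # planner sends a velocity command at t = 0.0 s
fake_now[0] = 0.1
print(wd.current())  # 0.5: the command is still fresh
fake_now[0] = 0.3
print(wd.current())  # 0.0: the command is stale, so fall back to a safe stop
```

The fast loop never waits on the slow one: it always has an answer on time, and that answer is safe by construction when the AI layers fall silent.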

9. The Real Role of Each Model Class

| Model Type | Role in the Robot |
|---|---|
| LLM | Intent interpretation, high-level planning |
| VLM | Semantic scene grounding |
| VLA | Action proposal / policy generation |
| World Model | Future state prediction |
| Dynamics Model | Physical interaction modeling |
| RL Policy | Learned control behavior |
| SSM | Long-term temporal memory |
| Classical Control | Stability & actuation |

The key insight:

Intelligence in robotics is distributed, layered, and bounded.

Advances in these AI subfields are making robots more perceptive, adaptive, and capable of learning from experience, extending intelligent automation and adaptive decision-making across many industries.

10. Applications and Challenges

The convergence of artificial intelligence and physical systems is ushering in a new era of innovation, where cyber-physical systems and physical AI systems are fundamentally changing how we interact with the physical world. AI-powered robots, equipped with advanced machine learning and natural language processing capabilities, are now able to analyze data from a wide array of sensors and make decisions in complex environments. This has enabled the development of autonomous systems, such as self-driving cars and autonomous mobile robots, that can perform tasks independently, often with minimal human intervention.

A central challenge in training physical AI models is the need for high-quality real-world sensor data. This data is crucial for teaching AI models to recognize objects, understand spatial relationships, and navigate the physical environment. However, collecting and processing real-world sensor data can be both time-consuming and costly. To address this, researchers are increasingly turning to synthetic data generation, using simulated environments and virtual testing to accelerate the training of physical AI models. These simulation environments allow for rapid iteration and safe exploration of complex scenarios before deployment in the physical world.
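One widely used way to make simulated data more useful for the real world is domain randomization: each training episode samples its physical and visual parameters from ranges wide enough that the real robot looks like just another sample. The Python sketch below shows only the sampling step. The parameter names and ranges are hypothetical, and in practice they would be passed to whatever simulator is in use.

```python
import random
from dataclasses import dataclass

@dataclass(frozen=True)
class SimParams:
    friction: float          # contact friction coefficient
    object_mass_kg: float
    camera_noise_std: float  # pixel noise, as a fraction of full scale
    light_intensity: float   # relative to nominal lighting

# Hypothetical ranges; real ones would bracket measurements from the target hardware.
RANGES = {
    "friction": (0.4, 1.2),
    "object_mass_kg": (0.1, 2.0),
    "camera_noise_std": (0.0, 0.05),
    "light_intensity": (0.3, 1.5),
}

def sample_params(rng):
    """Draw one randomized simulation configuration."""
    return SimParams(**{name: rng.uniform(lo, hi) for name, (lo, hi) in RANGES.items()})

rng = random.Random(42)
episode_params = [sample_params(rng) for _ in range(1000)]
print(episode_params[0])
```

A policy that succeeds across all of these variations has a better chance of tolerating the one variation it was never shown: the real world.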

The applications of physical AI are vast and rapidly expanding. In manufacturing, factory robots and robotic arms powered by AI are improving efficiency, quality control, and process control. In healthcare, surgical robots are performing complex procedures with greater precision and reliability. Service robots are being deployed for cleaning, maintenance, and other tasks in public spaces, while humanoid robots are beginning to demonstrate the ability to navigate and interact within complex physical environments. Autonomous vehicles and robots are transforming transportation and logistics, offering the promise of safer, more efficient movement of people and goods.

Despite these advances, deploying physical AI systems comes with significant challenges. Safety and liability are paramount, especially as autonomous vehicles and robots operate in public spaces alongside humans. Cybersecurity is another critical concern, as physical AI systems must be protected against potential attacks that could compromise safety or disrupt operations. Integrating AI-powered robots with traditional mechanical systems and engineered systems requires robust control algorithms, reliable network connectivity, and seamless coordination between software components and hardware platforms.

To overcome these challenges, ongoing research—supported by organizations like the National Science Foundation—is focused on advancing computer vision, machine learning, and natural language processing, as well as developing new architectures for intelligent systems that can operate safely and efficiently in dynamic, real-world environments. The future of physical AI will depend on our ability to design systems that not only perform complex tasks and navigate complex environments, but also prioritize safety, security, and meaningful human interaction.

11. The Future: Hybrid, Not Monolithic

The most promising robotics systems will:

Combine foundation models with predictive world models

Use RL for adaptability

Use classical control for stability

Maintain strict architectural separation

Enforce safety at firmware and hardware levels

Future system design will increasingly rely on systematic data collection from real-world environments to train and improve physical AI models. Training physical AI models requires large, diverse, and physically accurate data about the spatial relationships and physical rules of the real world.

The frontier is not:

“Let the LLM control everything.”

It is:

“Design architectures where each intelligence module does what it is structurally suited for.”

Conclusion

An LLM is not a brain.

A VLM is not a sensory cortex.

A VLA is not a motor cortex.

A robot is not a monolithic intelligence.

It is a Cyber-Physical System composed of:

Semantic reasoning

Perceptual grounding

Predictive modeling

Learned policies

Deterministic control

Hardware-level enforcement

Robots that succeed in the real world will not be the ones that maximize model size.

They will be the ones that respect architecture.

Layered intelligence beats hype every time.