Modern robots rarely fail because one node crashes. They fail because the architecture looked clean in simulation, then became fragile under load: too many hidden couplings, unclear frame ownership, blocking service calls in control paths, impossible startup ordering, or logs and bags that tell you everything except what actually went wrong.

That is why ROS 2 architecture matters more than ever. ROS (Robot Operating System) is an open-source middleware framework for robotics software development. The original ROS provided a flexible architecture and node management but faced scalability and real-time limitations, which motivated the development of ROS 2. In 2026, ROS 2 is the mainstream foundation for production robotics stacks, with Jazzy and Kilted among the supported distributions. Each distribution is a versioned collection of packages, tools, and core functionality. Development and ongoing support of ROS and ROS 2 are led by Open Robotics, which plays a key role in maintaining and expanding the ROS ecosystem. The ecosystem around Nav2, MoveIt 2, ros2_control, tracing, and modern bagging tooling has matured enough that the main challenge is no longer “can ROS 2 do it?” but “can your system design survive scale, latency, failures, and long-term evolution?”

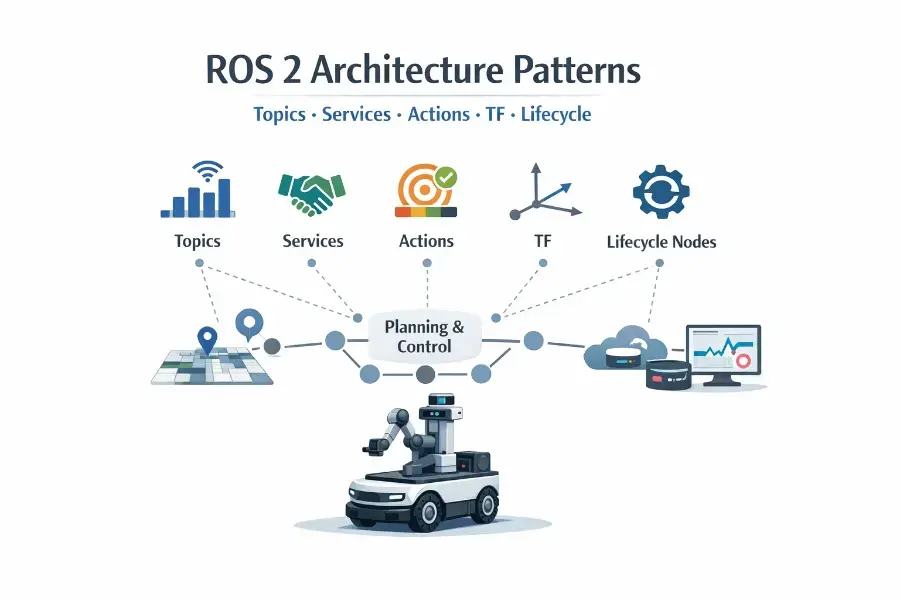

This article is about the patterns that hold up when your robot moves beyond toy demos: how to choose between topics, services, and actions; when lifecycle nodes are worth the operational complexity; how to draw clean TF boundaries; how to decide node granularity; what anti-patterns to avoid; and why observability and rosbag should be designed in from day one rather than added after the first incident.

1. Start from architecture, not APIs

ROS 2 gives you nodes, topics, services, actions, parameters, executors, callback groups, QoS, composition, lifecycle management, TF, launch, and tracing. That abundance is powerful, but it also makes it easy to create systems that are locally reasonable and globally chaotic. ROS 2 organizes code into packages, modular units that contain nodes, tools, and configuration. The scaling question is not “which primitive exists?” It is “which primitive expresses the operational contract of this interaction?”

A scalable ROS 2 system usually has five layers, whether you are building a mobile robot, a manipulator, or a humanoid:

Device and driver layer: cameras, LiDARs, IMUs, motor interfaces, grippers, GPIO, buses.

State estimation and world modeling layer: localization, odometry fusion, kinematics, perception outputs, planning scene, maps.

Behavior and planning layer: navigation, motion planning, task sequencing, behavior trees, recovery logic.

Interaction and mission layer: teleop, fleet APIs, operator UI, voice, cloud interfaces, high-level autonomy.

Operations layer: launch, lifecycle orchestration, diagnostics, logging, tracing, bagging, replay, failure analysis.

These layers are supported by a rich ecosystem of software libraries that provide essential robotics functionality. The build system configures and builds the packages that make up each layer, so they can be integrated and deployed consistently.

The communication primitive you choose should match the semantics of the layer and the temporal behavior of the interaction. Topics are for streaming state and events. Services are for short request-response operations. Actions are for long-running goal-oriented tasks with progress and cancellation. TF is for time-aware spatial relationships. Lifecycle is for managing bring-up and shutdown in a deterministic way. Nodes use all of these through the ROS client libraries, such as rclcpp for C++ and rclpy for Python.

ROS 2 supports the whole robotics software development process with developer tools for launch, introspection, debugging, visualization, plotting, logging, and playback, which streamline application creation, testing, and troubleshooting.

ROS 2 is designed for scalability and flexibility, with cross-platform support across multiple operating systems.

Originally developed as a research platform by PhD students at Stanford University, ROS has evolved into a foundational tool for academic and industry research. The Open Source Robotics Foundation plays a key role in supporting and maintaining the ROS ecosystem, fostering collaboration and innovation in the global robotics community.

Matching each primitive to its semantics sounds obvious, but many ROS 2 systems still mix these responsibilities. The result is not just ugly architecture. It causes deadlocks, lost observability, ambiguous ownership, harder testing, and brittle recovery behavior.

2. Topic vs service vs action: choose by semantics, not convenience

Topics: continuous dataflow, state publication, decoupling

ROS 2 topics are the backbone of the graph. They implement asynchronous publish-subscribe communication, allowing nodes to send messages and receive messages without direct knowledge of each other. This model naturally fits sensor streams, state estimates, commands, detections, status events, and telemetry. ROS 2 documentation explicitly frames topics as the bus for message exchange in modular systems.

Use a topic when at least one of these is true:

the producer emits data continuously or sporadically without knowing who consumes it

there may be multiple consumers now or later

freshness matters more than acknowledgment

replay and recording matter

you want loose coupling between producers and consumers

Examples:

/camera/image_raw

/joint_states

/odom

/cmd_vel

/detections

/battery_state

/mission_events

Topics are used to transmit sensor data, actuator commands, and other message types between nodes. Topics are not just for “big data.” They are the right abstraction for many small events too. A perception node publishing “object detected” or a mission supervisor publishing “dock requested” is often better modeled as a topic than a service, because the event may be consumed by several downstream nodes and you usually want to preserve observability and baggability. Topics create a data trail; services usually do not.

The real design work with topics is not only the message type, which is defined in an interface (.msg) file in a ROS 2 package. It is the QoS contract. ROS 2 exposes QoS policies such as history, depth, reliability, durability, deadline, lifespan, and liveliness, and the defaults are typically keep-last, depth 10, reliable, and volatile for publishers and subscriptions. Those defaults are reasonable for many apps, but they are not universally correct. High-rate sensors often want best-effort, while critical commands or state replication may want reliable. Late-joining consumers may require transient-local durability for selected data, but not for everything.

In practice, scalable topic design means treating each topic as a contract with three dimensions:

Meaning: what the message represents

Timing: expected rate, freshness tolerance, staleness behavior

Delivery: QoS, loss tolerance, late joiner behavior, recording strategy
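One way to make the three dimensions concrete is to write each topic's contract down as data and check the delivery part before deployment. The sketch below is plain Python, not an rclpy API: `TopicContract`, `delivery_mismatches`, and the `/scan` example are hypothetical names chosen for illustration. It encodes only two compatibility rules (a subscription requesting reliable delivery does not match a best-effort publisher, and a subscription requesting transient-local durability does not match a volatile publisher) and ignores the other QoS policies.

```python
from dataclasses import dataclass
from enum import Enum


class Reliability(Enum):
    BEST_EFFORT = "best_effort"
    RELIABLE = "reliable"


class Durability(Enum):
    VOLATILE = "volatile"
    TRANSIENT_LOCAL = "transient_local"


@dataclass(frozen=True)
class TopicContract:
    """One endpoint's view of a topic: meaning, timing, and delivery."""
    name: str
    meaning: str                 # what the message represents
    expected_rate_hz: float      # timing: nominal publish rate
    max_age_s: float             # timing: older data counts as stale
    reliability: Reliability = Reliability.RELIABLE
    durability: Durability = Durability.VOLATILE
    depth: int = 10              # keep-last history depth


def delivery_mismatches(pub: TopicContract, sub: TopicContract) -> list[str]:
    """Return reasons why the subscription would not match the publisher.

    A subscription may request less than the publisher offers, never more.
    """
    problems = []
    if (pub.reliability is Reliability.BEST_EFFORT
            and sub.reliability is Reliability.RELIABLE):
        problems.append("subscription requests reliable, publisher offers best-effort")
    if (pub.durability is Durability.VOLATILE
            and sub.durability is Durability.TRANSIENT_LOCAL):
        problems.append("subscription requests transient-local, publisher offers volatile")
    return problems


# Hypothetical contracts: a high-rate sensor and two consumers.
scan_pub = TopicContract("/scan", "2D laser scan", 20.0, 0.1,
                         reliability=Reliability.BEST_EFFORT)
scan_viewer = TopicContract("/scan", "2D laser scan", 20.0, 0.5,
                            reliability=Reliability.BEST_EFFORT)
scan_logger = TopicContract("/scan", "2D laser scan", 20.0, 0.5,
                            reliability=Reliability.RELIABLE)

print(delivery_mismatches(scan_pub, scan_viewer))  # compatible: empty list
print(delivery_mismatches(scan_pub, scan_logger))  # one mismatch reported
```

A check like this could run in CI against a table of contracts, so a QoS mismatch shows up as a failed test instead of a silent lack of data on the robot.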

Topic names, remappings, and node parameters are typically set in launch files and parameter files, which keeps the wiring of the graph out of node code. When recording or replaying topic data, the bag storage format (for example MCAP) matters for compatibility and reproducibility.

A strong rule is this: never create a topic only because “it was easier than defining a service or action.” Topics are best when the communication is fundamentally stream-like or event-like.

Services: synchronous intent, short bounded work

ROS 2 services are request-response, functioning as mechanisms for remote procedure calls (RPCs) between nodes. The client asks for something; the server answers once. The design docs and tutorials position them as the right fit when you need a response but not progress feedback.

Use a service when the operation is:

conceptually short

bounded in time

not something you need to cancel halfway through

not something that benefits from progress feedback

naturally one client to one server

Examples:

resetting an estimator

loading a map

querying calibration metadata

switching controller modes

toggling a debug feature

requesting a one-shot computation with limited cost

Services work very well for administrative and configuration operations. ROS 2 parameters themselves follow this pattern: there is no global parameter server as in ROS 1, and each node hosts its own parameters and exposes them through services. Frameworks like ros2_control take the same approach, exposing key management operations as services while still using lifecycle concepts underneath. Controller Manager, for example, manages controller lifecycles and hardware access while offering services to the ROS world.

But services are widely abused in robotics. A blocking service in a path that needs responsiveness is one of the fastest ways to build a robot that “usually works.” Services are especially dangerous when developers use them to trigger things that are actually long-running behaviors: “go to pose,” “pick object,” “scan room,” “align to dock.” Those are actions, not services.

Another common mistake is using services between layers that should be decoupled by topics. For example, a planner asking a perception server synchronously for “current obstacle list” often creates hidden timing dependencies. Publishing the world model or relevant state on topics is usually cleaner and more observable.

Actions: long-running goals with feedback and cancelation

The ROS 2 action design article is very clear: actions exist for goal-oriented work that takes time, where clients need progress and the ability to cancel. They complement topics and services rather than replacing them.

Use an action when the operation:

has a goal

can run for seconds or minutes

benefits from intermediate feedback

may need cancelation or preemption

returns a meaningful terminal result

Actions are especially suited for long running tasks that involve multiple steps and require ongoing feedback and the ability to cancel, such as navigation or manipulation tasks in robotics.

Examples:

navigate to a pose

follow waypoints

execute a trajectory

move arm to joint goal

run a manipulation sequence

perform hybrid planning

scan an area or patrol a route

This is exactly how advanced stacks behave in practice. Nav2 is built around action servers and behavior logic for navigation tasks, while also relying heavily on lifecycle nodes for system bring-up and robustness. MoveIt 2 interfaces similarly combine topics, services, and actions, and its higher-level interfaces communicate with the move group through that mix.

A good architectural rule is:

topics publish the world as it is

services change configuration or request bounded computations

actions ask the robot to do something that may take time

That separation sounds simple, but it is what keeps navigation, manipulation, and mission systems understandable as they grow.

3. A practical decision framework

When you are unsure which primitive to use, ask these questions in order.

Does the producer need to know who listens?

If no, favor a topic.

Is there exactly one response and no progress?

If yes, consider a service.

Does the task take non-trivial time or need cancelation?

If yes, it is an action.

Would bagging and replay be useful?

If yes, topics often give better operational leverage than a service.

Is the interaction part of the robot’s spatial model?

If yes, it may belong in TF rather than an ad hoc topic.

Is startup/shutdown ordering important?

If yes, lifecycle management may be more important than the communication primitive itself.

That last point is underappreciated. Many architecture failures are not about choosing the wrong interface. They are about failing to define the operational lifecycle around correct interfaces.
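The questions above can be sketched as a small decision function. This is an illustrative model, not a rule taken from the ROS 2 documentation: the `Interaction` fields and `choose_primitive` are hypothetical names, and the sketch assumes a priority order in which the spatial question is checked first and the lifecycle question is reported as a separate flag, because it is orthogonal to the choice of primitive.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interaction:
    """Answers to the questions above for one interaction (hypothetical fields)."""
    producer_must_know_consumer: bool = False
    single_response_no_progress: bool = False
    long_running_or_cancelable: bool = False
    replay_is_useful: bool = False
    is_spatial_relationship: bool = False
    startup_order_matters: bool = False


def choose_primitive(i: Interaction) -> tuple[str, bool]:
    """Return (primitive, lifecycle_recommended) for one interaction."""
    if i.is_spatial_relationship:
        primitive = "tf"
    elif not i.producer_must_know_consumer:
        primitive = "topic"
    elif i.long_running_or_cancelable:
        primitive = "action"
    elif i.replay_is_useful:
        primitive = "topic"
    elif i.single_response_no_progress:
        primitive = "service"
    else:
        primitive = "topic"
    return primitive, i.startup_order_matters


examples = {
    "odometry stream": Interaction(replay_is_useful=True),
    "navigate to a pose": Interaction(producer_must_know_consumer=True,
                                      long_running_or_cancelable=True,
                                      startup_order_matters=True),
    "reset an estimator": Interaction(producer_must_know_consumer=True,
                                      single_response_no_progress=True),
    "map to odom correction": Interaction(is_spatial_relationship=True),
}
for name, interaction in examples.items():
    print(name, "->", choose_primitive(interaction))
```

The value of writing it down this way is less the function itself than the forced answers: a team that cannot fill in the fields for an interface has not yet defined its contract.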

4. Lifecycle nodes: when correctness at startup matters

ROS 2 managed nodes, also called lifecycle nodes, define a state machine with transitions such as configuring, activating, deactivating, cleaning up, and shutting down. The design article and lifecycle documentation describe them as a way to control when a node allocates resources, starts processing, stops interfaces, and exits cleanly.

During the configuration phase, lifecycle nodes allow for the adjustment of configurable parameters, which can be used to modify node behavior and performance as needed.

This matters more in robots than in web services. A robot is not just software. It owns cameras, actuators, controllers, maps, transforms, calibration, sometimes safety interfaces, and often hardware that must not start in an undefined state.

Lifecycle nodes are especially valuable when:

publishers should not emit before configuration is complete

subscribers should not process messages until dependencies are ready

hardware interfaces need ordered startup

you want deterministic bring-up and teardown

components may be restarted or replaced online

supervisors or watchdogs need explicit states, not vague logs

That is exactly why lifecycle management is used heavily in Nav2, whose documentation states that lifecycle nodes are used throughout the project and that all servers use them. The lifecycle manager exists specifically to ensure required nodes are instantiated correctly before they execute and to support restarting or replacing nodes online.

Where lifecycle nodes shine

A scalable robot typically has some nodes that should always be lifecycle-managed:

map server

localization

planners and controllers

hardware drivers with expensive setup

perception pipelines that depend on calibration or models

mission-critical bridges to hardware or cloud subsystems

Lifecycle adds discipline. Configuration becomes the phase where you load parameters, validate calibration, allocate buffers, open device handles, or load models. Activation becomes the point where publishers, subscriptions, timers, and command outputs truly go live. Deactivation cleanly stops dataflow without necessarily destroying the node. Cleanup releases resources.

This pattern is far superior to the common anti-pattern of launching everything simultaneously and hoping the dependencies settle in the right order.
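To make the phases tangible, here is a toy model of the managed-node state machine in plain Python. It covers only the four primary states and the main transitions, and it leaves out the intermediate transition states and error handling that the real design defines. `ManagedNodeModel` and `bring_up` are hypothetical names for this sketch and are not the rclpy or rclcpp lifecycle API.

```python
from enum import Enum


class State(Enum):
    UNCONFIGURED = "unconfigured"
    INACTIVE = "inactive"
    ACTIVE = "active"
    FINALIZED = "finalized"


# (current state, transition) -> next state, primary states only.
TRANSITIONS = {
    (State.UNCONFIGURED, "configure"): State.INACTIVE,
    (State.INACTIVE, "activate"): State.ACTIVE,
    (State.ACTIVE, "deactivate"): State.INACTIVE,
    (State.INACTIVE, "cleanup"): State.UNCONFIGURED,
    (State.UNCONFIGURED, "shutdown"): State.FINALIZED,
    (State.INACTIVE, "shutdown"): State.FINALIZED,
    (State.ACTIVE, "shutdown"): State.FINALIZED,
}


class ManagedNodeModel:
    """Toy model of a managed node with gated output."""

    def __init__(self, name):
        self.name = name
        self.state = State.UNCONFIGURED
        self.published = []

    def trigger(self, transition):
        key = (self.state, transition)
        if key not in TRANSITIONS:
            raise ValueError(
                f"{self.name}: cannot {transition} from {self.state.value}")
        self.state = TRANSITIONS[key]
        return self.state

    def publish(self, msg):
        """Drop output unless the node is active."""
        if self.state is not State.ACTIVE:
            return False
        self.published.append(msg)
        return True


def bring_up(nodes):
    """Configure every node first, then activate them in the given order."""
    for node in nodes:
        node.trigger("configure")
    for node in nodes:
        node.trigger("activate")


driver = ManagedNodeModel("lidar_driver")
localizer = ManagedNodeModel("localization")
print(driver.publish("scan"))      # False: the node exists but is not ready
bring_up([driver, localizer])
print(driver.publish("scan"))      # True: output goes live after activation
```

The two-pass `bring_up` is the point of the sketch: every node finishes configuration before any node is activated, which is the ordering that launching everything at once cannot guarantee.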

Where lifecycle nodes are overkill

Not every node needs lifecycle. A pure visualization helper, a stateless converter, or a simple debug tool may not benefit enough to justify the extra machinery. The point is not to maximize lifecycle adoption. It is to use it where operational state truly matters.

My rule is: if the node’s correctness depends on a preparation phase, external dependencies, resource ownership, or safety-sensitive activation, lifecycle is probably worth it. If it is a tiny stateless data transformer, a regular node may be enough.

A subtle but important insight

Lifecycle is not just about startup. It is an architecture boundary between existence and readiness.

That distinction is gold in production systems. A node process may exist, but not be configured. It may be configured, but not active. It may be deactivated intentionally during a mode switch. Without lifecycle semantics, many systems compress all of that into “the process is running,” which is operationally useless.

5. TF boundaries: spatial truth must have ownership

TF is not a generic messaging convenience. tf2 is a time-aware transform library that maintains relationships between coordinate frames in a tree buffered over time, allowing transformation between frames at desired times. Static transforms have their own correct publication path as well.

This is one of the most important architectural boundaries in ROS 2. A robot’s spatial model should not be fragmented across random topics, duplicated in private conventions, or recomputed inconsistently by multiple nodes.

The first principle: TF is a tree, not a dumping ground

If you put every spatial relation into TF without ownership discipline, you get a tree that technically exists and operationally lies. The question is never “can I publish this transform?” The question is “which subsystem is authoritative for this relationship?”

Good examples:

robot_state_publisher owns kinematic transforms derived from URDF and joint states

localization owns map -> odom or equivalent global drift correction boundary

odometry / base estimator owns odom -> base_link

sensor calibration or static broadcaster owns stable sensor mounting transforms

perception should usually publish detections in a chosen frame, not invent new persistent TF branches for every temporary object

The cleanest TF trees separate stable structure from dynamic state:

static physical mounting relations go to /tf_static

dynamic motion relations go to /tf

ephemeral observations stay as stamped messages unless they truly represent persistent coordinate entities

ROS 2 documentation explicitly distinguishes proper static broadcasting, and tf2’s architecture assumes time-buffered tree relationships, not arbitrary graph chaos.

TF boundaries that scale well

A robust pattern is to define TF ownership at subsystem boundaries:

hardware/kinematics boundary: base_link, links, joints, sensors

local motion boundary: odom to base

global estimation boundary: map to odom

manipulation boundary: tool frames, end effector frames, camera-on-arm frames

external world boundary: AprilTags, docking targets, fiducials, workcells, if persistent enough

This makes frame bugs easier to reason about. If a manipulation pipeline misses the target, you can ask whether the issue is in sensing, calibration, robot kinematics, local motion, or global estimation. If all transforms are published ad hoc by whichever node had the data first, that reasoning collapses.
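Ownership rules like these can be checked mechanically. The sketch below assumes you can obtain a list of (publishing node, parent frame, child frame) tuples, for example from a design document or from a recorded session; how that list is gathered is outside the sketch. `audit_tf_ownership` and the node names in the example are hypothetical.

```python
from collections import defaultdict


def audit_tf_ownership(broadcasts):
    """Flag frames with more than one parent or more than one publisher.

    broadcasts: iterable of (publisher_node, parent_frame, child_frame).
    """
    owners = defaultdict(set)    # child frame -> nodes publishing it
    parents = defaultdict(set)   # child frame -> parent frames seen
    for node, parent, child in broadcasts:
        owners[child].add(node)
        parents[child].add(parent)

    problems = []
    for child in sorted(owners):
        if len(parents[child]) > 1:
            problems.append(
                f"{child}: multiple parents {sorted(parents[child])}")
        if len(owners[child]) > 1:
            problems.append(
                f"{child}: multiple publishers {sorted(owners[child])}")
    return problems


# Hypothetical graph: one authoritative owner per edge, plus one rogue node.
broadcasts = [
    ("localization", "map", "odom"),
    ("base_estimator", "odom", "base_link"),
    ("robot_state_publisher", "base_link", "camera_link"),
    ("visual_odometry", "odom", "base_link"),   # second owner of the same edge
]
print(audit_tf_ownership(broadcasts))
```

Here the audit reports that `base_link` has two publishers, which is exactly the kind of conflict that otherwise surfaces as a jittering robot model in the field.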

What not to do

Do not let multiple nodes publish the same transform authority. Do not hide calibration constants inside perception nodes while also publishing conflicting TF. Do not create “convenience frames” everywhere because a downstream consumer wanted a shortcut. Do not use TF as a general blackboard for semantic state.

TF should describe coordinate relationships. Not mission state. Not object classifications. Not business logic.

6. Node granularity: small enough to evolve, large enough to operate

ROS culture often praises modular nodes with single responsibilities, and the docs describe nodes as modular units with a single purpose. That principle is right, but if taken literally it can lead to micro-node sprawl.

At scale, the node granularity question is not ideological. It is operational.

Too coarse, and one node becomes a god process with tangled concerns, long callback chains, impossible testing, and painful restarts.

Too fine, and the graph explodes into dozens of tiny nodes with serialization overhead, launch complexity, harder debugging, more TF owners than necessary, and unclear fault domains.

A better rule: split by fault domain and rate domain

I recommend splitting nodes primarily along four axes:

1. Fault isolation

If one part crashes, should the rest survive?

Example: camera driver and inference pipeline may deserve separation.

2. Rate characteristics

Do parts run at very different rates or latency constraints?

Example: a 400 Hz control loop should not share callback contention with a 5 Hz planner UI bridge.

3. Resource ownership

Does the node own hardware handles, model memory, or lifecycle state?

Example: controller manager or device drivers should often be clear ownership boundaries.

4. Deployment flexibility

Might you compose these into one process later or run them separately depending on hardware?

ROS 2 composition exists precisely because process layout and node boundaries should be separable concerns. ROS 2 docs describe components as the recommended style for common cases and show composition as a way to combine nodes into different process layouts without changing code.

That last point is key. In ROS 2, a good strategy is often:

design logical nodes around coherent responsibilities

implement them as composable components when practical

decide later whether they should run in one process or several

This lets you get modularity without paying every serialization and process-management cost all the time.

A practical example

For a mobile manipulator, a healthy decomposition could be:

hardware interface / ros2_control

low-level controller manager

state estimation

navigation servers

arm planning/execution server

perception server

mission executive

UI/teleop bridge

observability toolchain

Inside perception, however, you may compose camera rectification, pre-processing, and inference in one process for efficiency, especially when intra-process communication matters. ROS 2 has explicit support for composition and intra-process communication for this reason.

Executor and callback-group reality

Node granularity cannot be discussed honestly without executors. Executors are what invoke callbacks for subscriptions, timers, services, and action servers; callback groups control concurrency and help avoid deadlocks in multi-threaded execution.

Many developers split nodes too early when the real problem is callback scheduling. Others keep too much together and then wonder why a slow service callback starves unrelated work.

The scaling lesson is:

first decide logical ownership

then choose process composition

then choose executor model and callback groups carefully

These are separate architectural decisions, and mature systems treat them that way.
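The starvation effect is easy to see in a small simulation. The sketch below models one thread that runs callbacks to completion in the order they become ready; that is a simplification of how a real single-threaded executor picks work, and `simulate_single_thread` plus the workload numbers are hypothetical. Times are integer microseconds to keep the arithmetic exact.

```python
def simulate_single_thread(jobs):
    """Run callbacks on one thread, non-preemptively, in ready-time order.

    jobs: list of (name, ready_us, duration_us) in integer microseconds.
    Returns {name: delay_us}, the wait between becoming ready and starting.
    """
    clock_us = 0
    delays = {}
    for name, ready_us, duration_us in sorted(jobs, key=lambda job: job[1]):
        start_us = max(clock_us, ready_us)
        delays[name] = start_us - ready_us
        clock_us = start_us + duration_us
    return delays


# Hypothetical workload: a 50 ms service callback and a control tick
# that becomes ready 1 ms after the service callback started.
slow_service = ("slow_service", 0, 50_000)
control_tick = ("control_tick", 1_000, 500)

shared = simulate_single_thread([slow_service, control_tick])
isolated = simulate_single_thread([control_tick])

print("shared executor delay (us):", shared["control_tick"])      # 49000
print("isolated executor delay (us):", isolated["control_tick"])  # 0
```

In the shared case the control tick waits 49 ms behind the service callback; in the isolated case it starts on time. Splitting the node is one way to get the second behavior, but a separate callback group in a multi-threaded executor, or a dedicated executor, can achieve it without changing logical ownership.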

7. The patterns used by advanced stacks

It helps to look at how major ROS 2 stacks are organized. ROS 2 provides a middleware layer for building sophisticated robot applications, with support for real-time communication and scalability. This architecture is widely adopted in the robotics industry, powering solutions in autonomous vehicles, sensor fusion, and navigation systems. As robotics technology moves from prototyping to production, ROS 2 supports the transition to industry-ready deployments across sectors.

Navigation stacks

Nav2 uses action servers for navigation goals, lifecycle nodes for bring-up and recovery, and clearly separated servers for planners, controllers, smoothers, routes, and behaviors. This is not accidental. Navigation is a long-running, cancelable, feedback-rich domain with multiple replaceable planners and controllers. Actions fit the task contract; lifecycle fits operational control.

Manipulation stacks

MoveIt 2 exposes interfaces built on topics, services, and actions. That mix reflects the domain: scene updates and joint states are streaming data, planning requests can behave like bounded service-style calls in some contexts, and execution is naturally action-oriented. Hybrid planning adds another layer where online replanning and execution interact continuously.

Control stacks

ros2_control’s controller manager manages controller lifecycle and hardware access while exposing services to interact with the framework. That is a very instructive pattern: lifecycle and services are complementary, not competing choices. Lifecycle expresses internal operational state; services expose bounded control operations to the rest of the graph.

The general lesson is that mature stacks do not try to force one communication primitive everywhere. They compose primitives according to task semantics and operational needs.

8. Anti-patterns that quietly kill scalability

Anti-pattern 1: service-oriented robotics

A surprising number of ROS 2 systems are built like RPC meshes. One node asks another synchronously for state, which asks another, and so on. This feels controlled because each call has an explicit response, but it creates temporal coupling, hidden dependencies, and poor failure behavior.

Robots live in continuous time. Their architecture should expose evolving state as streams wherever possible, not hide it behind synchronous requests.
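One way to spot an RPC mesh early is to write down which nodes call which with blocking services and measure the result. The sketch below is a hypothetical audit, assuming the call graph has been collected by hand or from code review: `analyze_service_calls` returns the longest synchronous chain and whether any cycle exists, since a cycle of blocking calls is a deadlock candidate.

```python
def analyze_service_calls(calls):
    """Return (longest_chain, cycle_found) for a synchronous call graph.

    calls maps a node name to the nodes it calls with blocking services.
    longest_chain counts calls along the deepest path found.
    """
    cycle_found = False
    memo = {}

    def depth(node, stack):
        nonlocal cycle_found
        if node in stack:
            cycle_found = True      # a blocking call loops back on itself
            return 0
        if node in memo:
            return memo[node]
        best = 0
        for callee in calls.get(node, []):
            best = max(best, 1 + depth(callee, stack | {node}))
        memo[node] = best
        return best

    longest = max((depth(node, frozenset()) for node in calls), default=0)
    return longest, cycle_found


# Hypothetical mesh: each layer blocks on the one below it.
mesh = {
    "ui": ["mission"],
    "mission": ["planner"],
    "planner": ["perception"],
    "perception": [],
}
print(analyze_service_calls(mesh))                      # (3, False)
print(analyze_service_calls({"a": ["b"], "b": ["a"]}))  # cycle detected
```

A chain of three blocking calls means the UI's responsiveness depends on the slowest moment of perception. Replacing the lower links with published state shortens the chain without removing any information.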

Anti-pattern 2: actions used as glorified services

An action without meaningful feedback, cancelation, or long-running behavior is often just a misnamed service. This increases complexity without giving operational value.

Anti-pattern 3: giant “brain” node

The monolithic executive that subscribes to everything, publishes everything, owns all timers, performs planning, talks to hardware, and handles UI is easy to prototype and miserable to maintain.

This node becomes impossible to restart partially, hard to observe, and full of accidental shared state.

Anti-pattern 4: frame ownership ambiguity

If two teams publish overlapping transforms or if calibration lives in hidden code instead of well-defined TF/static TF boundaries, your system may look fine until field conditions expose the inconsistency.

Anti-pattern 5: node-per-trivial-function mania

Breaking everything into tiny nodes can make diagrams look elegant while operations become a nightmare. If a simple processing chain requires eight nodes, four launch files, three namespaces, and a doctoral thesis in remapping, the architecture is probably over-fragmented.

Anti-pattern 6: no lifecycle where startup order matters

Launching hardware drivers, planners, controllers, and estimators as plain nodes with no readiness model often creates race conditions that appear only on real hardware or under CPU pressure.

Anti-pattern 7: no observability model

A robot that logs text but does not define what to bag, what to trace, what to visualize, and how to replay incidents is not production-ready. It is just harder to debug.

Anti-pattern 8: abusing TF for semantics

Publishing transforms for every detected person, pallet, or object class as if TF were a semantic world model quickly becomes unmanageable. Use stamped messages for observations; reserve TF for coordinate relationships with real architectural meaning.

9. Observability: the architecture nobody adds early enough

In robotics, observability is not a luxury. It is the only way to understand failures that arise from timing, concurrency, QoS mismatch, dropped messages, stale transforms, startup sequencing, or operator behavior.

ROS 2 has matured significantly here. Beyond traditional logs and CLI tools, the ecosystem now includes ros2_tracing for low-level runtime analysis, Foxglove for visualization and operational inspection, and rosbag2 with MCAP support and evolving timestamp fidelity. Official docs cover tracing and also position Foxglove explicitly as a visualization and observability tool for ROS 2 data. rosbag2’s MCAP plugin is documented, and Jazzy release notes mention middleware send/receive timestamps in recorded bags, with support in MCAP.

For production systems, ROS 2 offers advanced features such as built-in security and access control, ensuring secure communication between nodes through authentication and encryption. These capabilities are essential for developing sophisticated, reliable, and real-time robotic systems.

What to observe in a scalable system

A good ROS 2 observability model covers four layers:

1. Semantic state

Mission state, current goal, planner outcome, controller mode, fault flags.

2. Dataflow state

Topic rates, dropped messages, queue behavior, action status, service latencies.

3. Temporal behavior

Callback duration, executor contention, scheduling jitter, end-to-end latency.

4. Spatial consistency

TF tree validity, missing transforms, timestamp skew, calibration sanity.

Logs alone mostly cover the first layer. rosbag helps with the first and second. tracing helps with the third. TF introspection and visualization help with the fourth.
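As an example of the dataflow layer, a rate and staleness check needs very little code. The sketch below is a plain-Python model that is fed message timestamps; it does not subscribe to anything, and `TopicRateMonitor`, its thresholds, and the rule that a topic is stale after three missed periods are assumptions made for illustration.

```python
from collections import deque


class TopicRateMonitor:
    """Track message timestamps and classify a topic's health."""

    def __init__(self, expected_hz, tolerance=0.2, window=50):
        self.expected_hz = expected_hz
        self.tolerance = tolerance                 # allowed relative deviation
        self.stale_after_s = 3.0 / expected_hz     # assumed: three missed periods
        self.stamps = deque(maxlen=window)

    def on_message(self, stamp_s):
        self.stamps.append(stamp_s)

    def rate_hz(self):
        if len(self.stamps) < 2:
            return None
        span = self.stamps[-1] - self.stamps[0]
        if span <= 0:
            return None
        return (len(self.stamps) - 1) / span

    def status(self, now_s):
        if not self.stamps or now_s - self.stamps[-1] > self.stale_after_s:
            return "stale"
        rate = self.rate_hz()
        if rate is None:
            return "unknown"
        if abs(rate - self.expected_hz) / self.expected_hz > self.tolerance:
            return "off_rate"
        return "ok"


# Hypothetical 10 Hz topic that publishes ten messages and then stops.
monitor = TopicRateMonitor(expected_hz=10.0)
for i in range(10):
    monitor.on_message(i / 10.0)

print(monitor.status(now_s=0.95))  # ok
print(monitor.status(now_s=5.0))   # stale
```

Publishing the resulting status on a diagnostics topic turns a silent dataflow failure into a recorded, replayable event.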

Why rosbag should be part of the design

rosbag is often treated as a debugging afterthought, but it is really an architectural feature. Topics that are easy to bag and replay are easier to test, easier to simulate, easier to reproduce, and easier to compare across software versions.

If you design key interfaces around topics, you gain:

reproducible field incident capture

offline regression testing

simulation input generation

deterministic comparison of algorithm versions

shared artifacts between robotics, perception, and controls teams

This is a major reason not to hide important state behind services.

Modern rosbag practices

rosbag2 supports MCAP through a storage plugin, and snapshot mode allows in-memory circular buffering until an explicit trigger writes data to disk. That makes it especially useful for incident capture in deployed robots where writing everything all the time is too expensive. Official docs and repo documentation describe snapshot mode, and Humble release documentation highlights it as useful for incident recording.

A practical pattern for field robots is:

continuous lightweight logging

selective topic recording for critical state

snapshot-mode ring buffer for pre-failure context

full bag capture on detected fault or operator trigger

standardized replay scenarios in CI for major regressions

That is how robotics teams move from “we saw something weird once” to “we can reproduce it and compare fixes.”
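The ring-buffer idea behind snapshot recording can be sketched in a few lines. This is a model of the concept only, not rosbag2's implementation or API: `SnapshotBuffer` is a hypothetical class that keeps the most recent messages in memory and hands them over when a trigger fires.

```python
from collections import deque


class SnapshotBuffer:
    """Keep the newest messages in memory and return them on a trigger."""

    def __init__(self, max_messages):
        # A bounded deque silently discards the oldest entry when full.
        self._ring = deque(maxlen=max_messages)

    def record(self, stamp_s, topic, msg):
        self._ring.append((stamp_s, topic, msg))

    def snapshot(self, window_s=None):
        """Return buffered messages, optionally only the last window_s seconds."""
        items = list(self._ring)
        if window_s is not None and items:
            cutoff = items[-1][0] - window_s
            items = [item for item in items if item[0] >= cutoff]
        return items


# Hypothetical run: eight messages arrive, the buffer holds five.
buffer = SnapshotBuffer(max_messages=5)
for t in range(8):
    buffer.record(float(t), "/odom", f"pose_{t}")

# A fault detector or operator button would call snapshot() here.
print([stamp for stamp, _, _ in buffer.snapshot()])              # newest five
print([stamp for stamp, _, _ in buffer.snapshot(window_s=2.0)])  # last 2 s
```

The design trade-off is the buffer size: it fixes both the memory cost and how much pre-failure context survives, so it is worth sizing per topic rather than globally.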

Tracing matters when topics are not enough

There is a whole class of failures that bags do not explain well:

callback starvation

executor contention

service deadlocks

unexpected action latency

middleware scheduling effects

high-frequency jitter

ROS 2 tracing exists exactly for this level of analysis. The official tutorial shows how to trace and analyze callback durations, and executors are explicitly documented as the mechanism that dispatches callbacks across subscriptions, timers, services, and actions.

If your robot has hard latency requirements, tracing should be part of your architecture validation, not a desperate move after deployment.

10. A reference architecture that scales

Here is a pattern I like for advanced robots.

Layer A: hardware and control

drivers

ros2_control hardware interface

controller manager

joint state publishing

actuator safety status

Use lifecycle where hardware setup and activation matter. Use services for bounded administrative control. Publish state and telemetry on topics.

Layer B: estimation and TF

IMU fusion

wheel odometry or visual odometry

localization

robot_state_publisher

static transform publishers

Define explicit TF ownership. Publish transforms only from authoritative sources. Use topics for state estimates and diagnostics.

Layer C: perception

sensor ingestion

synchronization

pre-processing

inference

object tracking

semantic outputs

Compose nodes in-process when performance matters. Publish detections, tracks, and annotated outputs on topics. Avoid stuffing semantics into TF unless the entity is persistent and frame-like.

Layer D: planning and behaviors

Nav2 servers or equivalent

MoveIt 2 execution/planning components

task planner

behavior tree or executive

Use actions for navigation, manipulation, docking, scan routines, recovery behaviors. Use topics for world state. Use services sparingly for reconfiguration or bounded plan queries.

Layer E: mission and interaction

teleop

operator UI

fleet bridge

voice/LLM interface

cloud edge bridge

These nodes should almost never reach directly into low-level hardware by synchronous service chains. They should communicate through explicit topics and actions exposed by the behavior layer.

Layer F: operations

lifecycle manager

diagnostics

topic monitors

TF monitoring

rosbag recorder

snapshot trigger

tracing hooks

visualization

Treat this as a first-class subsystem, not tooling glued on later.

11. The hardest architectural judgment: what should be the API of a subsystem?

A robust ROS 2 subsystem usually exposes three kinds of surface:

state topics: what the subsystem knows or is doing

goal actions: what you can ask it to achieve

admin services: bounded operational controls

Each of these surfaces is discoverable: a node's topics, actions, and services are advertised on the ROS graph, so other nodes and introspection tools can find them and interact with them appropriately.

For example, a docking subsystem might expose:

topics: dock pose estimate, docking state, health, debug metrics

actions: dock, undock, align

services: reload docking map, clear fault, set debug mode

That is a much better contract than either:

“everything is services,” or

“everything is one giant topic soup.”

The best ROS 2 architectures are not the ones with the most nodes or fanciest diagrams. They are the ones whose subsystem APIs mirror how robotics work unfolds in time.

12. Final recommendations

If you want architecture patterns that genuinely scale, I would summarize them like this:

Use topics for the robot’s flowing reality: sensors, estimates, events, telemetry, and state worth recording.

Use services for short, bounded, administrative, or query-like operations.

Use actions for goal-driven behavior that takes time, needs feedback, and may need cancelation.

Use lifecycle nodes wherever readiness, ordered startup, resource ownership, or restartability matter.

Treat TF as the authoritative spatial contract of the robot, with clear ownership boundaries and no semantic abuse.

Choose node granularity based on fault domains, rate domains, ownership, and deployment flexibility, then refine process layout with composition, executors, and callback groups.

Design observability into the architecture: logs, bags, TF inspection, visualization, and tracing each solve different classes of failures.

And most importantly: optimize for systems that can be debugged, replayed, restarted, and evolved. In advanced robotics, that is what “scales” really means.

A robot architecture scales when the 50th node is still understandable, the 5th subsystem owner can reason about boundaries without folklore, the field incident can be replayed from a bag, the startup is deterministic, and the timing bug can be traced instead of guessed. ROS 2, including hardware-accelerated package sets built on it such as NVIDIA's Isaac ROS for Jetson platforms, gives you the primitives for that. The hard part is using each primitive for what it was meant to express.