If you build robots for any length of time, you quickly realize something uncomfortable:

a robot never directly “knows” its own state.

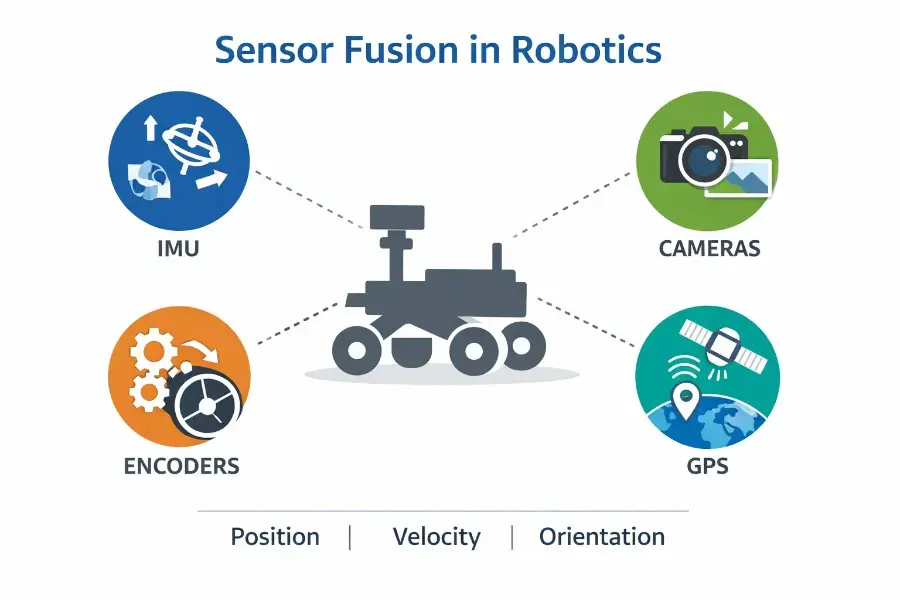

It does not perceive position, orientation, or velocity as ground truth. It only receives fragments of reality.

An IMU gives angular velocity and linear acceleration.

A camera gives visual motion and scene geometry.

Wheel encoders give rotation counts.

GPS gives an outdoor global reference.

None of these sensors tells the full truth on its own.

That is where sensor fusion comes in.

Sensor fusion in robotics is the process of combining multiple imperfect sensor streams into a single, more reliable estimate of the robot’s state. In practice, that usually means estimating pose, velocity, orientation, and sometimes even parts of the environment around the robot.

And this is not just a “nice to have” layer.

Sensor fusion sits at the center of localization, SLAM, navigation, obstacle avoidance, and closed-loop control. If the robot’s estimate of itself is unstable, delayed, noisy, or drifting, everything above it starts to degrade.

So the real job of sensor fusion is not “combining sensor readings.”

The real job is state estimation under uncertainty.

Why Sensor Fusion Matters in Robotics

Every sensor lies in its own way.

That is not a bug. That is reality.

IMUs drift.

Cameras fail in bad lighting.

Encoders slip.

GPS breaks indoors, under trees, near tall buildings, or anywhere multipath becomes ugly.

If your robot depends too much on a single sensor, it will eventually become confident and wrong.

Sensor fusion works because these weaknesses are often complementary.

When the camera loses track, the IMU still gives short-term motion continuity.

When inertial drift starts growing, vision can pull the estimate back toward reality.

When a wheeled robot moves slowly and cleanly on flat ground, encoders are often extremely useful.

When the robot goes outdoors and needs a global reference, GPS becomes critical.

So the goal is not to find the “best” sensor.

The goal is to build an estimator whose blind spots do not all line up at the same time.

That is what makes a robot operationally reliable.

What Sensor Fusion Actually Means

The phrase “sensor fusion” is often explained too loosely, as if the robot simply averages sensor values together.

That is not how serious systems work.
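Take the simplest static case, where two sources each measure the same quantity once. A principled estimator still weights each source by how certain it is instead of averaging them equally. This minimal sketch assumes two independent Gaussian estimates of a 1D position. The function name and the numbers are hypothetical.

```python
def fuse_gaussian(mean_a, var_a, mean_b, var_b):
    """Fuse two independent Gaussian estimates of the same quantity.

    Each source is weighted by its inverse variance (its information),
    so the more certain source dominates. The fused variance is always
    smaller than either input variance.
    """
    info_a = 1.0 / var_a
    info_b = 1.0 / var_b
    fused_var = 1.0 / (info_a + info_b)
    fused_mean = fused_var * (info_a * mean_a + info_b * mean_b)
    return fused_mean, fused_var


# Hypothetical example: wheel odometry says x = 10.0 m (variance 0.04 m^2),
# a GPS fix says x = 11.0 m (variance 1.0 m^2).
naive = (10.0 + 11.0) / 2.0
fused_mean, fused_var = fuse_gaussian(10.0, 0.04, 11.0, 1.0)
print(f"naive average: {naive:.3f} m")
print(f"weighted:      {fused_mean:.3f} m (variance {fused_var:.4f} m^2)")
```

The naive average lands at 10.5 m, pulled halfway toward the noisy fix. The inverse-variance estimate stays near 10.04 m and reports a variance smaller than either input. A Kalman filter update performs this same computation, generalized to vectors and combined with a motion model.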

A robot typically defines an internal hidden state such as:

- orientation

- position

- velocity

- IMU biases

- sometimes calibration parameters

- sometimes landmarks or map states

The estimator then does two things repeatedly:

- Predict how the state evolves over time using motion dynamics

- Correct that prediction using incoming sensor measurements

So instead of asking:

“Which sensor is right?”

the system asks a better question:

“What is the most likely robot state given the motion model, the measurements, and the uncertainty of each source?”

That subtle shift matters a lot.

Because in robotics, the challenge is rarely missing data alone.

It is noisy, delayed, asynchronous, partially wrong data arriving from different physical processes at different rates.

Sensor fusion is how we turn that mess into a usable belief.

What Each Sensor Contributes

IMU: The Short-Term Backbone

The IMU is usually the backbone of modern fusion pipelines.

Gyroscopes measure angular velocity.

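Accelerometers measure specific force, meaning acceleration relative to free fall. That is why a stationary accelerometer still reads about 9.81 m/s² pointing up.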

The big advantage is that IMUs run fast, often hundreds of hertz or more, and they do not need environmental features to exist. They are always producing motion information.

That makes them perfect for propagating the robot state between slower sensors.

But there is a catch, and it is a brutal one:

IMUs drift.

Even small bias errors grow quickly when integrated over time. Orientation can drift, velocity can drift, and position can become useless surprisingly fast if nothing corrects it.
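You can see this by dead-reckoning a stationary robot whose accelerometer has a small constant bias. This sketch assumes a hypothetical 0.01 m/s² bias and simple Euler integration at 200 Hz. Velocity error grows linearly with time, and position error grows roughly as b·t²/2, which already reaches about 18 m after one minute.

```python
def integrate_constant_bias(bias, dt, duration):
    """Dead-reckon a stationary robot whose accelerometer has a constant bias.

    The true acceleration is zero, so every bit of velocity and position
    produced here is pure error. Returns (velocity_error, position_error).
    """
    velocity = 0.0
    position = 0.0
    steps = int(round(duration / dt))
    for _ in range(steps):
        # Euler integration: the bias leaks into velocity every step,
        # and the accumulated velocity error leaks into position.
        velocity += bias * dt
        position += velocity * dt
    return velocity, position


bias = 0.01  # m/s^2, a hypothetical small accelerometer bias
for seconds in (1, 10, 60):
    v_err, p_err = integrate_constant_bias(bias, dt=0.005, duration=seconds)
    print(f"after {seconds:>2} s: velocity error {v_err:.3f} m/s, position error {p_err:.2f} m")
```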

So the IMU is excellent for short-term continuity, but terrible as a stand-alone long-term truth source.

That is why inertial sensing is powerful only when fused with other modalities.

Cameras: Geometry From the World

Cameras provide something the IMU can never produce by itself:

environmental structure.

A camera can observe landmarks, parallax, scene geometry, and visual motion. That makes it one of the most powerful sensing modalities for visual odometry, SLAM, and mapping.

In many robots, vision is what stops inertial drift from exploding.

But vision has its own fragility.

Cameras want:

- enough texture

- enough light

- enough overlap between frames

- scenes that are not too reflective or transparent

- a world that is not fully dynamic

Once those assumptions break, visual estimation can degrade fast.

Featureless corridors, repetitive patterns, glass, motion blur, fast lighting changes, or crowds of moving objects can all damage camera-based estimation.

So cameras are incredibly informative, but only when the world cooperates enough.

Wheel Encoders: Great Until They Are Not

Wheel encoders are the classic workhorse of ground robots.

They are cheap, fast, simple, and often surprisingly effective over short distances.

On a well-behaved indoor floor, encoder odometry can feel amazing.

But encoder odometry is still dead reckoning.

It assumes that wheel rotation maps cleanly to body motion.

That assumption breaks under:

- wheel slip

- skidding

- backlash

- uneven terrain

- tire deformation

- aggressive turning

- rapid acceleration or braking

This is why encoders often look fantastic in the lab and much worse in the field.

Their main weakness is not random noise.

It is model mismatch.

The wheels turned, yes.

But the robot body did not necessarily move the way the kinematic model assumed.

That is why encoder error accumulates with distance.
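The sketch below shows this for a differential-drive robot. It assumes a hypothetical 0.5 m track width and a 1% traction loss on the right wheel. The encoders report straight-line motion, but the true body slowly curves, so the gap between the two keeps growing with distance traveled.

```python
import math


def diff_drive_step(x, y, theta, d_left, d_right, track_width):
    """Advance a planar pose using differential-drive wheel odometry.

    d_left / d_right are the distances each wheel rolled (encoder ticks
    already converted to meters). The model assumes no slip: the body
    moves exactly as the wheel rotation implies.
    """
    d_center = 0.5 * (d_left + d_right)
    d_theta = (d_right - d_left) / track_width
    # Midpoint heading approximates the arc better than the start heading.
    x += d_center * math.cos(theta + 0.5 * d_theta)
    y += d_center * math.sin(theta + 0.5 * d_theta)
    theta += d_theta
    return x, y, theta


def drive_straight(total_distance, step, track_width, slip_right=0.0):
    """Compare encoder odometry against the true pose when one wheel slips.

    The encoders report the full rotation of both wheels, but only
    (1 - slip_right) of the right wheel's rotation becomes body motion.
    Returns the final position error of the encoder estimate in meters.
    """
    est = (0.0, 0.0, 0.0)
    truth = (0.0, 0.0, 0.0)
    steps = int(round(total_distance / step))
    for _ in range(steps):
        # What the encoders report: both wheels rolled `step` meters.
        est = diff_drive_step(*est, step, step, track_width)
        # What actually happened: the right wheel lost some traction.
        truth = diff_drive_step(*truth, step, step * (1.0 - slip_right), track_width)
    return math.hypot(est[0] - truth[0], est[1] - truth[1])


for distance in (1.0, 10.0, 50.0):
    err = drive_straight(distance, step=0.01, track_width=0.5, slip_right=0.01)
    print(f"{distance:>5.1f} m driven -> {err:.3f} m of odometry error")
```

The slip also corrupts heading, so in this example the position error grows faster than linearly with distance. The random encoder noise, which is absent here, is not the main problem.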

GPS and GNSS: The Global Anchor

Strictly speaking, GPS is only one constellation. The broader term is GNSS, which includes GPS and other global navigation satellite systems.

But in robotics, people often say “GPS” as shorthand, and that shorthand is fine in many practical discussions.

What GPS contributes is something dead reckoning never can:

an absolute Earth-referenced position estimate outdoors.

That makes it extremely valuable for long-range navigation, outdoor localization, and any system that needs a globally meaningful frame.

But GPS is not magic.

It degrades under:

- poor sky visibility

- urban canyons

- multipath reflections

- tunnels

- foliage

- indoor environments

- jamming, spoofing, and other GNSS-denied conditions

So GPS is excellent at preventing unbounded global drift outdoors, but terrible as a universal solution.

In real robots, it is most useful when fused with local smooth sources like IMU, visual odometry, or wheel odometry.

Why Sensor Fusion Works So Well

The whole reason sensor fusion is effective is that the failure modes are different.

That is the key insight.

The IMU gives continuity.

Vision gives geometric correction.

Encoders give practical short-term motion cues on wheeled robots.

GPS gives a global outdoor anchor.

Each one fails.

But they do not usually fail in the exact same way, at the exact same time, for the exact same reason.

And that is enough to build something much stronger than any single modality alone.

Good fusion is not about stacking sensors for marketing.

It is about combining information sources whose weaknesses are misaligned.

Sensor Fusion as a State Estimation Problem

This is where robotics gets more interesting than the usual simplified explanations suggest.

Sensor fusion is fundamentally a probabilistic state estimation problem.

That means:

- the robot state is hidden

- the measurements are noisy

- the dynamics are imperfect

- the estimator maintains a belief, not certainty

This belief gets updated continuously as new measurements arrive.

In plain terms, the robot is always doing some version of:

- “Here is where I think I am now”

- “Here is how I expect that state to evolve”

- “Here is how the new sensor data changes that belief”

That is why sensor fusion sits so close to localization, odometry, and SLAM.

They are all different ways of solving the same core problem:

estimating state from incomplete evidence.

From Kalman Filters to Factor Graphs

Filter-Based Sensor Fusion

The classical real-time approach is filter-based.

This is the world of:

- EKF

- UKF

- related recursive estimators

The logic is simple:

- predict the next state

- compare prediction against measurements

- update the estimate

- repeat

This is still a very practical architecture, especially for production systems that need deterministic online performance and moderate implementation complexity.

In many real robots, this is the right starting point.

It is simple enough to deploy, understandable enough to debug, and strong enough for a wide range of mobile robotics use cases.
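The predict, compare, update loop can be sketched as a minimal linear Kalman filter. This toy version assumes a one-dimensional robot with a constant-velocity motion model and direct position measurements. The class name, noise values, and measurements are all hypothetical. An EKF follows the same structure but linearizes nonlinear motion and measurement models around the current estimate at each step.

```python
class ConstantVelocityKF:
    """Minimal linear Kalman filter for 1D position and velocity.

    State x = [position, velocity]; covariance P is a 2x2 nested list.
    Only position is measured directly.
    """

    def __init__(self, position, velocity, p_var, v_var, accel_noise):
        self.x = [position, velocity]
        self.P = [[p_var, 0.0], [0.0, v_var]]
        self.q = accel_noise  # variance of unmodeled acceleration, (m/s^2)^2

    def predict(self, dt):
        """Propagate the state with the constant-velocity motion model."""
        p, v = self.x
        (p00, p01), (p10, p11) = self.P
        # x = F x with F = [[1, dt], [0, 1]]
        self.x = [p + v * dt, v]
        # Process noise Q for piecewise-constant white acceleration
        q00 = self.q * dt**4 / 4.0
        q01 = self.q * dt**3 / 2.0
        q11 = self.q * dt**2
        # P = F P F^T + Q, expanded for the 2x2 case
        self.P = [
            [p00 + dt * (p01 + p10) + dt * dt * p11 + q00, p01 + dt * p11 + q01],
            [p10 + dt * p11 + q01, p11 + q11],
        ]

    def update(self, z, r):
        """Correct the prediction with a position measurement z of variance r."""
        p, v = self.x
        (p00, p01), (p10, p11) = self.P
        innovation = z - p           # measurement minus prediction (H = [1, 0])
        s = p00 + r                  # innovation variance H P H^T + R
        k0, k1 = p00 / s, p10 / s    # Kalman gain K = P H^T / s
        self.x = [p + k0 * innovation, v + k1 * innovation]
        # P = (I - K H) P
        self.P = [
            [(1.0 - k0) * p00, (1.0 - k0) * p01],
            [p10 - k1 * p00, p11 - k1 * p01],
        ]
        return innovation


kf = ConstantVelocityKF(position=0.0, velocity=0.0, p_var=1.0, v_var=1.0, accel_noise=0.1)
# Hypothetical noisy position fixes of a robot moving at roughly 1 m/s, one per second
for t, z in enumerate([1.1, 1.9, 3.05, 4.0, 4.9], start=1):
    kf.predict(dt=1.0)    # predict the next state
    kf.update(z, r=0.25)  # compare against the measurement and update
    print(f"t={t} s  position={kf.x[0]:.2f} m  velocity={kf.x[1]:.2f} m/s")
```

Velocity is never measured, yet the filter recovers it, because the motion model links consecutive positions through the off-diagonal covariance terms.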

Smoothing and Factor Graph Optimization

But modern high-performance robotics increasingly moves beyond pure filtering.

Why?

Because a filter compresses the past into its current estimate and cannot revisit earlier decisions, while a smoother keeps a window of past states and re-optimizes them as new information arrives.

That is a huge advantage.

A factor graph formulation represents states as variables and sensor relationships as constraints. This allows the system to jointly optimize multiple states together rather than committing too early to a single local estimate.

This matters a lot in visual-inertial odometry and SLAM.

Why?

Because high-rate inertial data, feature tracks, wheel constraints, loop closures, and GPS updates often interact in ways that are better handled through optimization than through a purely recursive filter update.

That is one reason modern visual-inertial systems can be both accurate and robust.
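To make the smoothing idea concrete, here is a toy one-dimensional pose chain solved as weighted linear least squares. A factor graph reduces to exactly this when every factor is linear and Gaussian. The helper names and numbers are hypothetical. Real systems use nonlinear solvers instead of a hand-written dense solver, for example Gauss-Newton or Levenberg-Marquardt in libraries such as GTSAM or Ceres.

```python
def solve_linear(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting (small dense systems)."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def optimize_chain(num_poses, factors):
    """Jointly estimate 1D poses from linear factors via weighted least squares.

    Each factor is (coeffs, measurement, sigma), where coeffs maps a pose
    index to its coefficient in the residual sum(c_i * x_i) - measurement.
    Solving the normal equations (A^T W A) x = A^T W b gives the
    maximum-likelihood estimate under Gaussian noise.
    """
    h = [[0.0] * num_poses for _ in range(num_poses)]  # information matrix A^T W A
    g = [0.0] * num_poses                              # right-hand side A^T W b
    for coeffs, measurement, sigma in factors:
        w = 1.0 / sigma**2
        for i, ci in coeffs.items():
            g[i] += w * ci * measurement
            for j, cj in coeffs.items():
                h[i][j] += w * ci * cj
    return solve_linear(h, g)


# Hypothetical example: odometry says each step moved 1.0 m,
# but an absolute fix at the last pose reports 3.3 m.
factors = [
    ({0: 1.0}, 0.0, 0.01),          # prior: x0 = 0
    ({0: -1.0, 1: 1.0}, 1.0, 0.1),  # odometry: x1 - x0 = 1.0
    ({1: -1.0, 2: 1.0}, 1.0, 0.1),  # odometry: x2 - x1 = 1.0
    ({2: -1.0, 3: 1.0}, 1.0, 0.1),  # odometry: x3 - x2 = 1.0
]
print("odometry only:", [round(x, 3) for x in optimize_chain(4, factors)])
factors.append(({3: 1.0}, 3.3, 0.1))  # absolute (GPS-like) factor on x3
print("with fix:     ", [round(x, 3) for x in optimize_chain(4, factors)])
```

When the absolute factor arrives, every pose in the chain moves, not just the last one. A filter would only have corrected its current state. The smoother spreads the 0.3 m discrepancy across the whole trajectory.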

Loosely Coupled vs Tightly Coupled Fusion

This is one of the most important architectural choices in robotics.

Loosely Coupled Fusion

In a loosely coupled system, a subsystem solves part of the estimation first.

For example:

- the camera stack outputs visual odometry

- the fusion layer later combines that visual odometry with IMU, wheel odometry, or GPS

This is easier to implement and often easier to integrate.

But it throws away some raw information.

Tightly Coupled Fusion

In a tightly coupled system, rawer measurements enter the same estimator together.

That can include:

- feature tracks from cameras

- preintegrated IMU increments

- wheel motion constraints

- GPS factors

This is harder to build.

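But it usually extracts more information and degrades more gracefully when one sensing modality becomes weak rather than failing outright. A camera that tracks only a handful of features, for example, can still contribute useful constraints even when it could not support a standalone visual odometry solution.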

That is why tightly coupled systems are often closer to the state of the art.

They are more painful to engineer, but more powerful when conditions get messy.

A Practical Sensor Fusion Architecture for Real Robots

For many robots, the cleanest mental model is this:

separate local smoothness from global accuracy.

That distinction is incredibly important.

A controller wants continuity.

It hates jumps.

But operators, maps, and mission planners often need global consistency.

So a good robotics architecture often uses:

- one local estimator for smooth short-term odometry

- one global estimator for world alignment

The local estimator typically fuses things like:

- IMU

- wheel encoders

- visual odometry

The global estimator then adds things like:

- GPS

- landmarks

- loop closures

- absolute pose updates

This separation is not academic.

It is one of the most practical design patterns in mobile robotics.

Because a robot can tolerate local drift for a while.

What it cannot tolerate is a pose estimate that jumps unpredictably during control.
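Here is a minimal planar sketch of that split, loosely following the map → odom → base_link frame convention described in ROS REP 105. The class and method names are hypothetical. A global correction updates a separate map-from-odom offset instead of overwriting the local pose. The pose the controller sees therefore never jumps, while the map-frame pose snaps to the global fix.

```python
import math


def compose(a, b):
    """Compose two planar poses (x, y, theta): apply b in the frame of a."""
    ax, ay, at = a
    bx, by, bt = b
    return (
        ax + math.cos(at) * bx - math.sin(at) * by,
        ay + math.sin(at) * bx + math.cos(at) * by,
        at + bt,
    )


def inverse(a):
    """Inverse of a planar pose, so that compose(a, inverse(a)) is the identity."""
    ax, ay, at = a
    c, s = math.cos(at), math.sin(at)
    return (-c * ax - s * ay, s * ax - c * ay, -at)


class TwoLayerLocalizer:
    """Hypothetical sketch of separating local smoothness from global accuracy.

    odom_pose: continuous pose from the local estimator (IMU, wheels, VO).
    map_from_odom: offset maintained by the global estimator (GPS, loop closures).
    Controllers consume odom_pose; planners and operators consume map_pose().
    """

    def __init__(self):
        self.odom_pose = (0.0, 0.0, 0.0)
        self.map_from_odom = (0.0, 0.0, 0.0)

    def local_step(self, delta):
        # The local estimator only integrates small increments, so it never jumps.
        self.odom_pose = compose(self.odom_pose, delta)

    def global_fix(self, map_pose_measured):
        # Absorb the global correction into the map->odom offset instead of
        # overwriting odom_pose, keeping the control-facing estimate continuous.
        self.map_from_odom = compose(map_pose_measured, inverse(self.odom_pose))

    def map_pose(self):
        return compose(self.map_from_odom, self.odom_pose)


loc = TwoLayerLocalizer()
for _ in range(10):
    loc.local_step((0.1, 0.0, 0.0))  # drive 1 m forward in 10 steps
loc.global_fix((1.2, 0.3, 0.05))     # hypothetical GPS or loop-closure pose in the map frame
print("odom:", tuple(round(v, 3) for v in loc.odom_pose))
print("map: ", tuple(round(v, 3) for v in loc.map_pose()))
```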

Where Sensor Fusion Breaks in Practice

This is the part many articles skip.

Sensor fusion sounds elegant on whiteboards.

In deployment, it becomes very unforgiving.

1. Observability Problems

Some states simply cannot be recovered from a given sensor suite and motion profile.

If the robot does not move in informative ways, some parameters remain unobservable.

That means the math cannot recover them, no matter how much optimism you inject into the covariance matrix.

This is a brutal lesson for real systems.

You cannot estimate what the sensors and the motion never reveal.
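For a linear system, the textbook check is the rank of the observability matrix built from the motion model F and the measurement model H. This sketch assumes a toy 1D constant-velocity model with state [position, velocity]. Measuring only velocity, as wheel speed would, leaves absolute position unobservable. Measuring position makes both states recoverable. Real visual-inertial systems need a nonlinear analysis, but the intuition carries over.

```python
def observability_rank_2x2(F, H):
    """Rank of the observability matrix O = [H; H F] for a 2-state linear system.

    F is the 2x2 state-transition matrix and H a single-row measurement
    matrix (nested lists). Rank 2 means both states can be recovered from
    the measurement sequence; rank 1 means one direction is invisible.
    """
    row0 = H
    row1 = [H[0] * F[0][0] + H[1] * F[1][0], H[0] * F[0][1] + H[1] * F[1][1]]
    det = row0[0] * row1[1] - row0[1] * row1[0]
    if abs(det) > 1e-12:
        return 2
    return 1 if any(abs(v) > 1e-12 for v in row0 + row1) else 0


dt = 0.1
F = [[1.0, dt], [0.0, 1.0]]                   # constant-velocity model
print(observability_rank_2x2(F, [0.0, 1.0]))  # velocity only -> 1
print(observability_rank_2x2(F, [1.0, 0.0]))  # position -> 2
```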

2. The World Is Not Gaussian

Real robotics data is ugly.

Wheels slip.

People move through the frame.

Forklifts block landmarks.

GPS jumps because of reflections.

Terrain changes.

Weather changes.

Noise characteristics shift.

A robot that assumes Gaussian, perfectly stationary noise will eventually become confidently wrong.

That is why robust estimation matters so much.

Not because robustness sounds sophisticated, but because the real world refuses to stay inside the assumptions of neat textbook models.
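One common robustness tool is innovation gating. Before fusing a measurement, the filter compares how far the measurement falls from the prediction against the spread it expects. This sketch assumes scalar Gaussian models and uses the 95% chi-square threshold for one degree of freedom, about 3.84. The function name and numbers are hypothetical.

```python
CHI2_95_1DOF = 3.841  # 95% quantile of the chi-square distribution, 1 degree of freedom


def gate_measurement(predicted, predicted_var, measured, meas_var, threshold=CHI2_95_1DOF):
    """Return True if a scalar measurement is consistent with the prediction.

    The normalized innovation squared (NIS) y^2 / S compares the innovation y
    with its expected variance S. Under the Gaussian assumption it follows a
    chi-square distribution, so large values flag outliers such as a
    multipath GPS jump.
    """
    innovation = measured - predicted
    s = predicted_var + meas_var
    nis = innovation * innovation / s
    return nis <= threshold


# Hypothetical: the filter predicts x = 10.0 m (variance 0.5), GPS variance is 1.0.
print(gate_measurement(10.0, 0.5, 11.0, 1.0))  # plausible fix -> True
print(gate_measurement(10.0, 0.5, 25.0, 1.0))  # 15 m multipath jump -> False
```

Gating makes a hard accept or reject decision. Robust cost functions such as Huber or Cauchy losses take a softer approach: they down-weight large residuals instead of discarding them, and they are common in optimization-based back ends.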

3. Timing, Calibration, and Frame Mistakes

In practice, sensor fusion failures are often not caused by “bad algorithms.”

They are caused by systems engineering mistakes.

Examples:

- bad extrinsic calibration

- wrong frame conventions

- timestamp offsets

- asynchronous data issues

- poor synchronization

- incorrect sensor mounting assumptions

This is one of the least glamorous truths in robotics:

a mediocre algorithm with excellent calibration and timing often beats a brilliant algorithm with broken integration.

If your timestamps are wrong, your math cannot save you.

If your frames are inconsistent, your filter will lie with confidence.

If your extrinsics drift or were never accurate, the entire fusion stack becomes fragile.
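Here is one concrete timing failure. If a measurement is fused at its arrival time instead of its capture time, the robot has already moved on. A common remedy is to keep a short history of estimated poses and interpolate the pose at the measurement's capture timestamp. This 1D sketch assumes a hypothetical robot moving at 2 m/s with 50 ms of sensor latency. Using the arrival time introduces about 0.1 m of error before any estimation algorithm even runs.

```python
from bisect import bisect_left


def interpolate_position(buffer, t):
    """Linearly interpolate a buffered 1D position at time t.

    buffer is a list of (timestamp, position) pairs sorted by timestamp,
    such as recent outputs of the local estimator. Returns None when t lies
    outside the buffered window instead of silently extrapolating.
    """
    times = [stamp for stamp, _ in buffer]
    if not buffer or t < times[0] or t > times[-1]:
        return None
    i = bisect_left(times, t)
    if times[i] == t:
        return buffer[i][1]
    (t0, p0), (t1, p1) = buffer[i - 1], buffer[i]
    alpha = (t - t0) / (t1 - t0)
    return p0 + alpha * (p1 - p0)


speed = 2.0  # m/s, hypothetical
buffer = [(k * 0.01, speed * k * 0.01) for k in range(101)]  # 100 Hz pose history over 1 s
capture_time, arrival_time = 0.500, 0.550                   # 50 ms of hypothetical latency
at_capture = interpolate_position(buffer, capture_time)
at_arrival = interpolate_position(buffer, arrival_time)
print(f"pose error from using arrival time: {at_arrival - at_capture:.3f} m")
```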

Sensor Fusion in Different Types of Robots

Sensor Fusion for AMRs and Warehouse Robots

In indoor mobile robots, sensor fusion is what turns a rolling platform into something predictable.

Encoders and IMUs provide the local backbone.

Cameras or lidar-based odometry may correct drift.

GPS is usually unavailable.

The practical result is not just better localization on paper.

It means:

- tighter docking

- smoother path tracking

- fewer navigation surprises

- more stable closed-loop control

That is the difference between a robot that demos well and a robot that behaves well all day.

Sensor Fusion for Outdoor Robots and Autonomous Vehicles

Outdoor robots have a different problem.

They need local smoothness and global alignment at the same time.

That is why outdoor stacks often combine:

- IMU

- cameras

- wheel odometry

- GPS/GNSS

- sometimes lidar

The goal is not just localization.

The goal is graceful degradation when GPS becomes intermittent or visual tracking weakens.

That is where multi-sensor fusion becomes mission-critical.

Sensor Fusion for Drones

For drones, visual-inertial fusion is often the core answer.

Why?

Because the sensor suite is lightweight, information-dense, and practical.

A camera plus an IMU can go extremely far in aerial robotics, AR/VR-style motion tracking, and compact autonomous systems.

For many flying robots, that pair is the minimum serious estimation stack.

Sensor Fusion for Legged Robots

Legged robots create a very different estimation problem.

There is no clean wheel model.

Contacts are intermittent.

The body oscillates.

The dynamics are strongly nonlinear.

In these systems, state estimation often relies on combinations of:

- IMU

- joint kinematics

- contact sensing

- vision

- lidar

- sometimes GPS outdoors

Here, sensor fusion is not just helpful.

It is one of the main reasons locomotion remains stable at all.

Sensor Fusion in GPS-Denied Environments

Underground robots, indoor drones, inspection systems, underwater platforms, and search-and-rescue robots often cannot depend on GPS.

In those environments, fusion is not just an enhancement layer.

It is the only path to usable navigation.

That is exactly why visual-inertial odometry, contact-aided estimation, and tightly integrated SLAM systems matter so much.

They allow the robot to navigate even when external positioning infrastructure disappears.

What the State of the Art Is Shifting Toward

Several trends are becoming increasingly clear in robotics.

1. Tighter Integration

Modern systems are moving from loosely coupled pipelines toward tightly integrated estimators that combine rawer measurements more directly.

That increases implementation complexity, but usually improves robustness and information use.

2. Online Calibration

Calibration can no longer be treated as something done once in the lab and forgotten forever.

Real robots experience:

- temperature shifts

- vibration

- mechanical wear

- mounting changes

- timing offsets

So modern systems increasingly estimate calibration online instead of assuming it stays frozen forever.
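One of the simplest forms of online calibration adds the parameter to the filter state instead of freezing it. This sketch assumes a planar robot with a gyro that has a constant bias, plus a slower absolute heading sensor running ten times less often than the gyro (hypothetical: for example visual heading or a magnetometer). The function name, noise levels, and rates are illustrative only. The same state-augmentation idea extends to extrinsics and time offsets, although those are nonlinear and need an EKF or an optimizer.

```python
import random


def estimate_gyro_bias(true_bias, steps=2000, dt=0.01, seed=0):
    """Estimate a constant gyro bias online by augmenting the filter state.

    State x = [heading, bias]. The simulated gyro reads true_rate + true_bias
    + noise; a slower absolute heading sensor corrects the heading every 10
    steps. Because the bias shapes how heading drifts between corrections,
    the filter can infer it. Returns the final bias estimate in rad/s.
    """
    rng = random.Random(seed)
    true_heading, true_rate = 0.0, 0.2  # rad, rad/s
    x = [0.0, 0.0]                      # estimated [heading, bias]
    P = [[0.01, 0.0], [0.0, 0.01]]
    q_heading, q_bias = 1e-6, 1e-10     # process noise per step (hypothetical)
    r_heading = 0.02**2                 # heading sensor variance, rad^2
    for k in range(steps):
        true_heading += true_rate * dt
        gyro = true_rate + true_bias + rng.gauss(0.0, 0.01)
        # Predict: integrate the bias-corrected gyro; F = [[1, -dt], [0, 1]].
        x[0] += (gyro - x[1]) * dt
        p00, p01, p10, p11 = P[0][0], P[0][1], P[1][0], P[1][1]
        P = [
            [p00 - dt * (p01 + p10) + dt * dt * p11 + q_heading, p01 - dt * p11],
            [p10 - dt * p11, p11 + q_bias],
        ]
        if k % 10 == 9:
            # Update with an absolute heading measurement; H = [1, 0].
            z = true_heading + rng.gauss(0.0, 0.02)
            y = z - x[0]
            s = P[0][0] + r_heading
            k0, k1 = P[0][0] / s, P[1][0] / s
            x = [x[0] + k0 * y, x[1] + k1 * y]
            P = [
                [(1 - k0) * P[0][0], (1 - k0) * P[0][1]],
                [P[1][0] - k1 * P[0][0], P[1][1] - k1 * P[0][1]],
            ]
    return x[1]


print(f"estimated gyro bias: {estimate_gyro_bias(true_bias=0.05):.4f} rad/s (true 0.0500)")
```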

3. More Robustness to Real-World Drift

The next frontier is not just raw accuracy.

It is resilience under distribution shift.

In other words:

can the estimator stay sane when the environment stops behaving like the data it was tuned on?

That is the real question.

And it matters more than benchmark numbers alone.

4. Learning-Augmented Fusion

The future is probably not pure end-to-end replacement of model-based estimation.

A more realistic direction is hybrid systems where learning helps with:

- denoising

- slip estimation

- calibration correction

- noise adaptation

- feature quality prediction

while a model-based estimator still carries the state.

That hybrid approach preserves physical structure while improving the weak points of classical modeling.

And honestly, that is probably the most practical direction for robotics.

What Sensor Fusion Can and Cannot Do

Sensor fusion can make a robot dramatically more reliable.

But it cannot create information from nothing.

It cannot make unobservable directions observable just by being confident.

It cannot compensate for bad timestamps with elegant math.

It cannot turn a slipping robot on loose terrain into a precision metrology instrument just because an EKF is running.

And it absolutely cannot guarantee robustness just because more sensors were added.

That last point matters a lot.

More sensors do not automatically mean better robotics.

More sensors also mean:

- more calibration

- more synchronization

- more compute

- more integration complexity

- more failure modes

So the best sensor fusion architecture is rarely the one with the longest sensor list.

It is the one that closes the specific failure modes your robot actually has.

How to Choose the Right Sensor Fusion Stack

The right fusion stack depends on the robot and the mission.

For a small indoor wheeled robot, IMU plus wheel encoders plus a solid local estimator may be enough.

For a drone, camera plus IMU is often the real core.

For a legged robot, contact and kinematics may matter just as much as vision.

For an outdoor field robot or autonomous vehicle, GPS or another global reference becomes essential.

That is why sensor fusion is not one algorithm.

It is a design discipline.

It is the discipline of choosing:

- the right sensing information

- the right estimator architecture

- the right assumptions

- the right failure budget

for the environment the robot actually lives in.

Final Thoughts: Why Sensor Fusion Is So Important

The real promise of sensor fusion is not perfect truth.

Robots do not operate on perfect truth.

They operate on belief under uncertainty.

What sensor fusion gives them is something much more valuable than theoretical perfection:

a state estimate reliable enough to keep moving, correcting, and surviving in the real world.

As calibration improves, robust optimization gets better, invariant estimation matures, and learning augments the rough edges of classical pipelines, robots will depend less on any single sensing modality and more on resilient multi-sensor belief systems.

That is the real future here.

Not a robot that sees everything perfectly.

A robot that stays operationally trustworthy even when lighting changes, wheels slip, GPS drops out, and the world becomes inconvenient.

That is what sensor fusion is really for.

FAQ: Sensor Fusion in Robotics

What is sensor fusion in robotics?

Sensor fusion in robotics is the process of combining data from multiple sensors, such as IMUs, cameras, wheel encoders, and GPS, to estimate the robot’s state more accurately and robustly than any single sensor alone.

Why do robots need sensor fusion?

Because no sensor is reliable in all conditions. IMUs drift, cameras fail in poor lighting, encoders slip, and GPS breaks in GNSS-challenging environments. Fusion helps compensate for those weaknesses.

Is sensor fusion the same as SLAM?

Not exactly. Sensor fusion is the broader estimation process of combining measurements to infer state. SLAM is a specific class of problems where the robot estimates both its own state and a map of an unknown environment.

What sensors are commonly used in robot sensor fusion?

The most common ones are IMUs, cameras, wheel encoders, GPS/GNSS, lidar, joint encoders, contact sensors, and sometimes magnetometers.

What is the difference between GPS and GNSS in robotics?

GNSS is the broader term for satellite navigation systems. GPS is one constellation inside that broader category. In practice, many robotics engineers still say “GPS” informally.

What is the difference between loosely coupled and tightly coupled fusion?

Loosely coupled fusion combines subsystem outputs, such as visual odometry plus IMU. Tightly coupled fusion combines rawer measurements inside one estimator, which is harder to implement but often more accurate and robust.