Artificial intelligence in robotics is often associated with large language models, vision systems, or reinforcement learning. But one of the most transformative concepts emerging in modern AI — especially for robots and cyber-physical systems (CPS) — is the world model.

If LLMs help robots reason, and controllers help robots act, then world models help robots anticipate.

They allow a robot not just to react to the world, but to simulate it internally. World models are internal, learned representations of the environment that let an AI system simulate, predict, and reason about the consequences of its actions. They are typically implemented as neural networks trained to approximate the dynamics of the real world, including physics and spatial relationships, and advances in deep learning have made them practical. World models are also often described as a stepping stone on the path to AGI, because an agent can train inside them on a practically unlimited curriculum of rich simulated environments. They let a system ‘imagine’ different scenarios, test actions, and learn from virtual feedback, much as a self-driving car practices in a simulator.

This article provides a complete and technically grounded explanation of:

What a world model is

How it works mathematically

How it differs from classical control models

How it integrates into robotics architectures

Why world models are central to modern Physical AI

How they apply to drones, autonomous vehicles, and CPS

We’ll go deep technically — but we’ll also explain everything step by step.

1. What Is a World Model?

A world model is a learned predictive model that approximates how the environment evolves over time.

More formally:

A world model estimates the next state of the world given the current state and an action.

In equation form:

s(t+1) = f(s(t), a(t))

Where:

s(t) = current state

a(t) = action taken

f = learned model of environment dynamics

s(t+1) = predicted next state

Unlike reactive systems that wait for new sensor data, a world model allows a robot to:

Predict consequences

Simulate alternative actions

Plan ahead

Avoid costly mistakes

It acts as an internal simulator.
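To make the idea of an internal simulator concrete, here is a minimal sketch of repeatedly applying s(t+1) = f(s(t), a(t)). The point-mass dynamics inside `f` are a hand-written stand-in for a learned model, and the names `f`, `rollout`, `accelerate`, and `brake_late` are illustrative assumptions, not part of any library.

```python
import numpy as np

def f(state, action, dt=0.1):
    """Hypothetical dynamics: a 1-D point mass with state [position, velocity]."""
    position, velocity = state
    return np.array([position + velocity * dt, velocity + action * dt])

def rollout(state, actions):
    """Apply s(t+1) = f(s(t), a(t)) repeatedly and return every visited state."""
    states = [np.asarray(state, dtype=float)]
    for action in actions:
        states.append(f(states[-1], action))
    return np.stack(states)

# Compare two candidate plans without moving the real robot.
start = np.array([0.0, 0.0])
accelerate = rollout(start, [1.0] * 10)
brake_late = rollout(start, [1.0] * 5 + [-1.0] * 5)

print("final state if we keep accelerating:", accelerate[-1])
print("final state if we brake halfway:    ", brake_late[-1])
```

Both plans are evaluated purely in imagination; the robot would only execute the one whose predicted outcome it prefers.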

2. Why World Models Matter in Robotics and CPS

Robots operate in the physical world, which is:

Noisy

Nonlinear

Delayed

Partially observable

Dynamic

In cyber-physical systems, actions can have irreversible consequences:

A drone tilts and loses stability

A robotic arm knocks over an object

A mobile robot collides

Reactive control is not enough.

To operate safely and intelligently, robots must answer:

“If I do this, what will happen next?”

That question is the essence of a world model.

3. Classical Models vs Learned World Models

Before machine learning, robotics relied on:

Analytical physics equations

Rigid-body dynamics

State-space models

Kalman filters

These are hand-engineered world models.

Example:

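x_dot = A x + B u

Where:

x = state vector

u = control input

A = system matrix (how the state evolves on its own)

B = input matrix (how the control input affects the state)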

This works well for:

Industrial robots

Structured environments

Known kinematics
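As a rough illustration of a hand-engineered model, the linear state-space form x_dot = A x + B u can be simulated with forward-Euler integration. The damped spring system, its stiffness and damping values, and the function name `euler_step` are assumptions chosen only for this sketch.

```python
import numpy as np

# Hypothetical system: a unit mass on a damped spring.
# State x = [position, velocity]; input u = applied force.
A = np.array([[0.0, 1.0],
              [-2.0, -0.5]])   # assumed stiffness 2.0 and damping 0.5
B = np.array([[0.0],
              [1.0]])

def euler_step(x, u, dt=0.01):
    """One forward-Euler step of x_dot = A x + B u."""
    x_dot = A @ x + B @ u
    return x + dt * x_dot

x = np.array([1.0, 0.0])      # start displaced by 1, at rest
u = np.array([0.0])           # no external force
for _ in range(1000):         # simulate 10 seconds
    x = euler_step(x, u)

print("state after 10 s:", x)
```

Every number in `A` and `B` here has to be known in advance, which is exactly what becomes difficult outside structured environments.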

But real-world environments are messy:

Deformable objects

Friction variation

Uncertain terrain

Human interaction

Learned world models approximate dynamics from data rather than relying solely on analytical equations. Building them for physical AI systems requires extensive data collected from real-world environments, especially video and images from diverse terrains and conditions. Data curation, including filtering, annotation, classification, and deduplication, is a crucial step in both pretraining and continued training.
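The simplest possible version of learning dynamics from data is a least-squares fit of a linear model to recorded transitions. The sketch below assumes a hidden linear system (`A_true`, `B_true`) and synthetic data; real world models use neural networks and far richer observations, so treat this only as an illustration of the principle.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical "real" system the robot cannot see directly.
A_true = np.array([[1.0, 0.1],
                   [0.0, 0.9]])
B_true = np.array([[0.0],
                   [0.1]])

# Collect transitions (s, a, s_next) with a little sensor noise.
states = rng.normal(size=(500, 2))
actions = rng.normal(size=(500, 1))
next_states = states @ A_true.T + actions @ B_true.T
next_states += 0.01 * rng.normal(size=next_states.shape)

# Fit s_next = [s, a] @ W by least squares; W stacks A^T on top of B^T.
inputs = np.hstack([states, actions])
W, *_ = np.linalg.lstsq(inputs, next_states, rcond=None)
A_fit, B_fit = W[:2].T, W[2:].T

def predict(state, action):
    """Learned one-step model: s(t+1) = A_fit s(t) + B_fit a(t)."""
    return A_fit @ state + B_fit @ action

print("largest error in A:", np.abs(A_fit - A_true).max())
```

No physics equation was written down for the fit itself; the model recovers the dynamics from observed transitions alone.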

4. Types of World Models

World models can take several architectural forms.

4.1 Deterministic World Models

Predict a single next state:

s(t+1) = f(s(t), a(t))

Used when:

Environment is relatively stable

Uncertainty is low

4.2 Probabilistic World Models

Predict a distribution:

p(s(t+1) | s(t), a(t))

Used when:

Noise is significant

Multiple outcomes are possible

Common approaches:

Variational Autoencoders (VAEs)

Bayesian models

Stochastic latent variable models
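A probabilistic model returns a distribution instead of a single point. The sketch below assumes a Gaussian p(s(t+1) | s(t), a(t)) with a fixed, made-up standard deviation; it is a much simpler stand-in for the VAE-style and Bayesian approaches listed above. The names `predict_distribution` and `sample_rollouts` are illustrative.

```python
import numpy as np

rng = np.random.default_rng(0)

def predict_distribution(state, action, dt=0.1):
    """Hypothetical Gaussian model: mean and std of p(s(t+1) | s(t), a(t))."""
    position, velocity = state
    mean = np.array([position + velocity * dt, velocity + action * dt])
    std = np.array([0.01, 0.05])   # assumed noise levels, not measured values
    return mean, std

def sample_rollouts(state, actions, n_samples=200):
    """Sample many possible futures by drawing from the model at every step."""
    finals = np.empty((n_samples, 2))
    for i in range(n_samples):
        s = np.asarray(state, dtype=float)
        for a in actions:
            mean, std = predict_distribution(s, a)
            s = rng.normal(mean, std)
        finals[i] = s
    return finals

finals = sample_rollouts([0.0, 0.0], [1.0] * 10)
print("mean final state:", finals.mean(axis=0))
print("spread (std):    ", finals.std(axis=0))
```

The spread of the sampled final states is what a planner can use to judge how risky a plan is, not just where it is expected to end up.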

4.3 Latent Space World Models

Instead of predicting raw pixels or full states, these models learn a compressed representation:

z(t) = Encoder(observation)

This reduces computational load and allows efficient long-horizon simulation.

This approach is widely used in modern robotics research.

5. How a World Model Works Internally

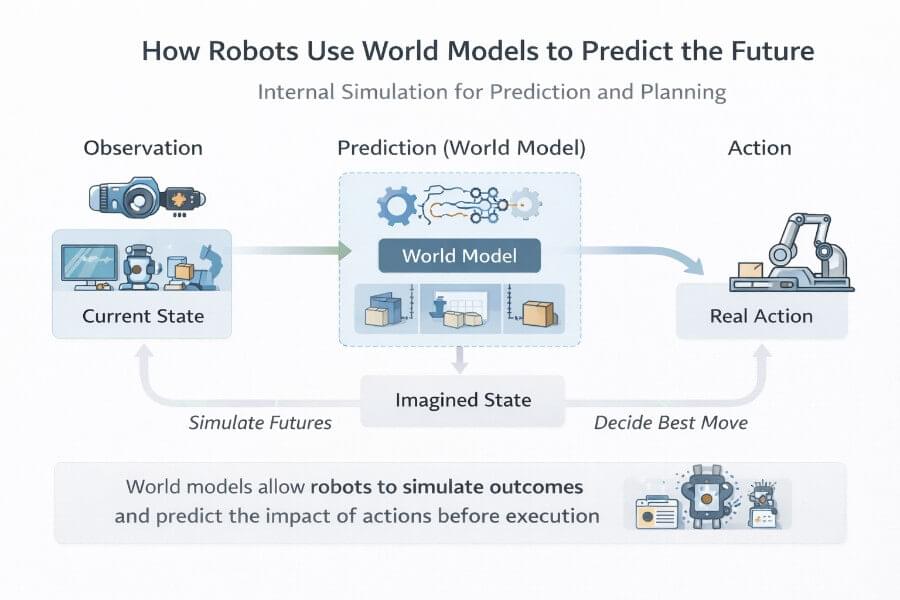

An agent built around a world model typically has three components:

Vision model: Encodes high-dimensional sensory input (like images) into a compact latent representation.

Memory model: Predicts future latent states based on current state and actions, often using recurrent neural networks (RNNs).

Controller model: Selects actions based on the latent state to achieve a goal.

Internal simulation gives the agent a kind of ‘mental map’ of how the world changes over time. This is what makes prediction possible: the agent can anticipate future sensory data and outcomes before they occur. The controller then chooses actions that maximize the expected cumulative reward over a rollout of the environment.

The predictive part of this stack can itself be broken down into three stages:

1. Encoder

Transforms raw observations (camera, lidar, IMU) into a compact state representation.

2. Dynamics Model

Predicts how that representation evolves under actions.

3. Decoder (Optional)

Reconstructs predicted observations.

Pipeline:

Observation → Encoder → Latent State

This allows:

Multi-step rollout simulation

Planning without acting

Imagined trajectories
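The encoder, dynamics model, and optional decoder can be wired together as in the following sketch. Random linear maps with a tanh nonlinearity stand in for trained networks, and the dimensions and function names (`encode`, `step_latent`, `decode`, `imagine`) are assumptions made for illustration only.

```python
import numpy as np

rng = np.random.default_rng(0)
OBS_DIM, LATENT_DIM, ACTION_DIM = 64, 4, 2

# Random weights stand in for trained networks (an assumption for illustration).
W_enc = rng.normal(scale=0.1, size=(LATENT_DIM, OBS_DIM))
W_dyn = rng.normal(scale=0.1, size=(LATENT_DIM, LATENT_DIM + ACTION_DIM))
W_dec = rng.normal(scale=0.1, size=(OBS_DIM, LATENT_DIM))

def encode(observation):
    """Encoder: compress a raw observation into a latent state z(t)."""
    return np.tanh(W_enc @ observation)

def step_latent(z, action):
    """Dynamics model: predict z(t+1) from z(t) and a(t)."""
    return np.tanh(W_dyn @ np.concatenate([z, action]))

def decode(z):
    """Optional decoder: map a latent state back to observation space."""
    return W_dec @ z

def imagine(observation, actions):
    """Encode once, then roll forward purely in latent space."""
    z = encode(observation)
    latents = [z]
    for action in actions:
        z = step_latent(z, action)
        latents.append(z)
    return np.stack(latents)

observation = rng.normal(size=OBS_DIM)
plan = rng.normal(size=(5, ACTION_DIM))
latents = imagine(observation, plan)
predicted_obs = decode(latents[-1])
print(latents.shape, predicted_obs.shape)
```

Note that the multi-step rollout never touches the 64-dimensional observation space; the decoder is only called if a predicted observation is actually needed.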

6. Model-Based Reinforcement Learning

World models are often used in model-based reinforcement learning (MBRL).

Instead of learning a policy directly from trial and error, the robot:

Learns a world model

Uses the model to simulate trajectories

Optimizes policy inside the model

Executes optimized policy in real world

This dramatically reduces:

Real-world risk

Training time

Hardware wear

This is crucial for drones and legged robots, where a failed real-world trial can damage the hardware. Not every world model plays the same role: some act as internal simulators for reinforcement learning, letting an agent predict the outcomes of its actions, while others focus on keeping their predictions spatially and temporally consistent.

Training is often iterative, especially for more complicated tasks: the agent explores its world, collects new observations, and uses them to improve its world model.
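The "simulate trajectories, then optimize inside the model" step can be sketched with random-shooting planning, one of the simplest model-based methods. It is used here in place of full policy optimization, and the point-mass `model`, the cost function, and all parameter values are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

def model(state, action, dt=0.1):
    """Stand-in for a learned world model: 1-D point mass [position, velocity]."""
    position, velocity = state
    return np.array([position + velocity * dt, velocity + action * dt])

def imagined_cost(state, actions, goal=1.0):
    """Roll the plan out inside the model and score where it ends up."""
    for action in actions:
        state = model(state, action)
    position, velocity = state
    return (position - goal) ** 2 + 0.1 * velocity ** 2

def plan(state, horizon=15, n_candidates=200):
    """Random shooting: sample action sequences, keep the cheapest imagined one."""
    candidates = rng.uniform(-1.0, 1.0, size=(n_candidates, horizon))
    costs = [imagined_cost(state, seq) for seq in candidates]
    return candidates[int(np.argmin(costs))]

# Replan at every step and execute only the first action (receding horizon).
state = np.array([0.0, 0.0])
for _ in range(30):
    action = plan(state)[0]
    state = model(state, action)   # here the "real world" is the same toy model

print("state after 30 steps:", state)
```

All 200 candidate plans per step are tried in imagination; only one action per step is ever executed, which is where the savings in risk and hardware wear come from.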

7. World Models vs LLMs, VLMs, and VLAs

World models operate in:

Continuous state space

Physical dynamics

Time-sensitive predictions

LLMs operate in:

Symbolic semantic space

Language tokens

Abstract reasoning

VLMs (vision-language models) operate in:

Perceptual-semantic grounding

VLAs (vision-language-action models) attempt:

Direct action generation

But none of those explicitly model:

Continuous physical state transitions under action.

That is the unique domain of world models.

8. Applications in Robotics

8.1 Manipulation

Predict:

Object slip

Contact forces

Trajectory stability

8.2 Mobile Robots

Simulate:

Future positions

Obstacle movement

Terrain response

8.3 Drones

Predict:

Wind disturbances

Inertial drift

Battery consumption

8.4 Autonomous Vehicles

Model:

Traffic participant behavior

Multi-agent interactions

Dynamic constraints

Autonomous machines, such as autonomous vehicles, use world models to anticipate pedestrian behavior or traffic changes and so make safer decisions. World models can also supply pre-labeled, encoded video data, which helps autonomous vehicles recognize the behavior of vehicles, pedestrians, and objects more accurately.

9. World Models in Cyber-Physical Systems (CPS)

CPS combine:

Software

Networking

Sensors

Actuators

Physical processes

Cyber-physical systems are used across industries including healthcare, manufacturing, and automotive, and world models are becoming integral to their development. A CPS is designed as a network of interacting elements with physical inputs and outputs, rather than as a standalone device. Computational elements, embedded systems, and software components are fundamental to CPS: they tie the physical and software sides together and make intelligent, adaptive behavior possible.

World models enhance CPS by:

Anticipating failures

Optimizing resource usage

Predicting system degradation

Enabling predictive maintenance

In industrial robotics:

World models can forecast:

Wear patterns

Overheating

Structural fatigue

They extend beyond locomotion into system-level prediction.

10. Challenges of World Models

World models are not magic.

Challenges include:

Partial observability

Distribution shift

Compounding prediction errors

Long-horizon instability

Real-time computational cost

Training large world models on massive datasets can cost millions of dollars in GPU compute.

If prediction error accumulates, simulated trajectories diverge from reality.

Solutions include:

Frequent re-grounding with real observations

Hybrid physics-informed models

Uncertainty estimation
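The effect of accumulating error, and of re-grounding with real observations, can be shown with a deliberately tiny example. The "real" system and the slightly wrong model below are both made up: the model overestimates speed by 2 percent, so each step adds a small position error.

```python
DT = 0.1

def real_step(position):
    """Hypothetical true system: moves at 1.00 m/s."""
    return position + 1.00 * DT

def model_step(position):
    """Learned model with a small (assumed) error: believes the speed is 1.02 m/s."""
    return position + 1.02 * DT

def open_loop_error(start, horizon):
    """Roll the model forward without ever looking at the real system."""
    real, imagined = start, start
    for _ in range(horizon):
        real, imagined = real_step(real), model_step(imagined)
    return abs(imagined - real)

def regrounded_error(start, horizon, every=5):
    """Same rollout, but reset the model to the real observation every few steps."""
    real, imagined = start, start
    for t in range(horizon):
        if t % every == 0:
            imagined = real          # re-ground on a fresh observation
        real, imagined = real_step(real), model_step(imagined)
    return abs(imagined - real)

print("open-loop error after 50 steps: ", open_loop_error(0.0, 50))
print("re-grounded error after 50 steps:", regrounded_error(0.0, 50))
```

In this toy case the open-loop error grows in proportion to the horizon, while re-grounding caps it at the error accumulated since the last observation.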

11. World Models and Physical AI

The concept of Physical AI emphasizes:

Embodied intelligence

Prediction under physical constraints

Real-time adaptation

World models are central to Physical AI because they:

Bridge perception and action

Provide anticipatory intelligence

Enable safe planning

They allow robots not just to react — but to imagine. World models extend AI capabilities with deep understanding of spatial relationships and physical behavior in three-dimensional environments.

12. A Complete Robotics Architecture Including a World Model

A modern CPS robot may look like:

Human

Key components of world models include understanding physical principles and anticipating future states based on current actions.

Each layer has:

Different time constraints

Different abstraction levels

Different guarantees

World models sit between reasoning and control.

They translate high-level intent into physically plausible trajectories.

13. Virtual Environments for Training World Models

Virtual environments have become indispensable tools in the development and training of world models for robotics. By providing a safe, controlled, and highly customizable space, these environments allow researchers and engineers to simulate a vast array of scenarios that would be difficult, dangerous, or costly to reproduce in the real world. This is especially critical for training autonomous systems such as humanoid robots and autonomous vehicles, where real-world errors can have significant consequences.

Within these virtual environments, machine learning algorithms can process high-dimensional visual data, enabling robots to learn from rich, complex sensory inputs. This accelerates the development of artificial intelligence systems that are capable of performing complex tasks, from intricate assembly in manufacturing to delicate procedures in healthcare. By exposing robots to diverse situations and edge cases, virtual environments help ensure that world models are robust and adaptable when deployed in real-world settings.

Moreover, the use of virtual environments streamlines the collection of training data, allowing for rapid iteration and refinement of world models. This not only enhances the efficiency of the development process but also enables robots and other systems to better assist humans in a wide range of applications. As a result, industries such as manufacturing and healthcare benefit from more reliable, intelligent, and efficient autonomous systems, ultimately improving outcomes for both human workers and end users.

14. Integrating World Models into Physical Systems

Integrating world models into physical systems is a cornerstone of modern cyber-physical systems (CPS), where computational and physical elements are deeply intertwined. This integration enables systems to sense, predict, and respond to changes in the physical world with unprecedented accuracy and speed. By combining advanced computational models with real-world physical elements, CPS can achieve higher levels of safety, efficiency, and intelligent decision-making.

In practical terms, this means that world models are embedded within systems ranging from medical monitoring devices to automatic pilot avionics and the infrastructure of smart cities. For example, in medical monitoring, world models help predict patient health trends, enabling timely interventions and improved care. In aviation, automatic pilot systems rely on integrated world models to anticipate and respond to dynamic flight conditions, enhancing both safety and efficiency. Smart cities leverage these models to optimize traffic flow, energy usage, and emergency response, making urban environments safer and more responsive.

The development of such tightly integrated systems requires careful engineering to ensure that computational and physical components work seamlessly together. As world models become more sophisticated, their integration into physical systems will continue to drive advancements in CPS, enabling smarter, safer, and more efficient solutions across a wide range of real-world applications.

15. Why World Models Are the Future of Robotics

Reactive robots are fragile.

Predictive robots are robust.

World models:

Reduce collision risk

Improve energy efficiency

Enable complex manipulation

Support safe autonomy

As robots leave structured factory floors and enter:

Homes

Streets

Warehouses

Construction sites

Prediction becomes essential.

World models are shifting AI toward simulating physical laws and causal relationships, enhancing reliability in dynamic environments and allowing robots to anticipate possible outcomes.

Conclusion

A world model is not a chatbot.

It is not a controller.

It is not a planner.

It is an internal predictive engine.

It allows robots and cyber-physical systems to:

Anticipate consequences

Simulate alternative futures

Optimize actions before execution

In the evolution of robotics AI:

LLMs bring reasoning

VLMs bring perception

RL brings adaptability

Controllers bring stability

World models bring foresight

And foresight is what separates reactive machines from truly autonomous systems.